This is the multi-page printable view of this section. Click here to print.

Postgres

- pg_exporter v1.0.0 Released – Next-Level PostgreSQL Observability

- OrioleDB is Here! 4x Performance, Zero Bloat, Decoupled Storage

- OpenHalo: PostgreSQL Now Speaks MySQL Wire Protocol!

- PGFS: Using PostgreSQL as a File System

- PostgreSQL Frontier

- Pig, The PostgreSQL Extension Wizard

- Don't Update! Rollback Issued on Release Day: PostgreSQL Faces a Major Setback

- PostgreSQL 12 Reaches End-of-Life as PG 17 Takes the Throne

- The idea way to install PostgreSQL Extensions

- PostgreSQL 17 Released: The Database That's Not Just Playing Anymore!

- Can PostgreSQL Replace Microsoft SQL Server?

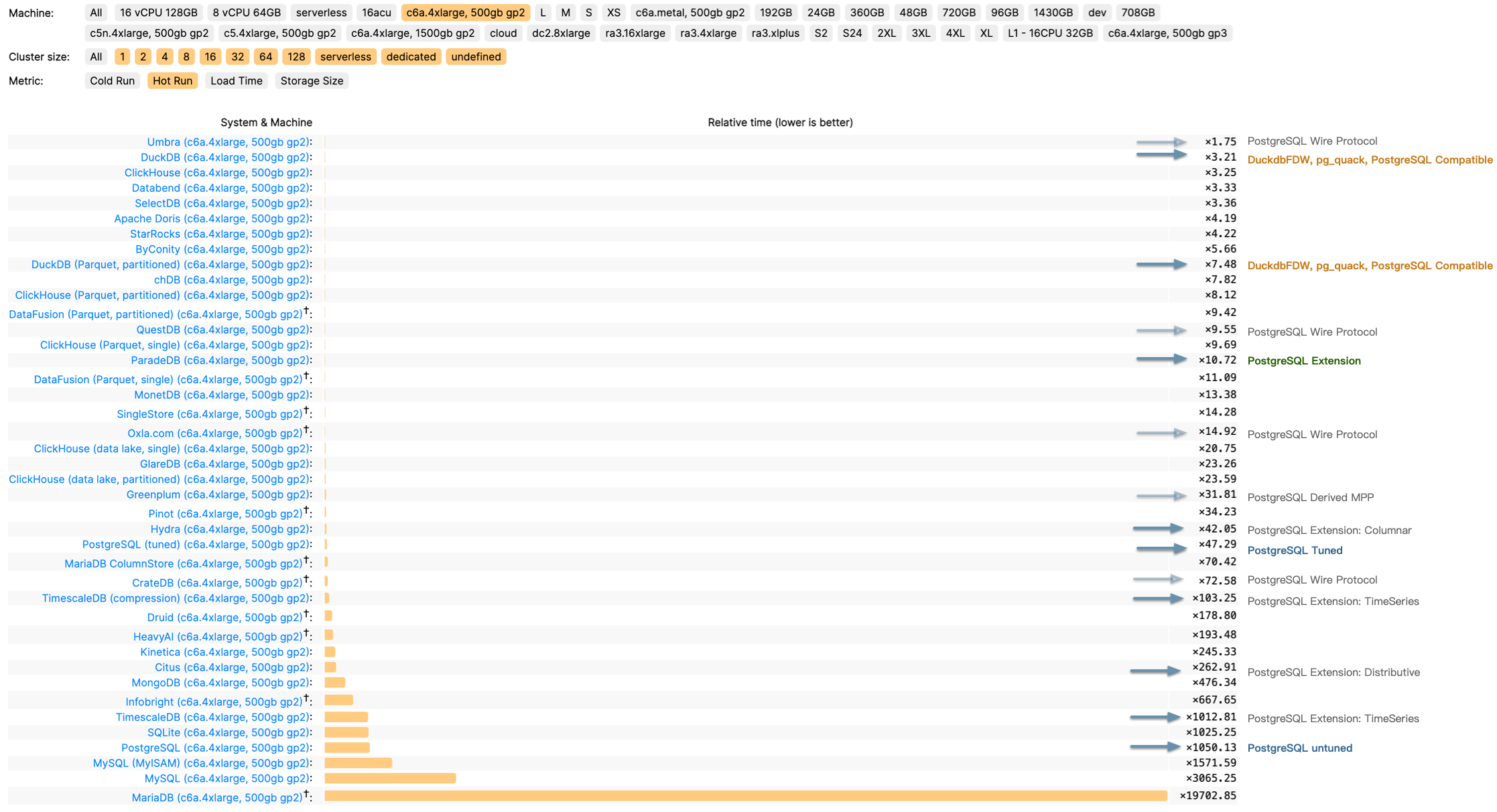

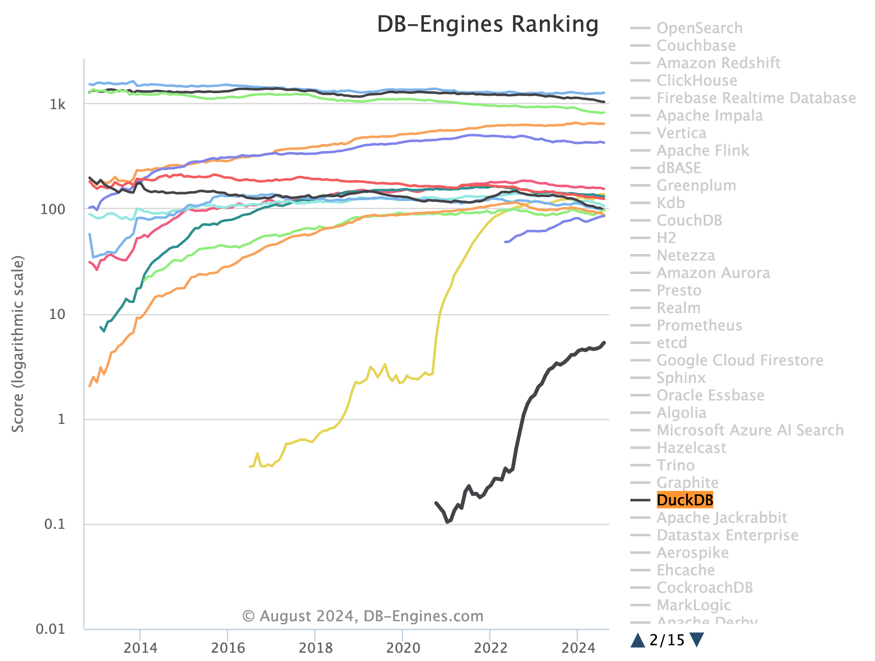

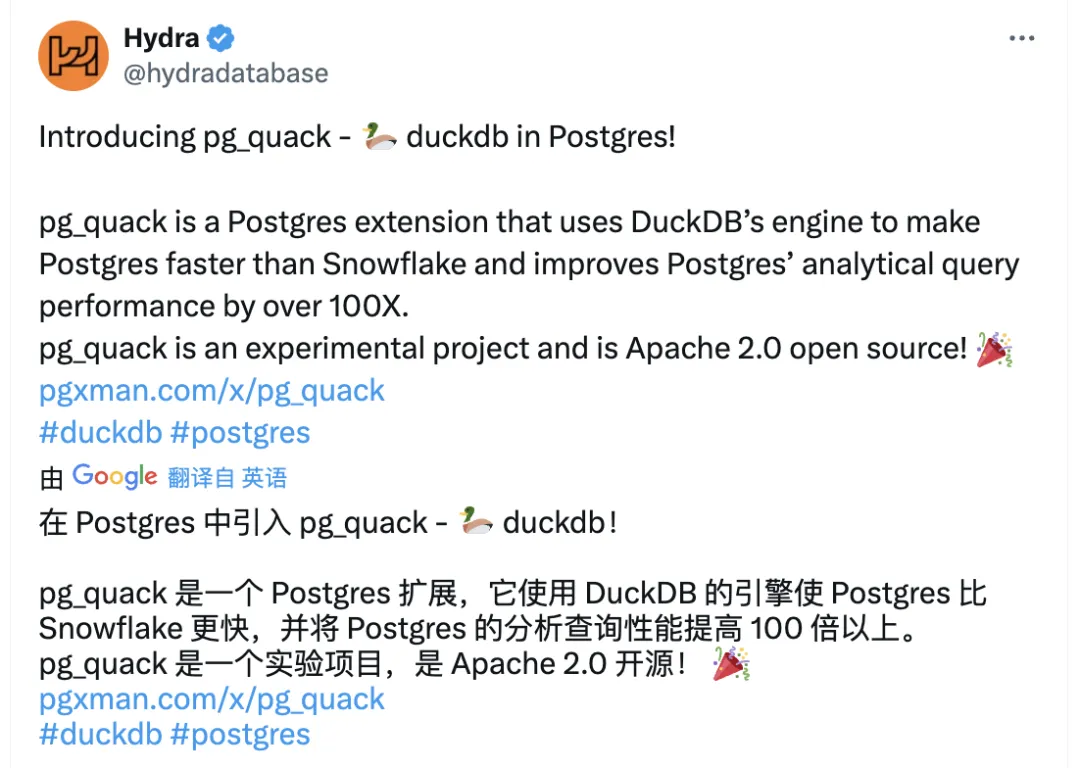

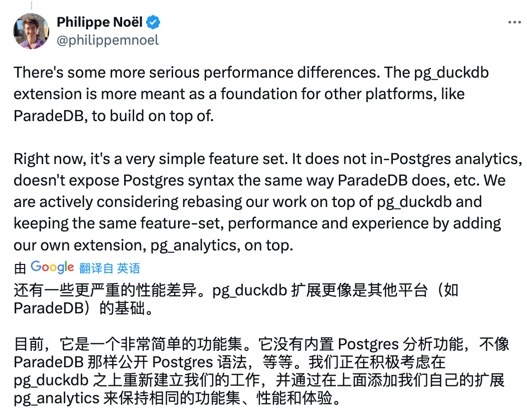

- Whoever Integrates DuckDB Best Wins the OLAP World

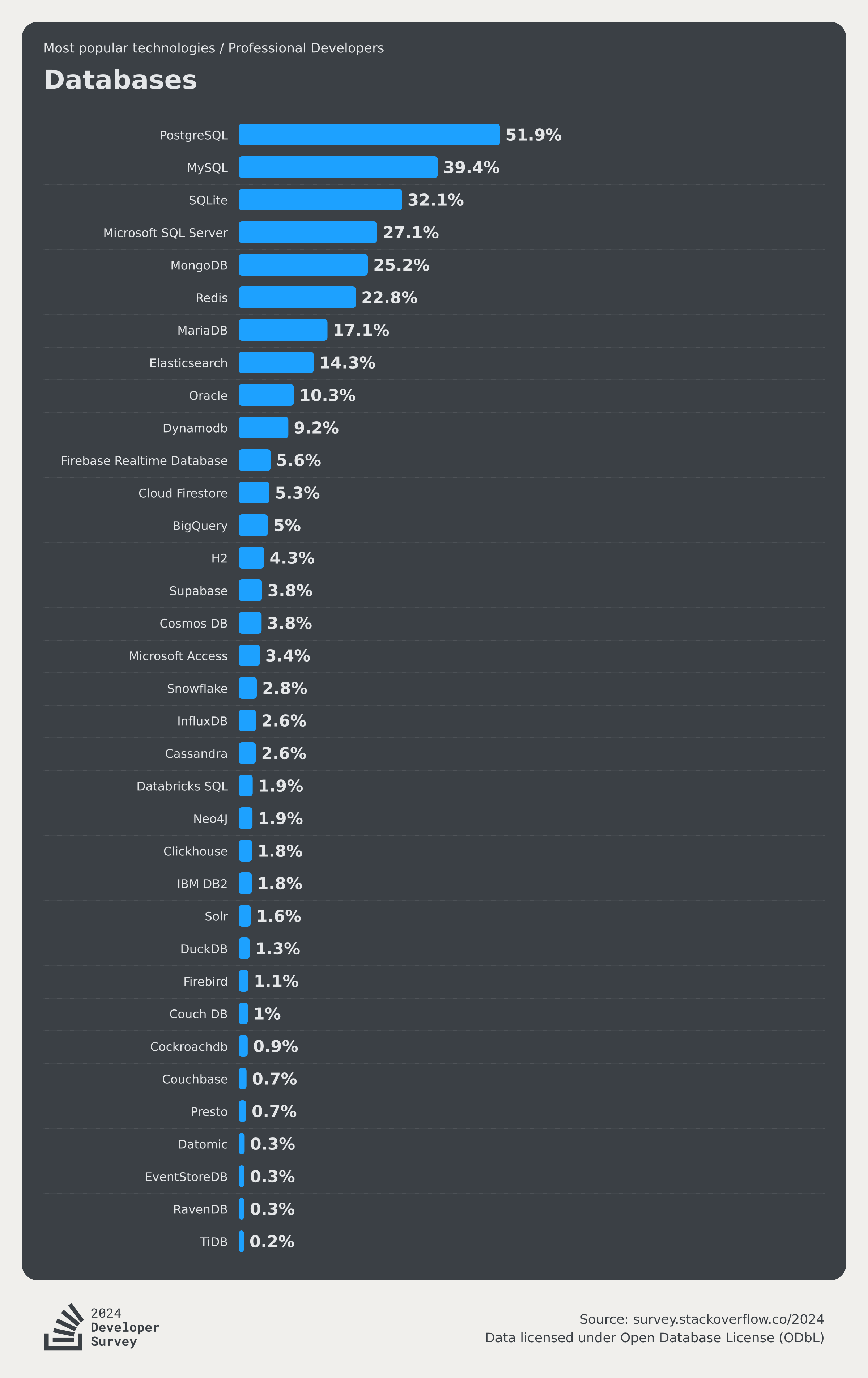

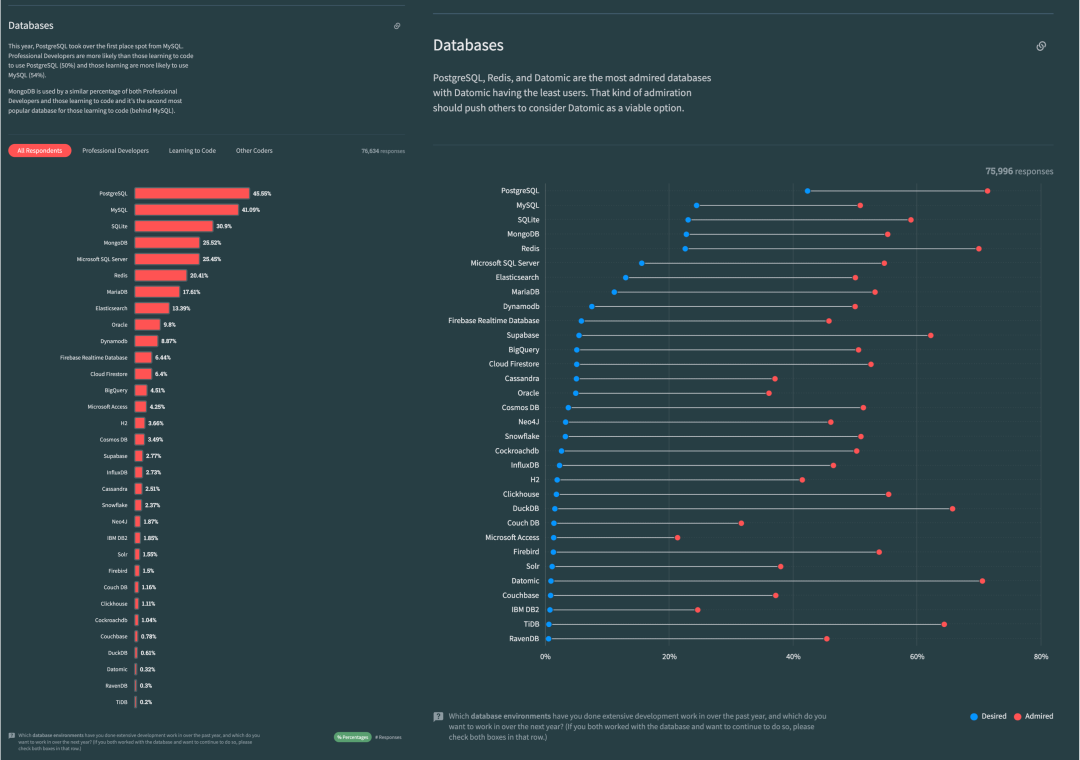

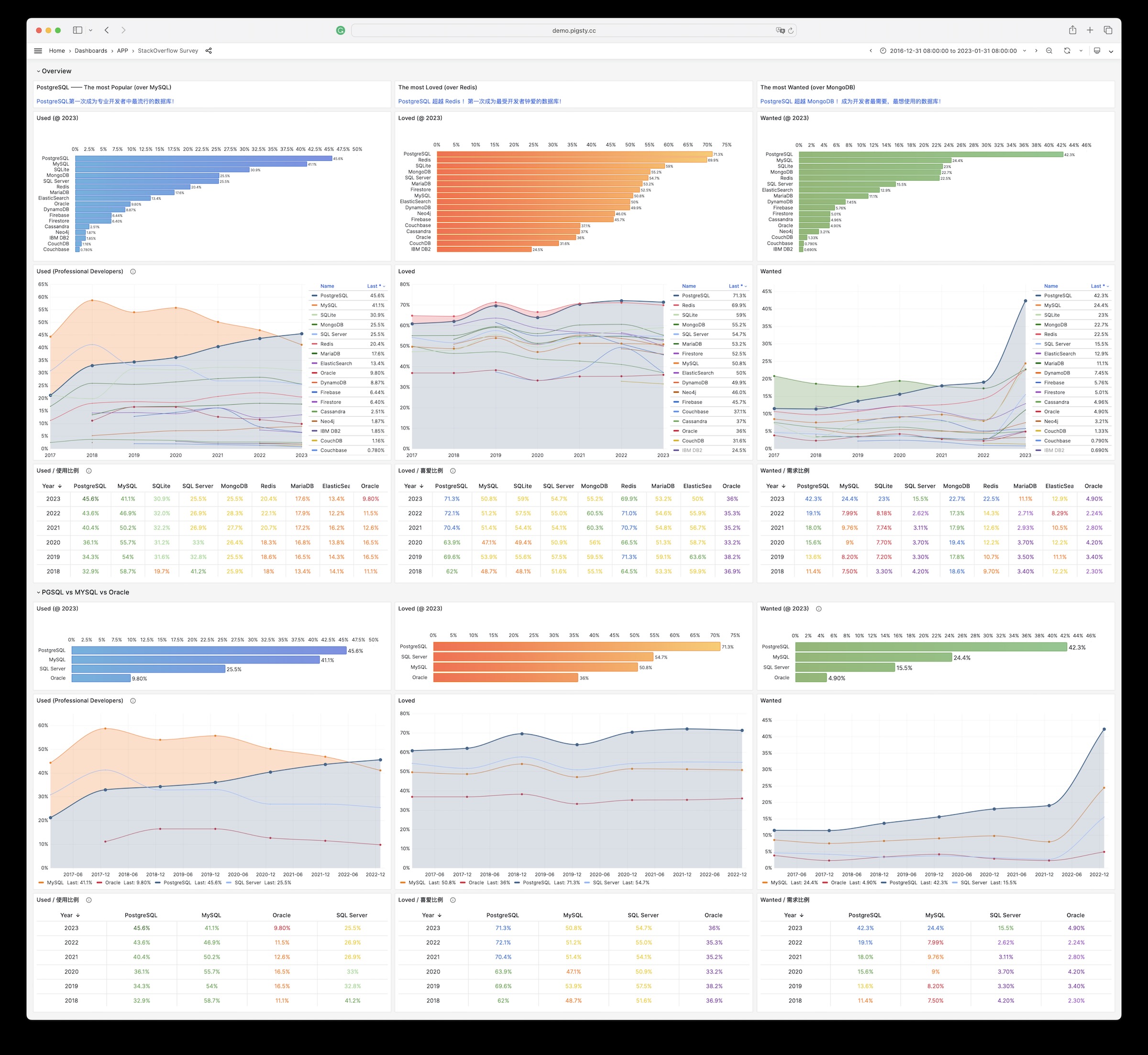

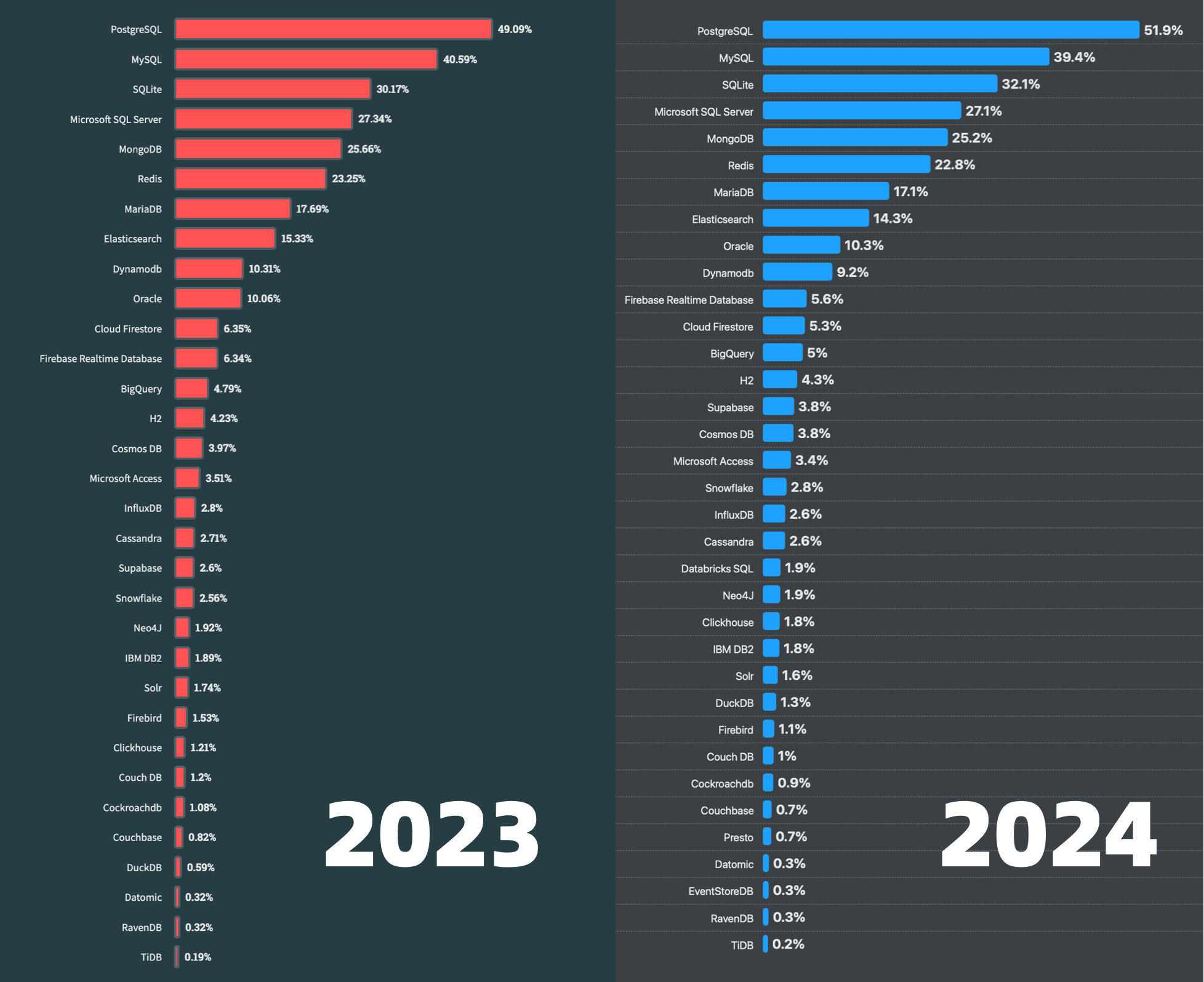

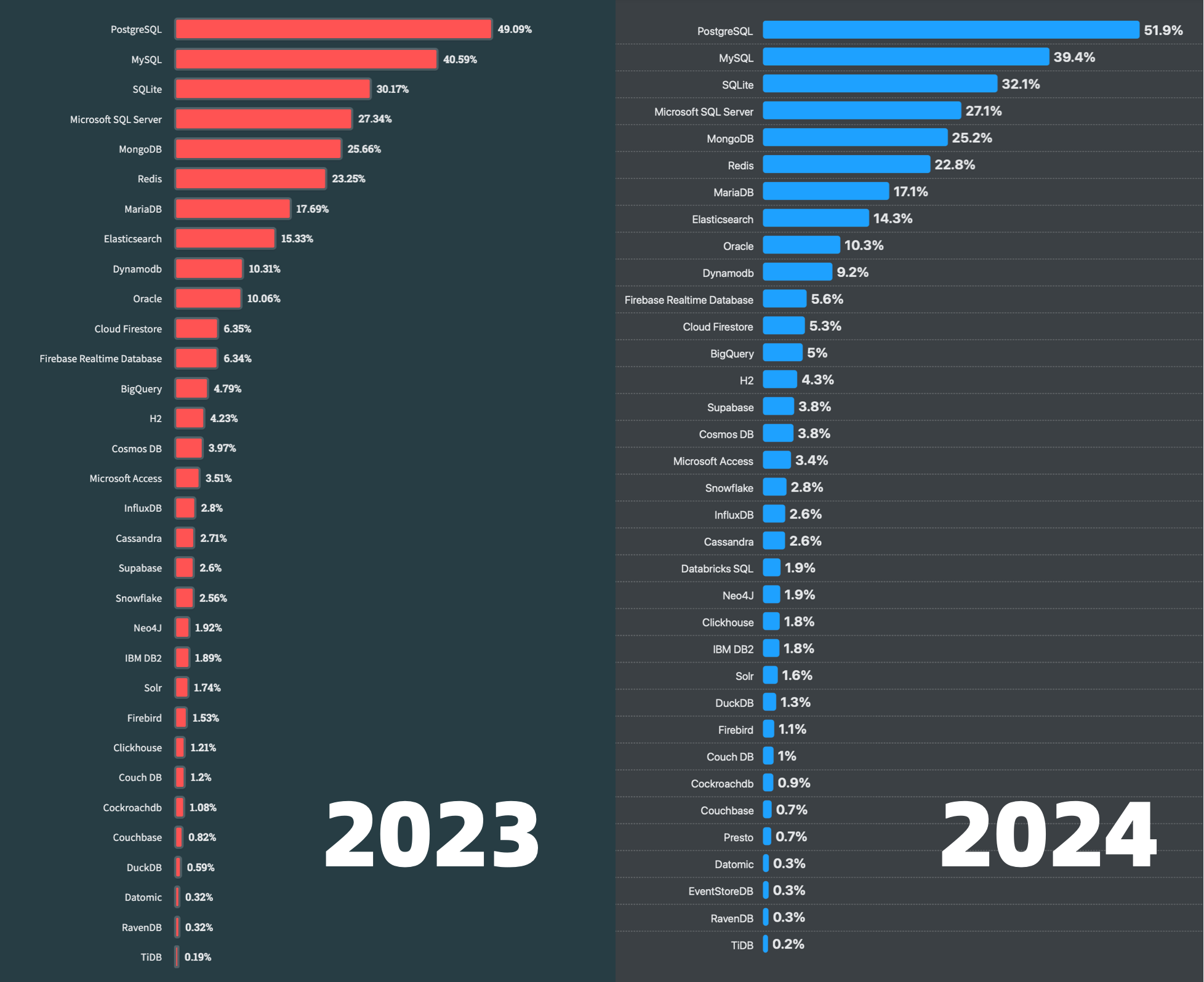

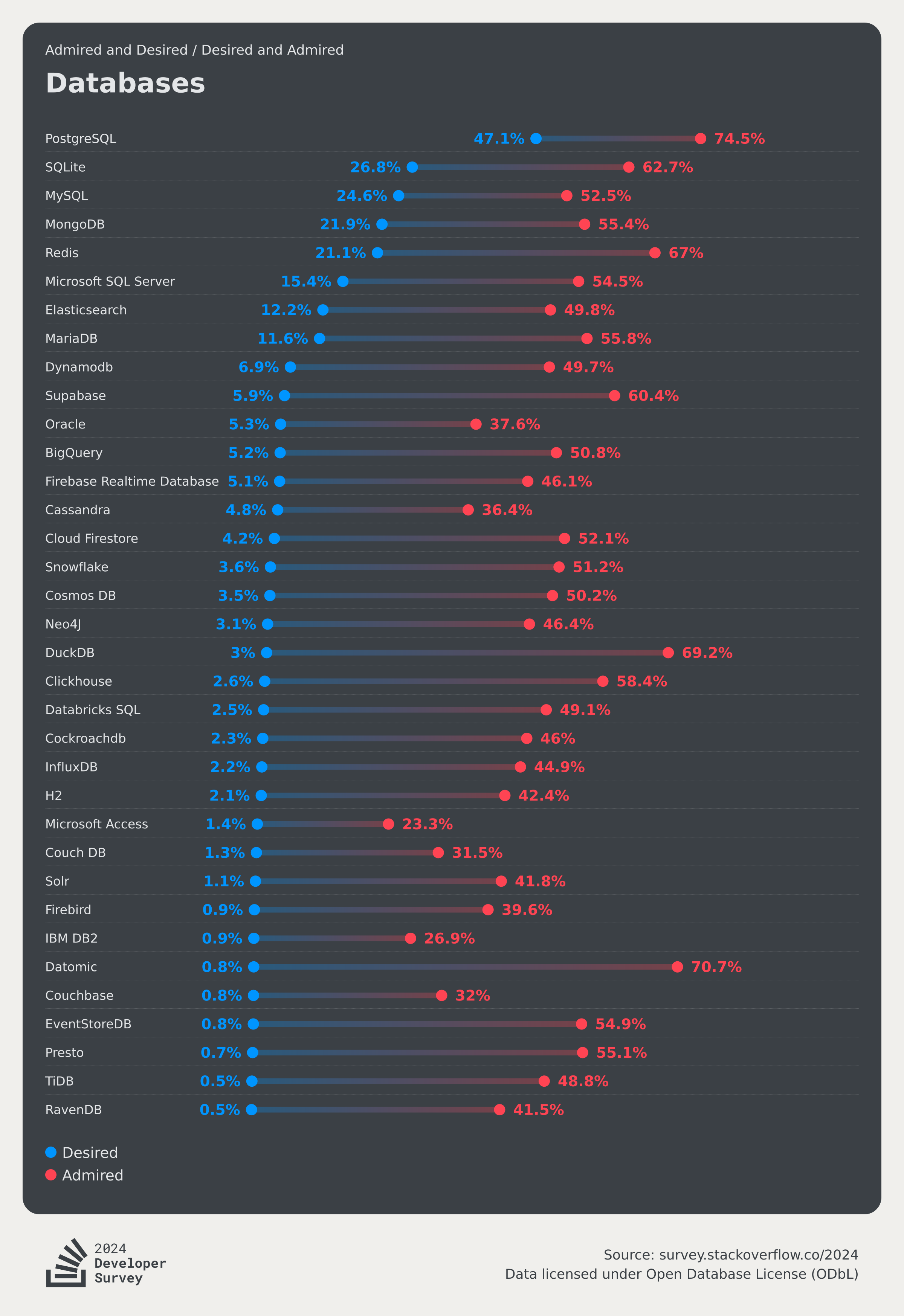

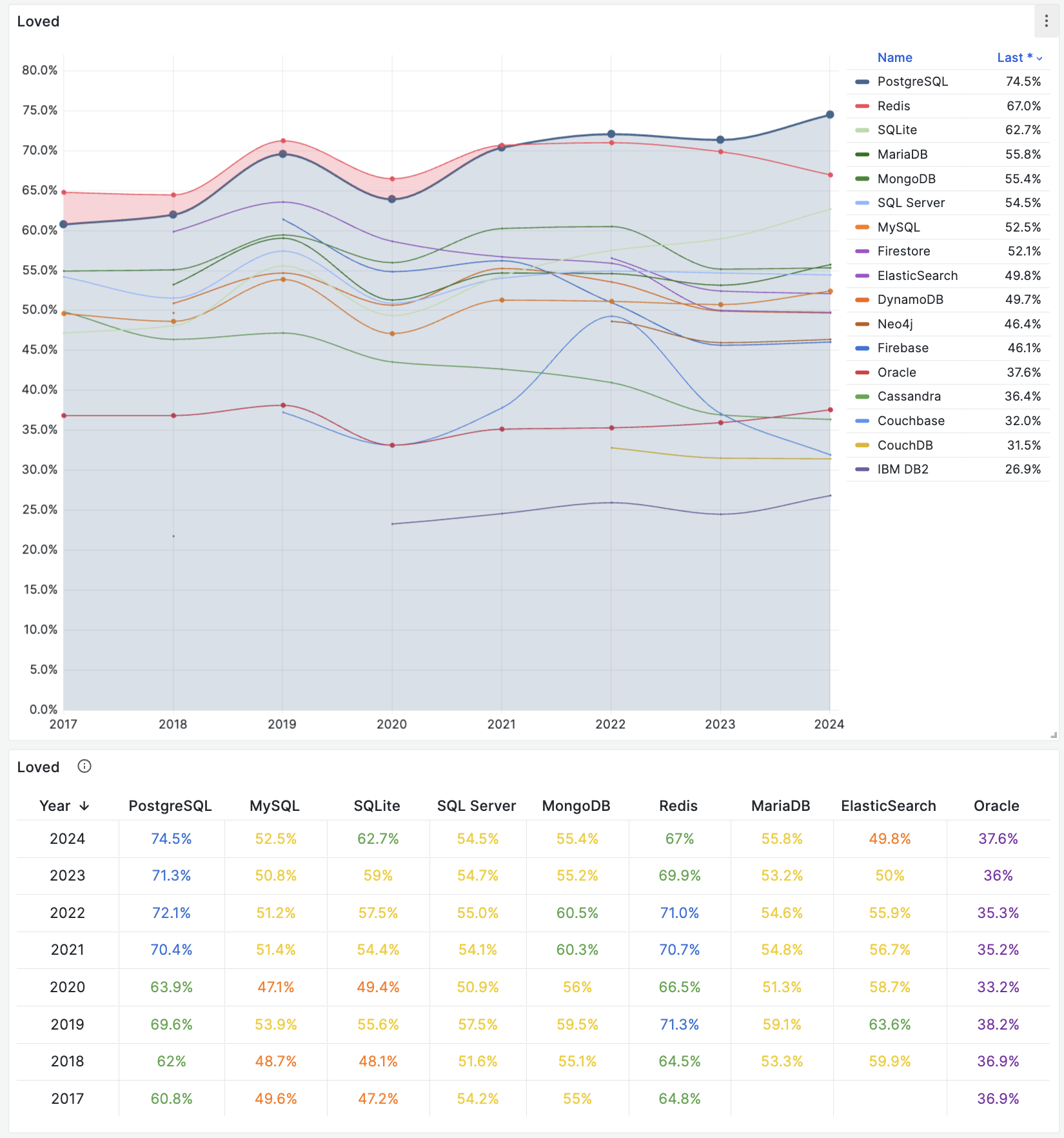

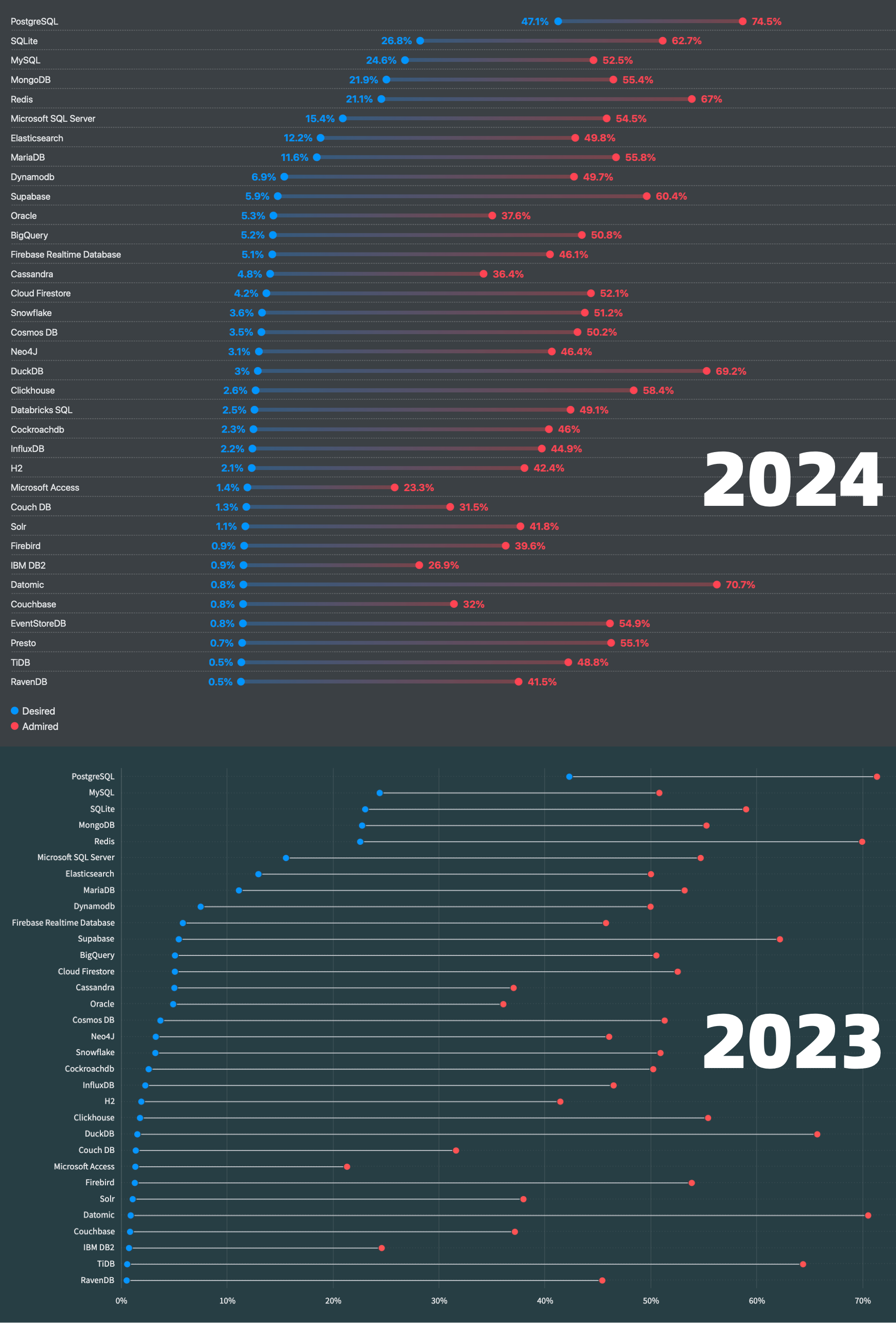

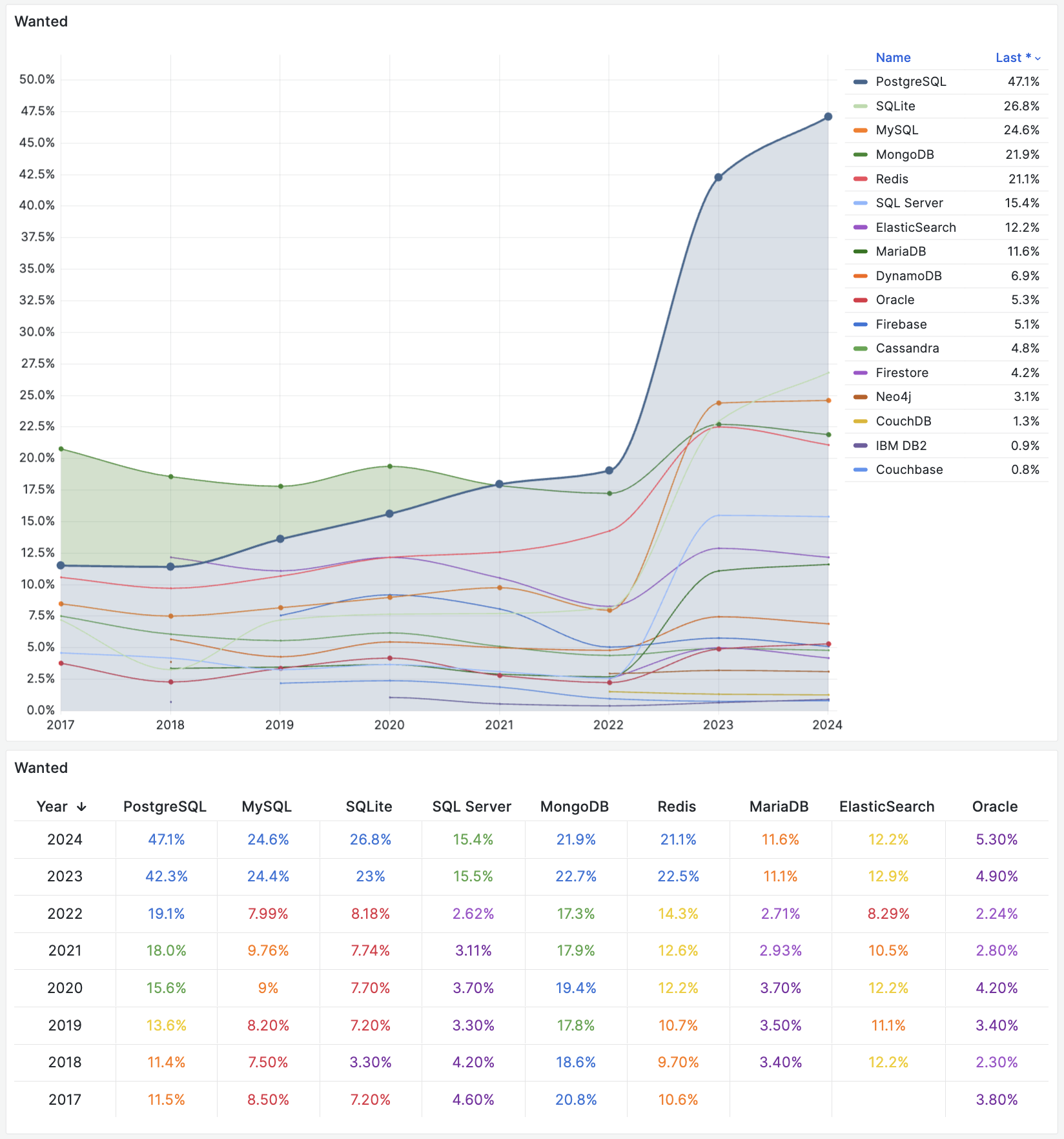

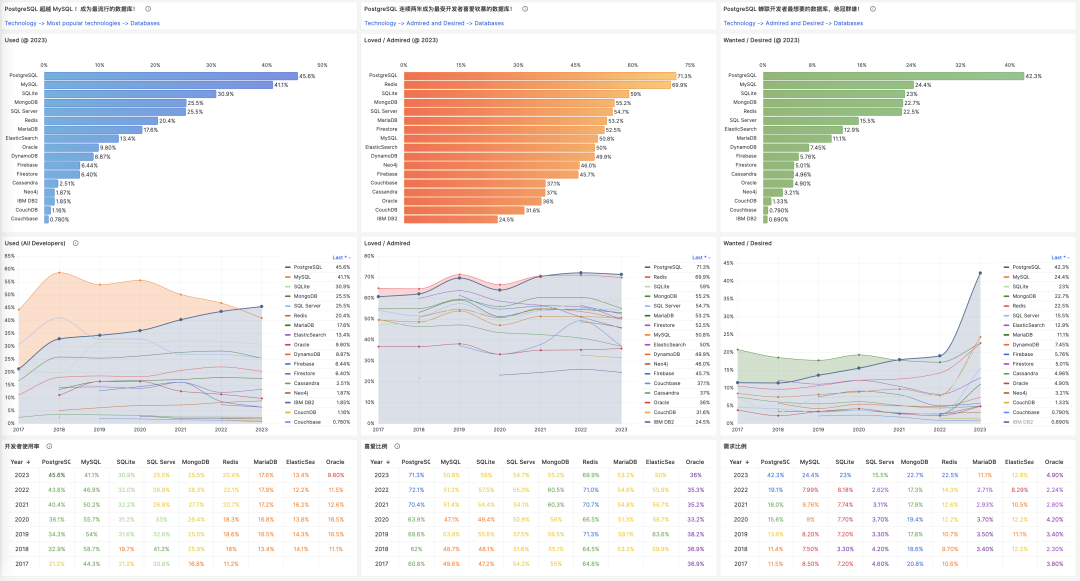

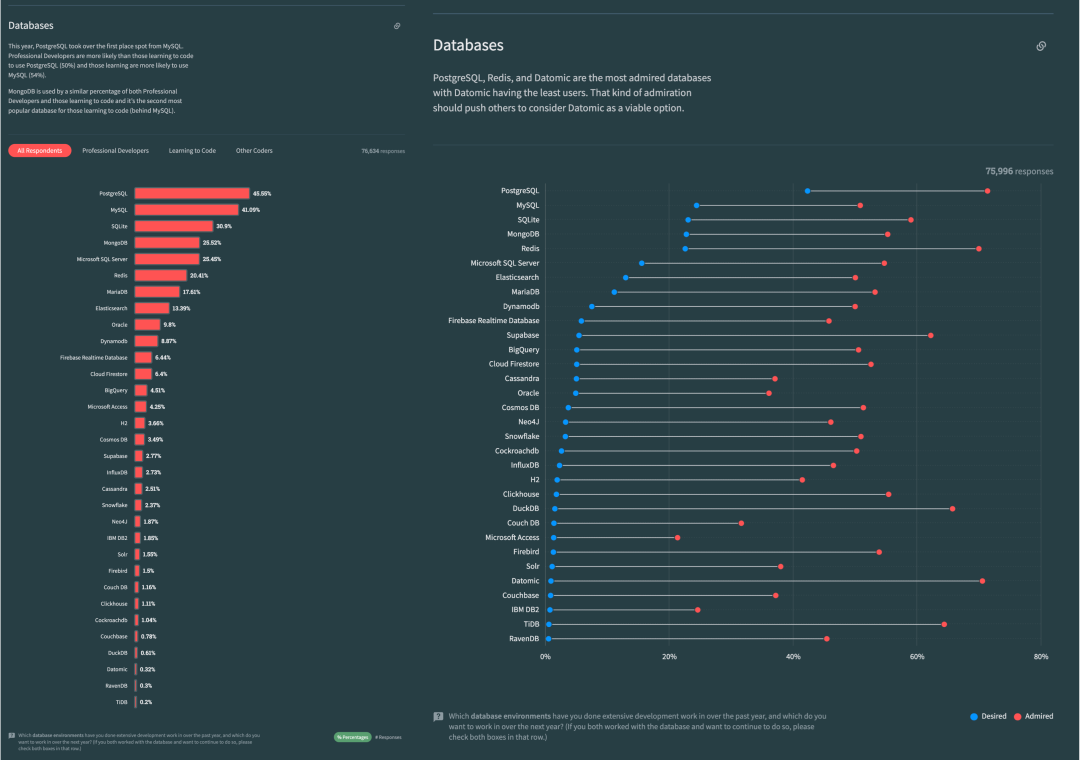

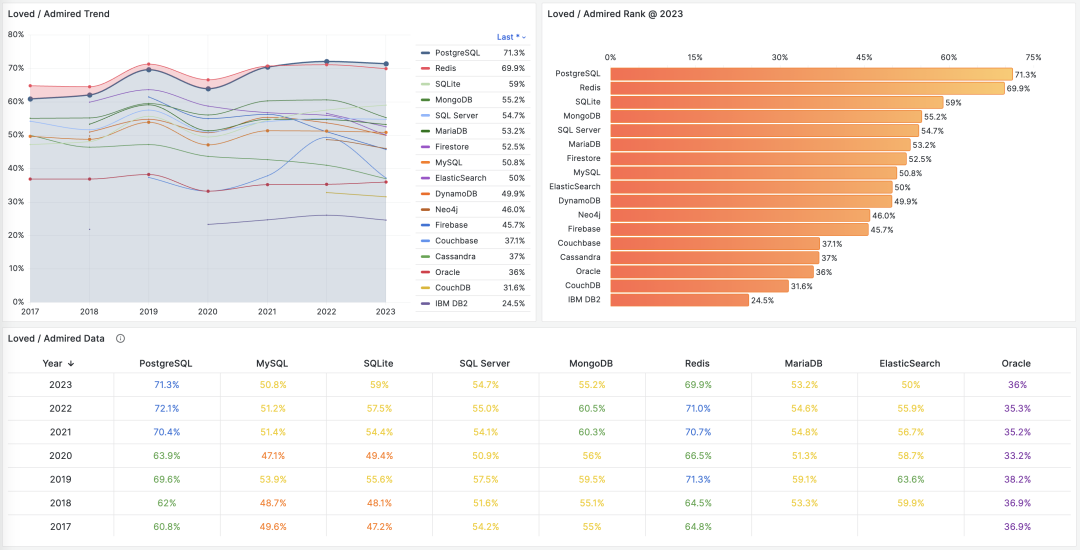

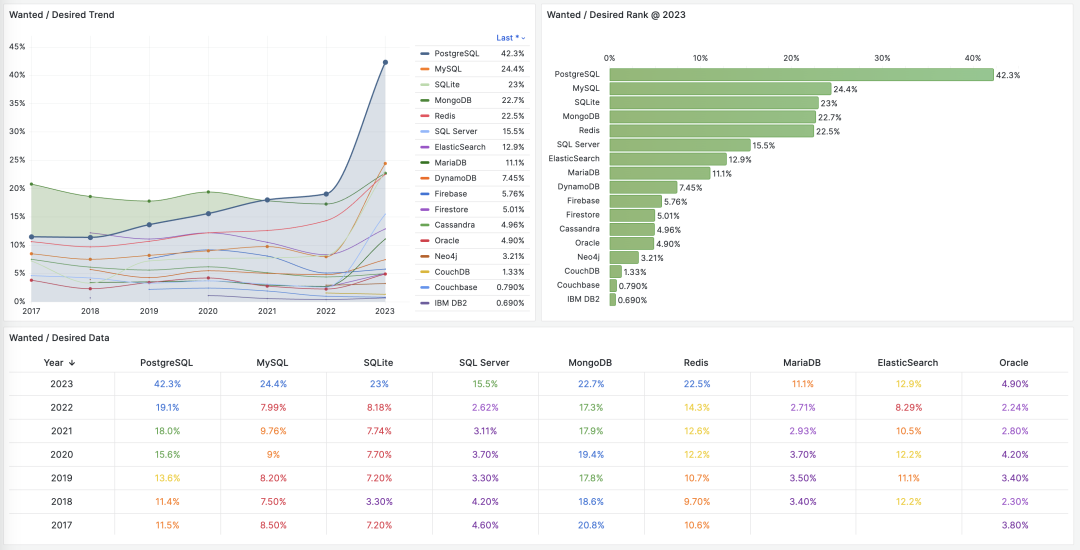

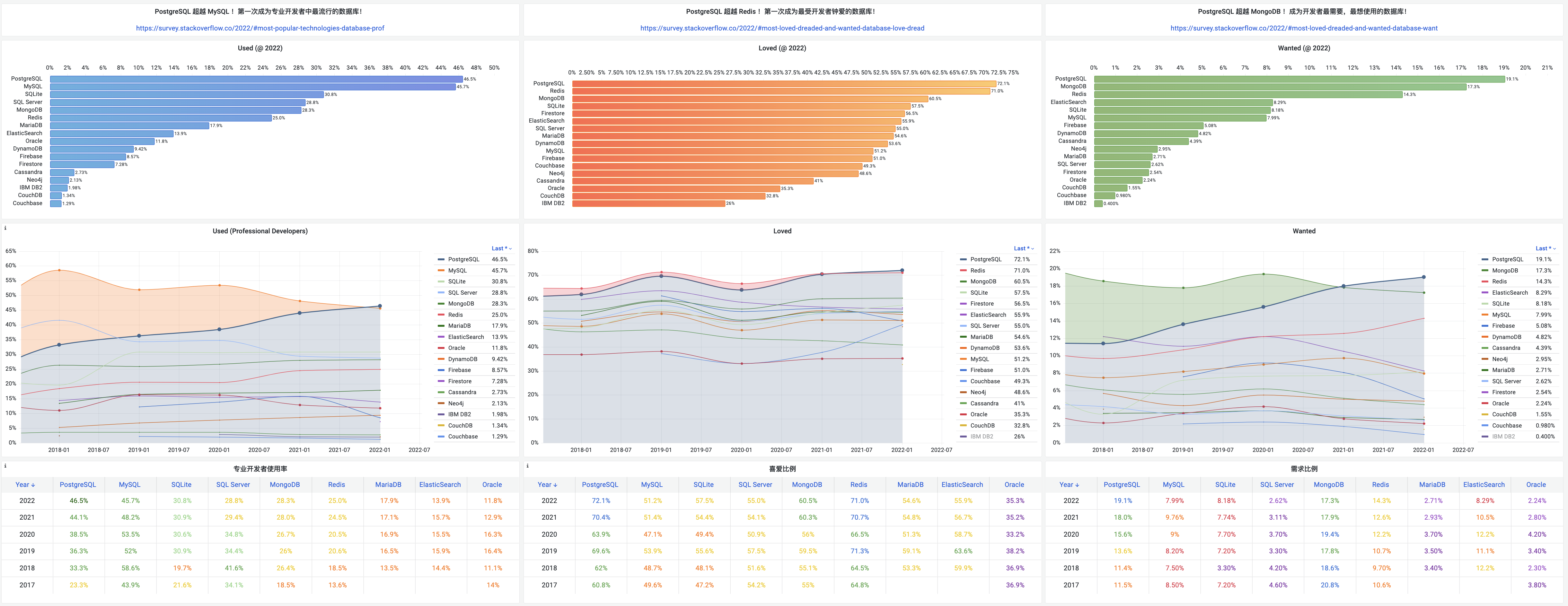

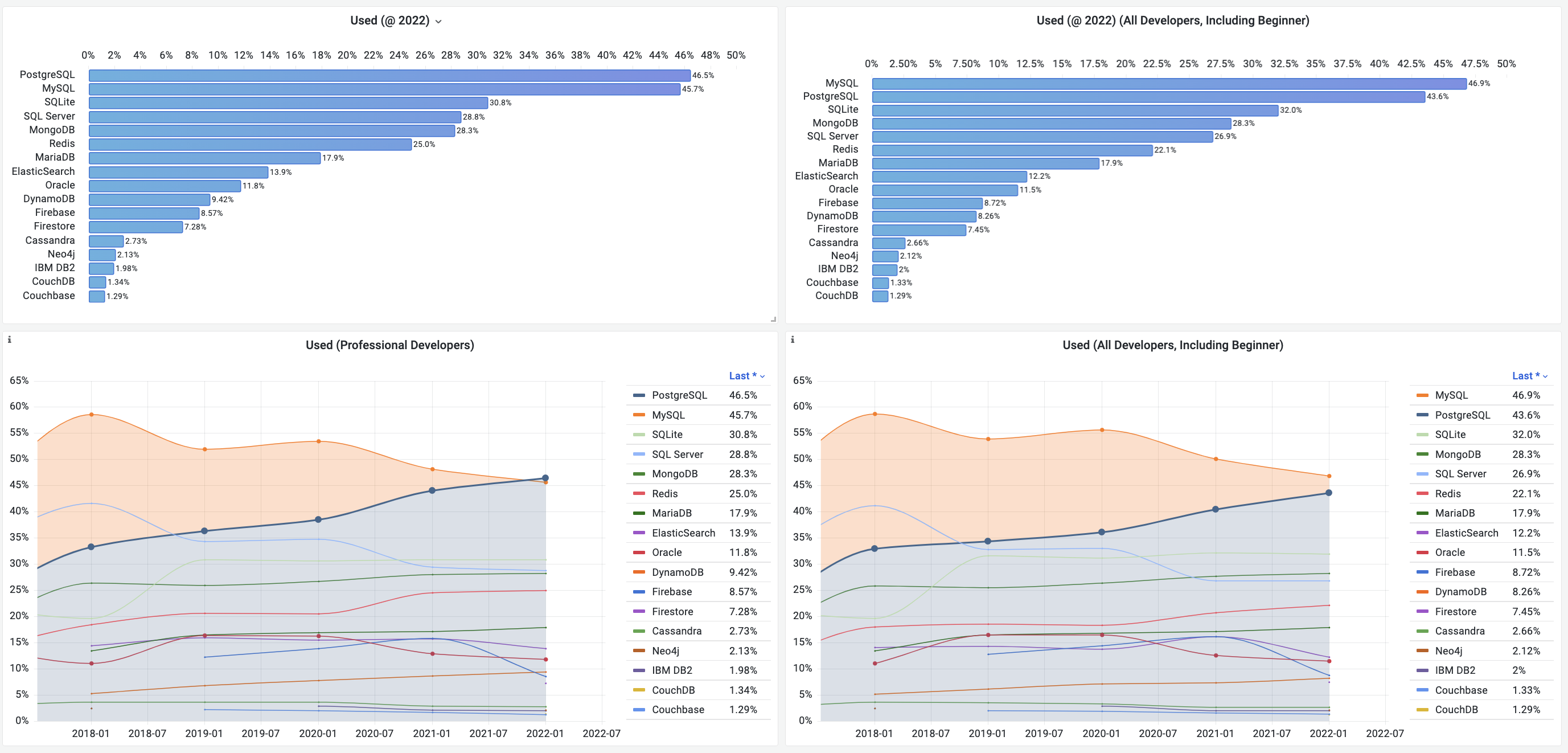

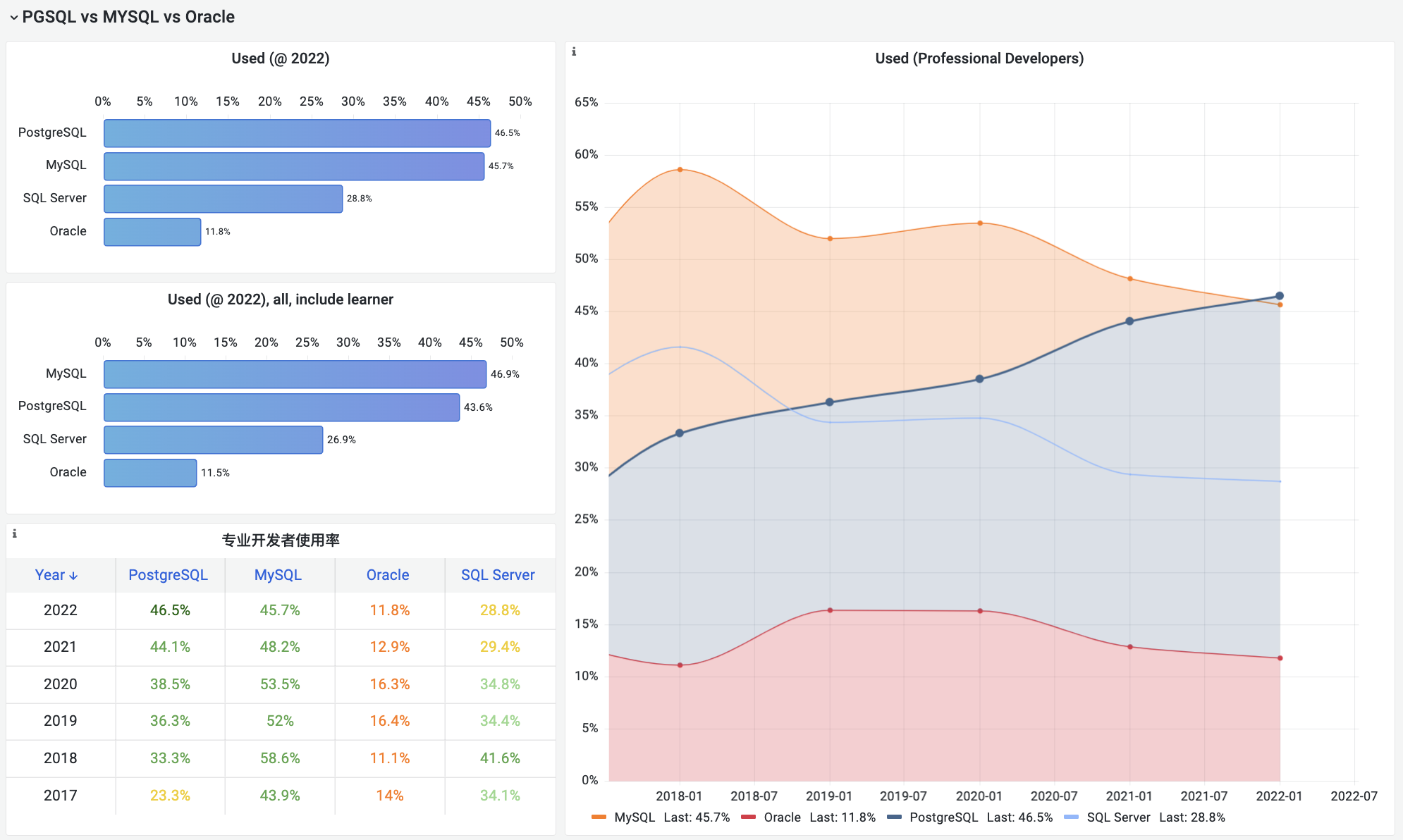

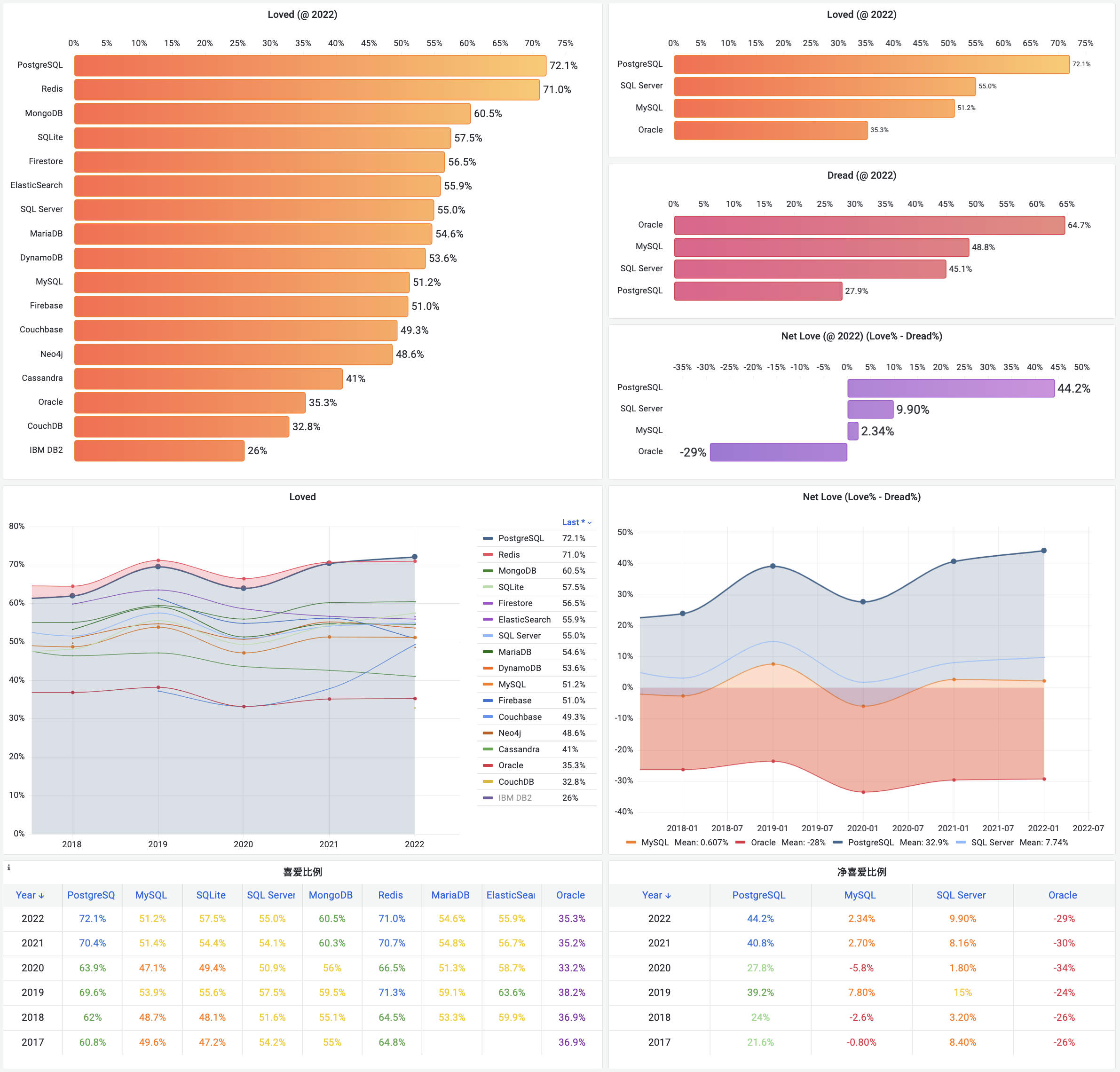

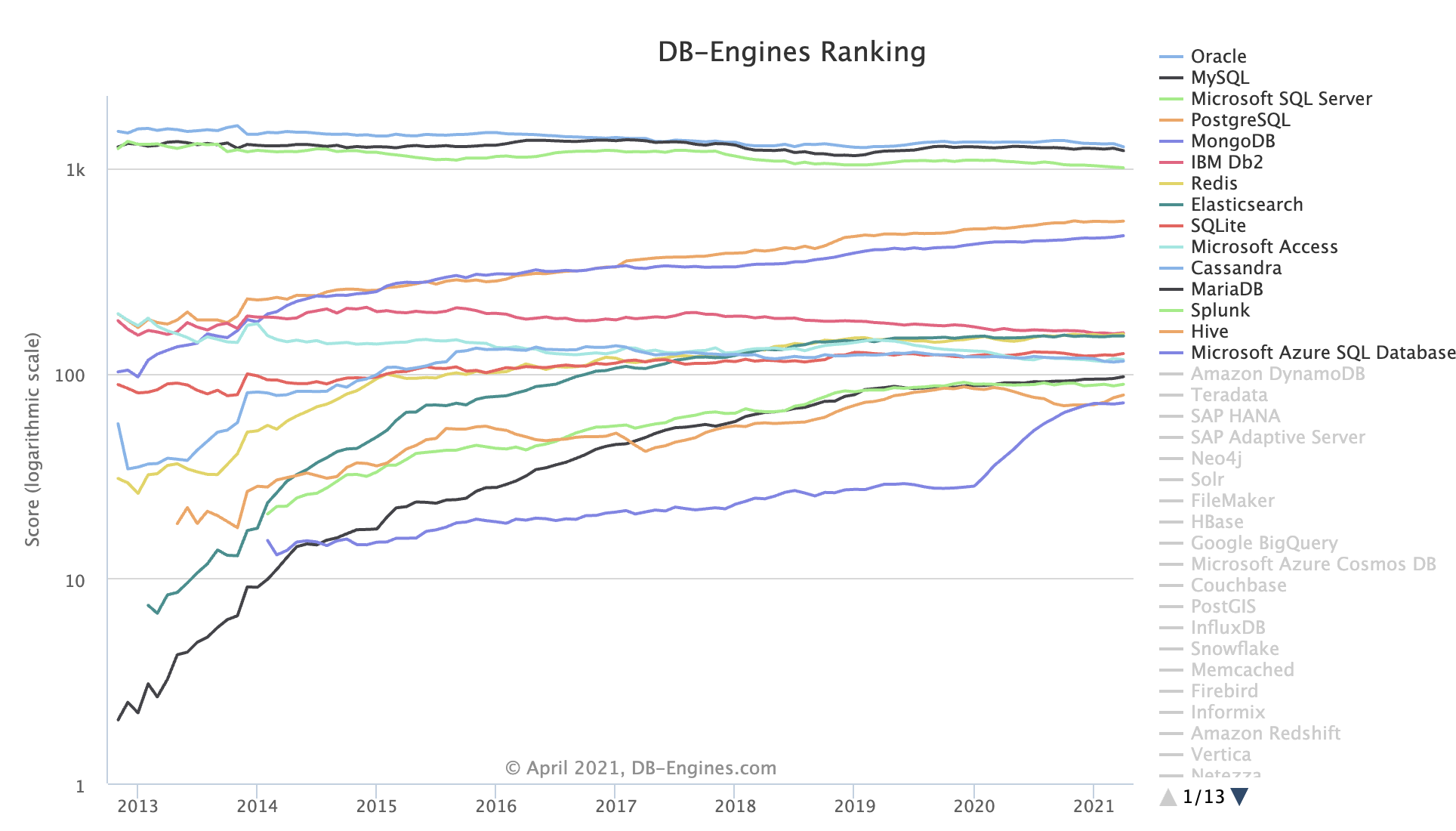

- StackOverflow 2024 Survey: PostgreSQL Is Dominating the Field

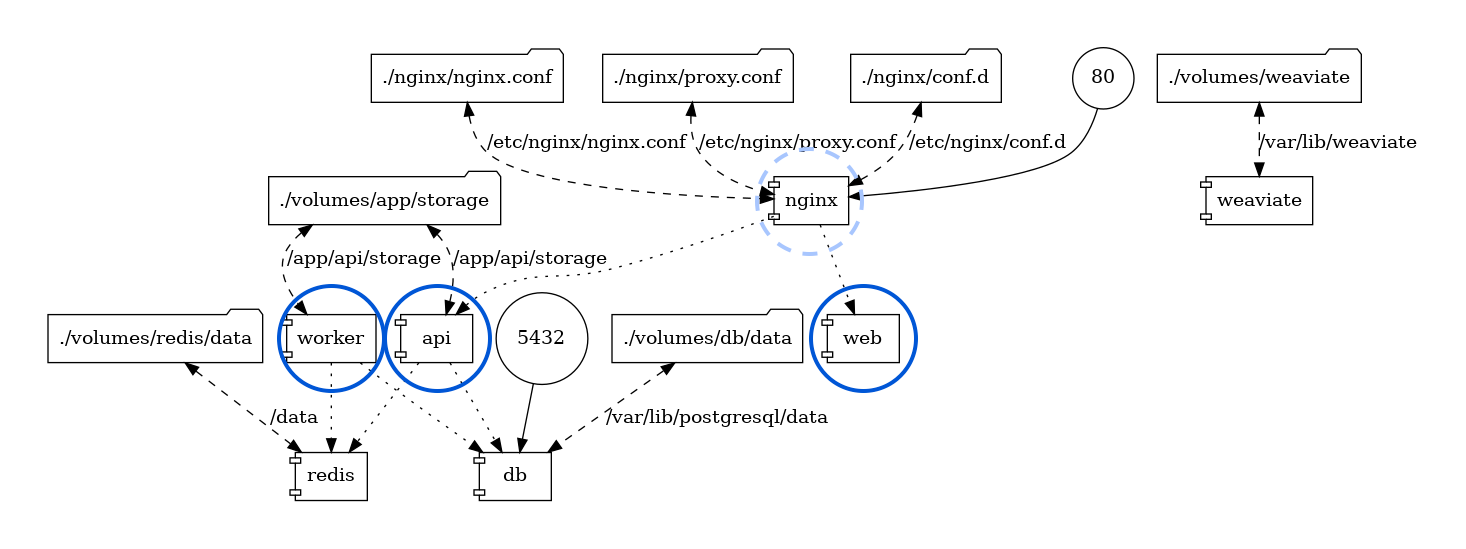

- Self-Hosting Dify with PG, PGVector, and Pigsty

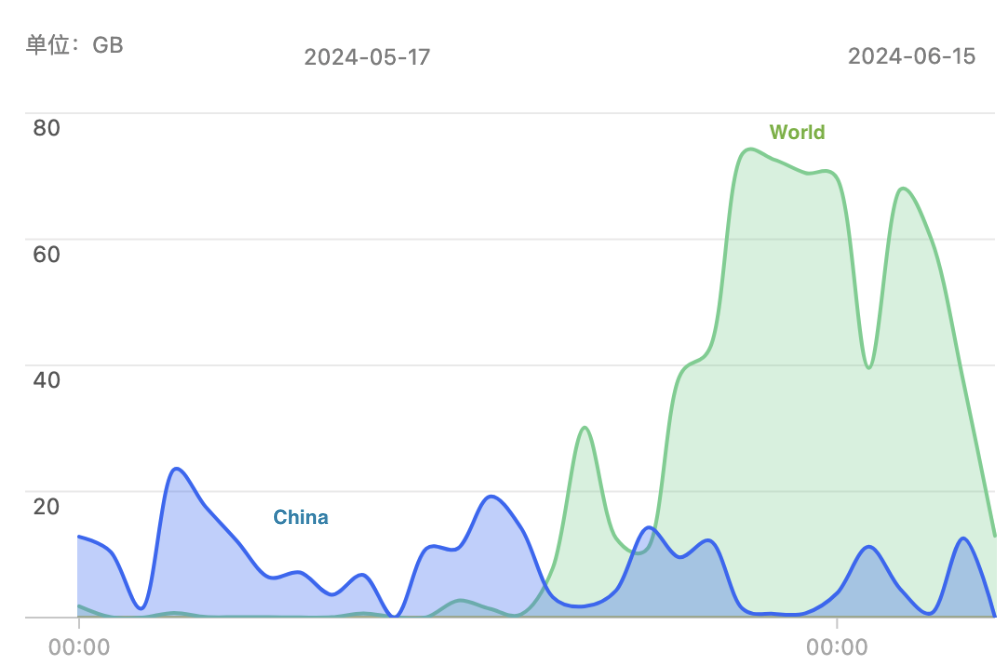

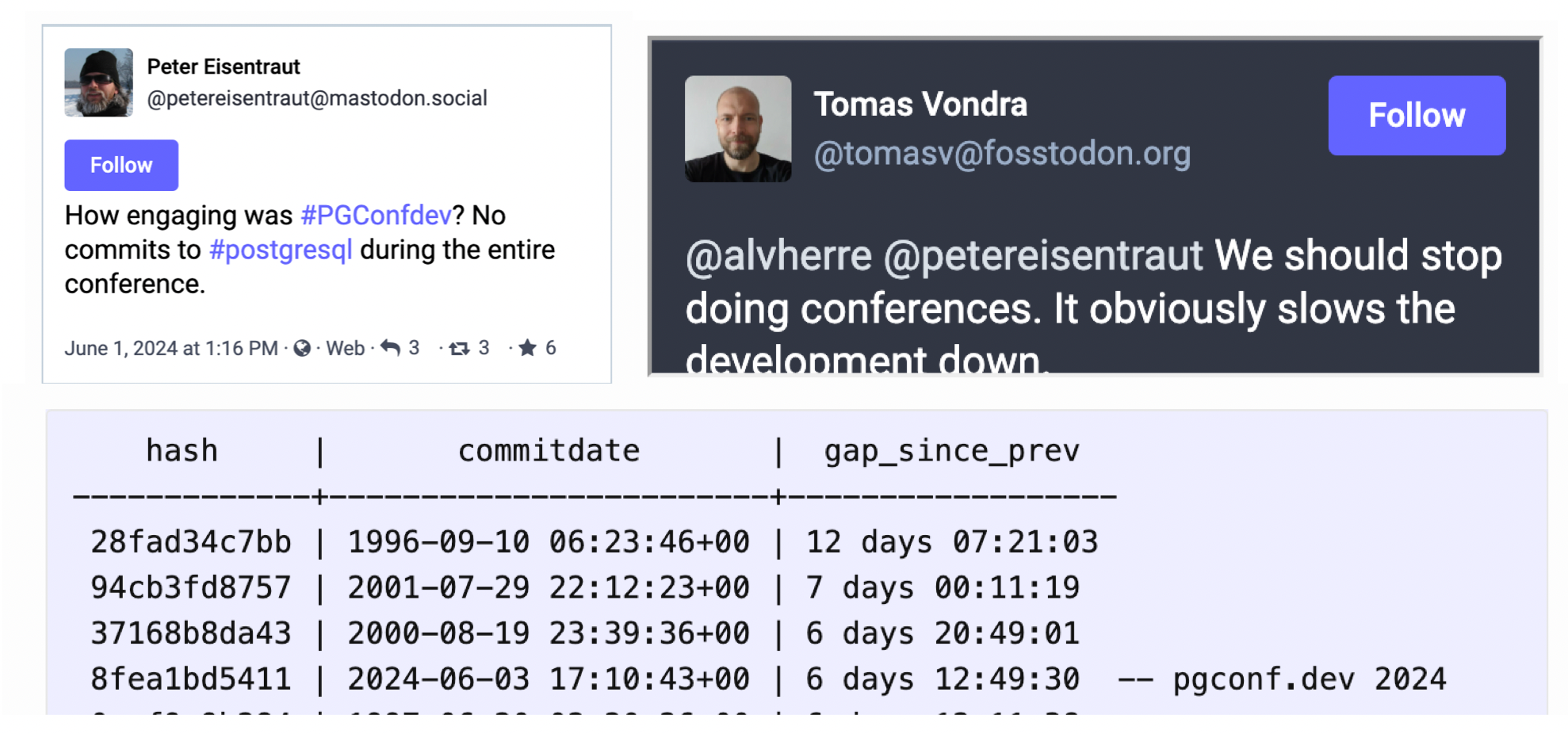

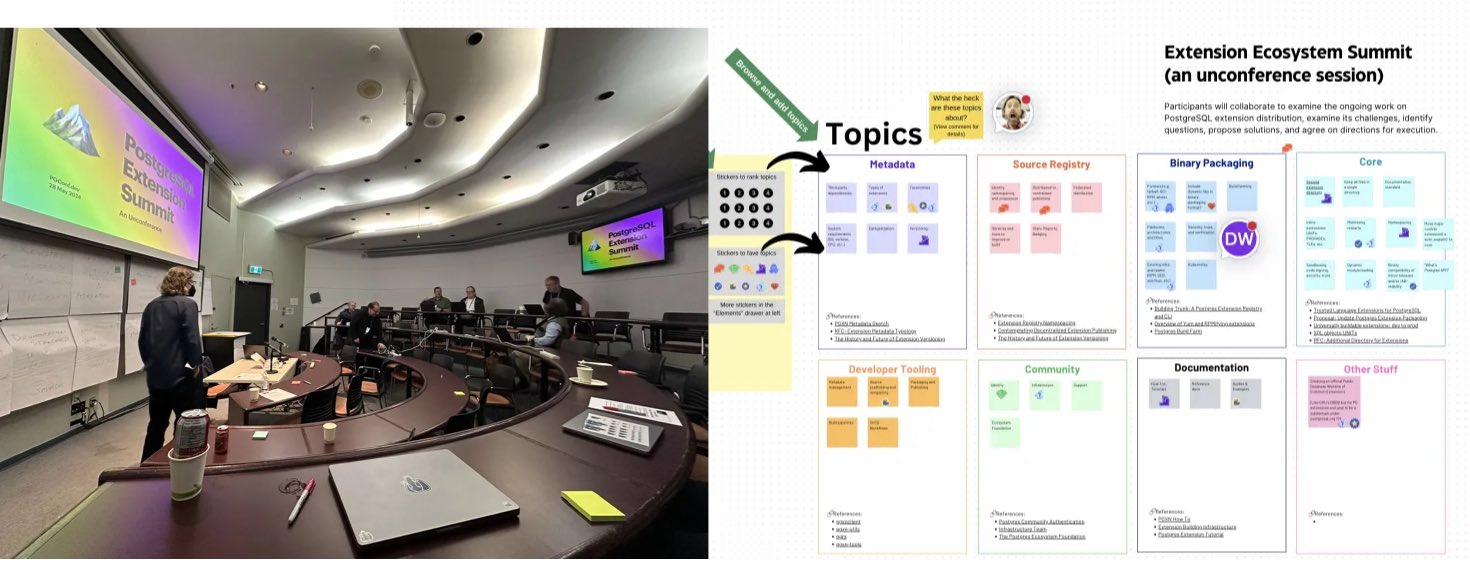

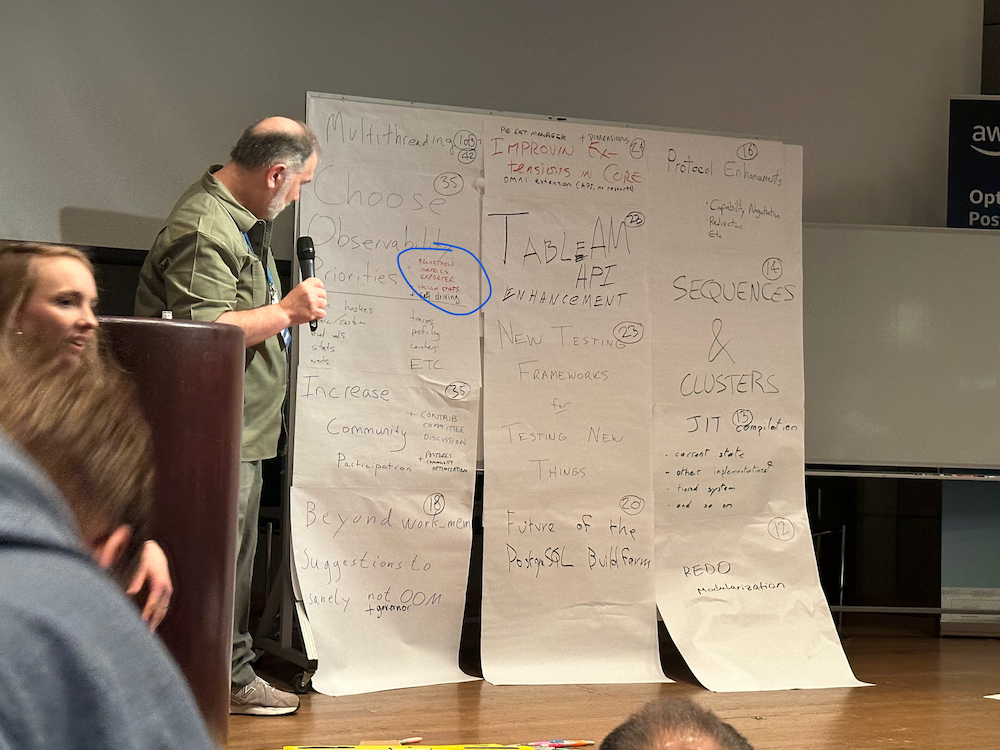

- PGCon.Dev 2024, The conf that shutdown PG for a week

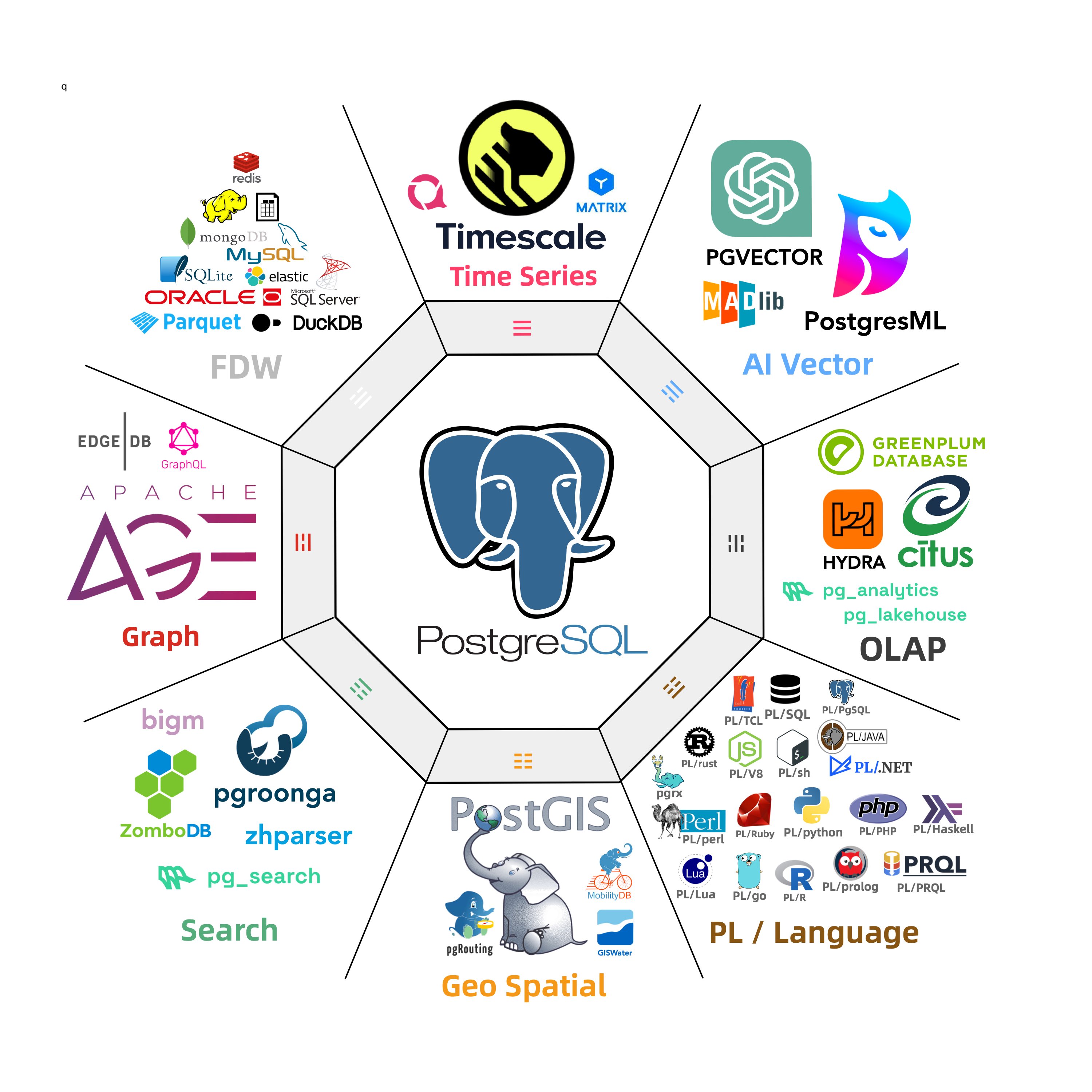

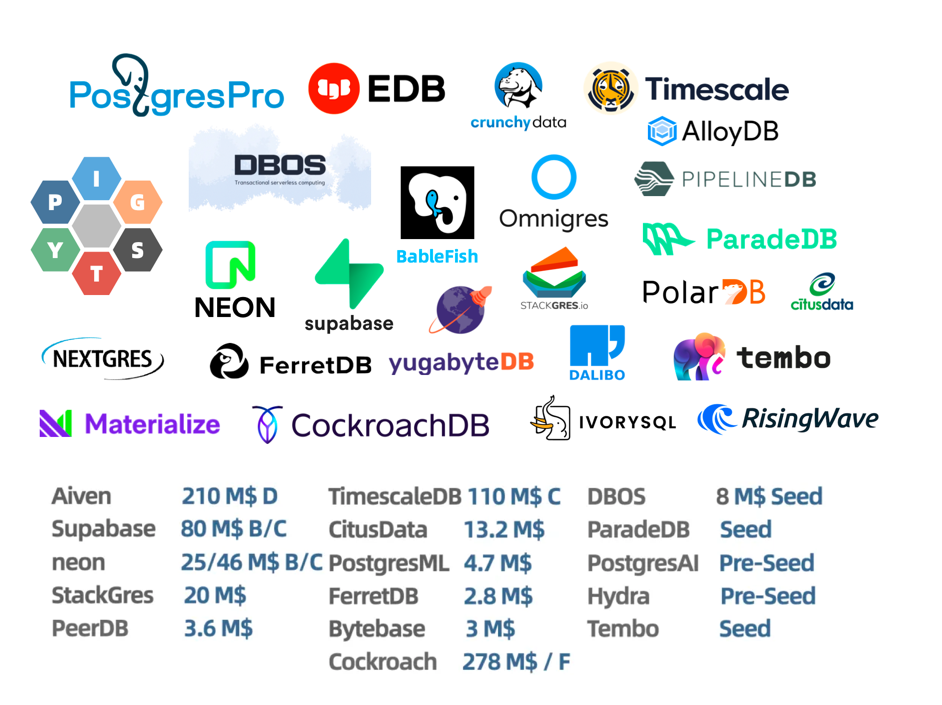

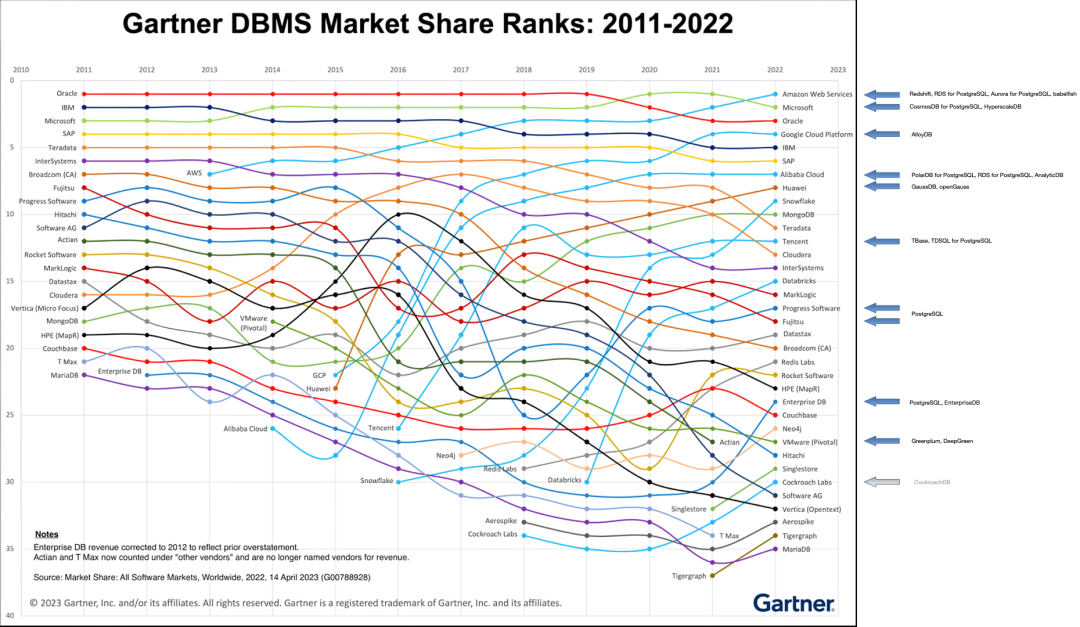

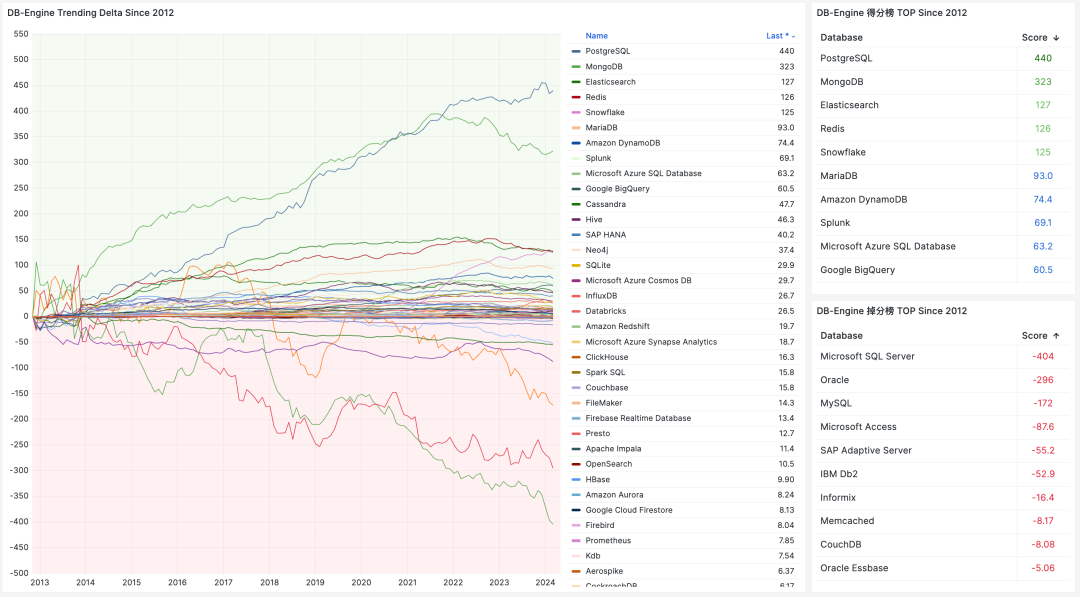

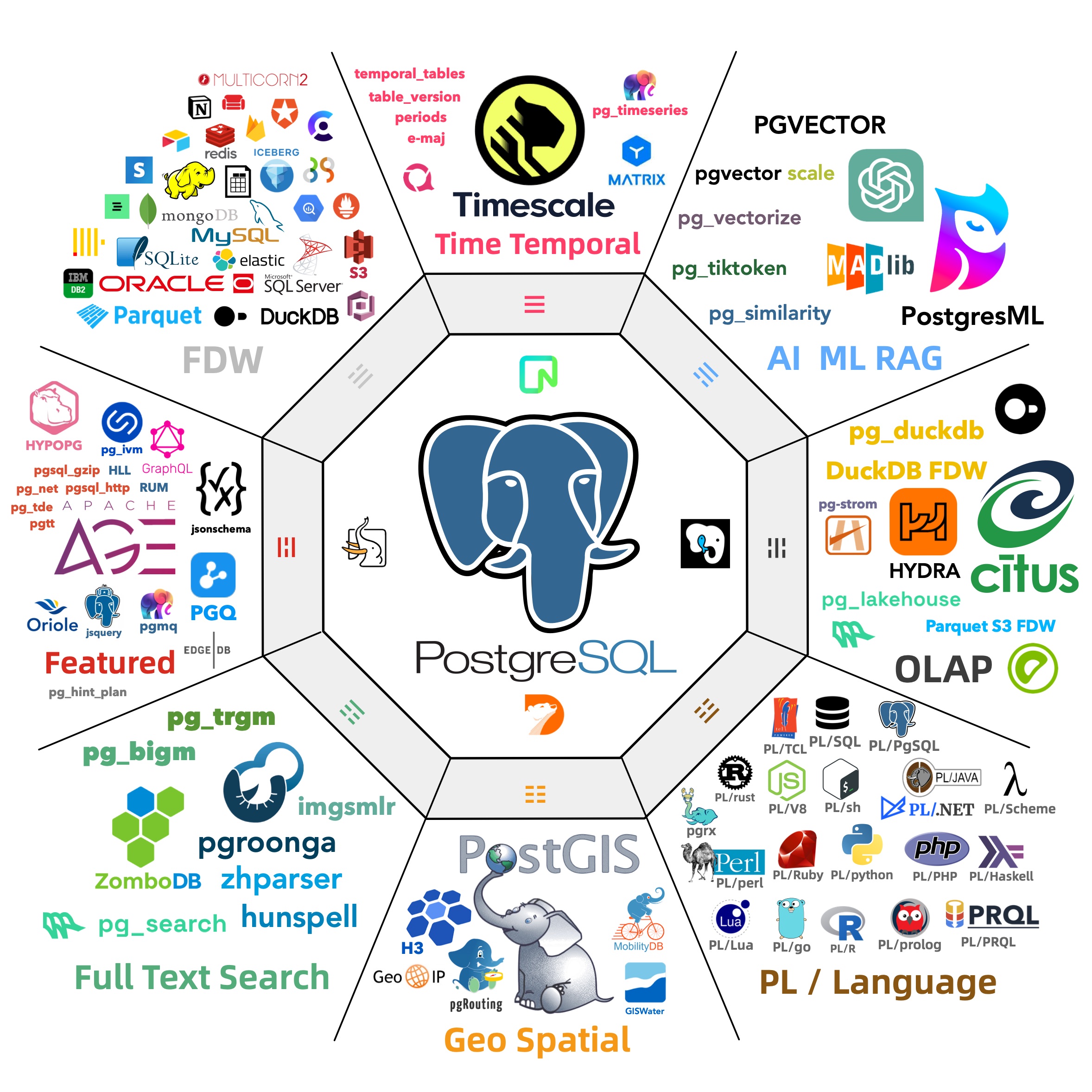

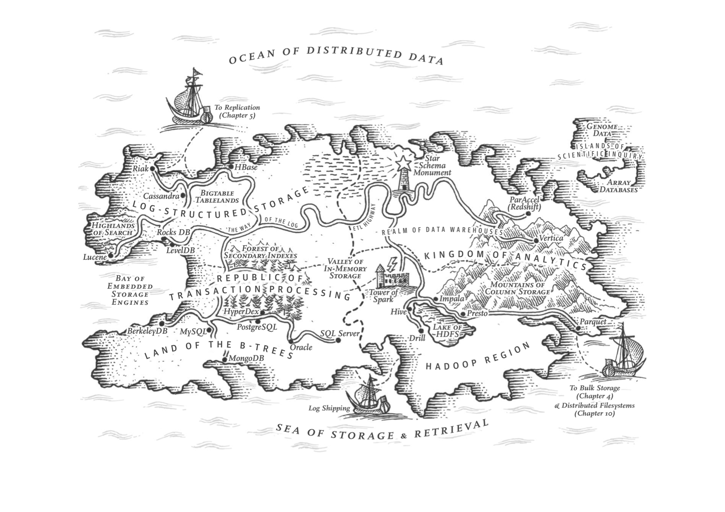

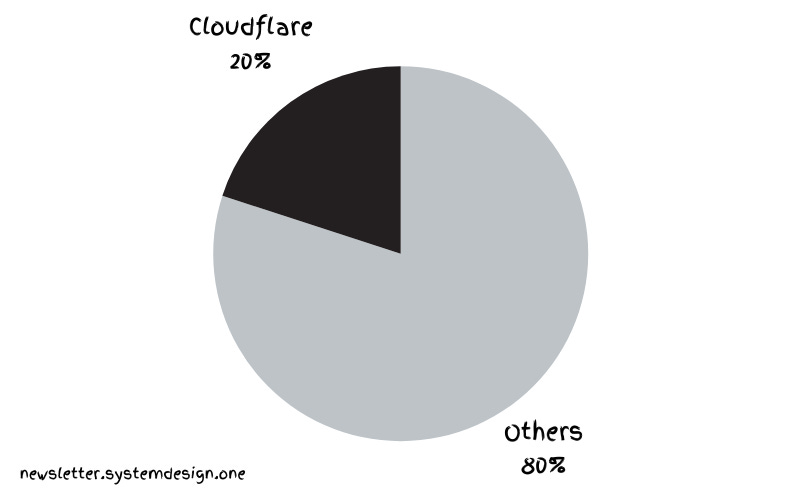

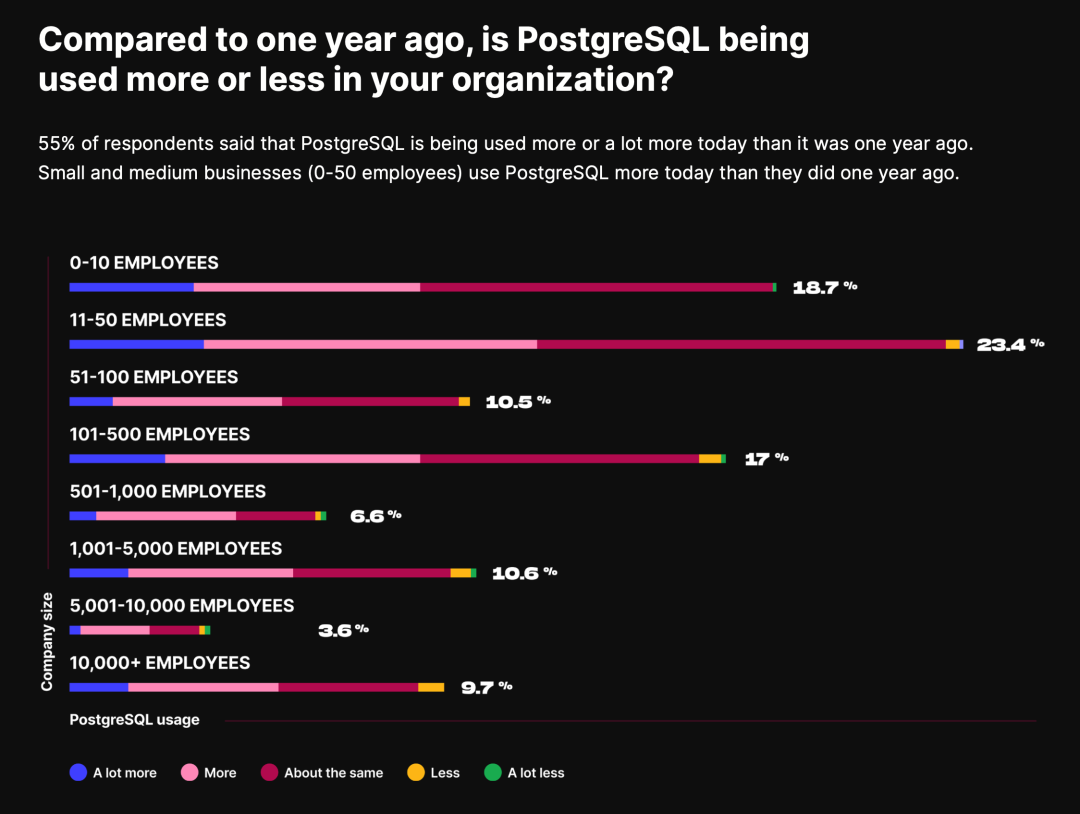

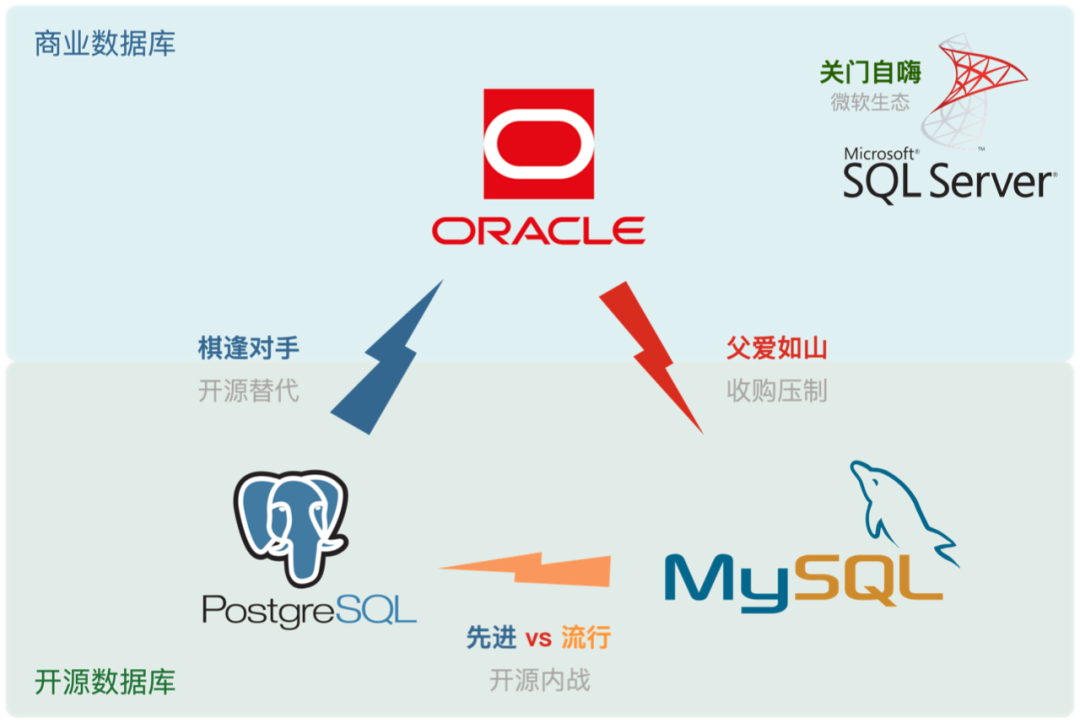

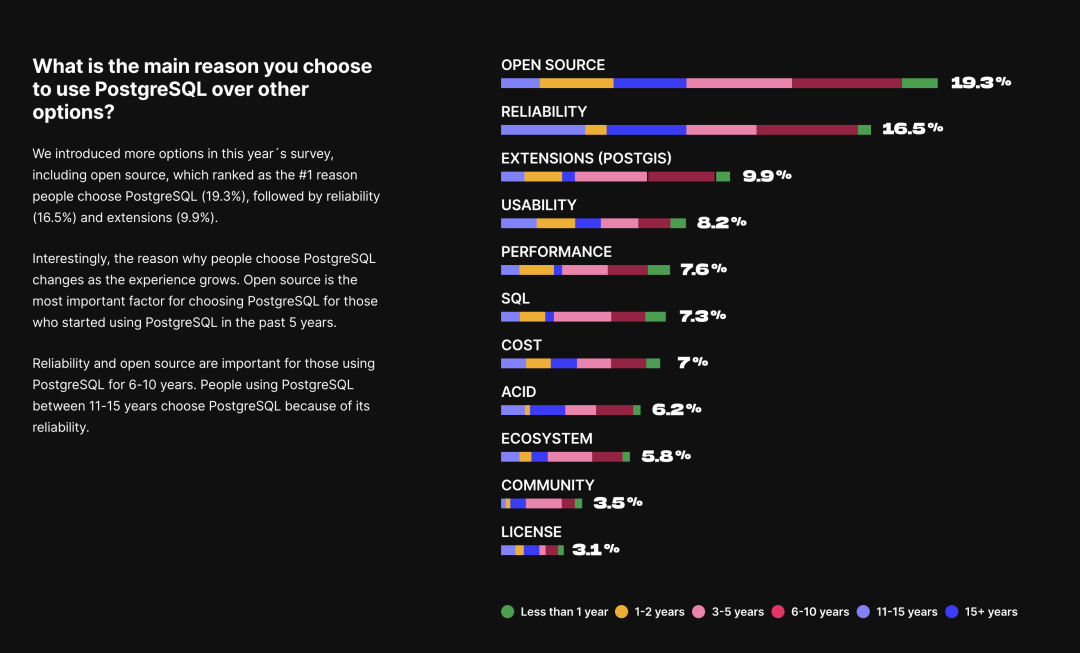

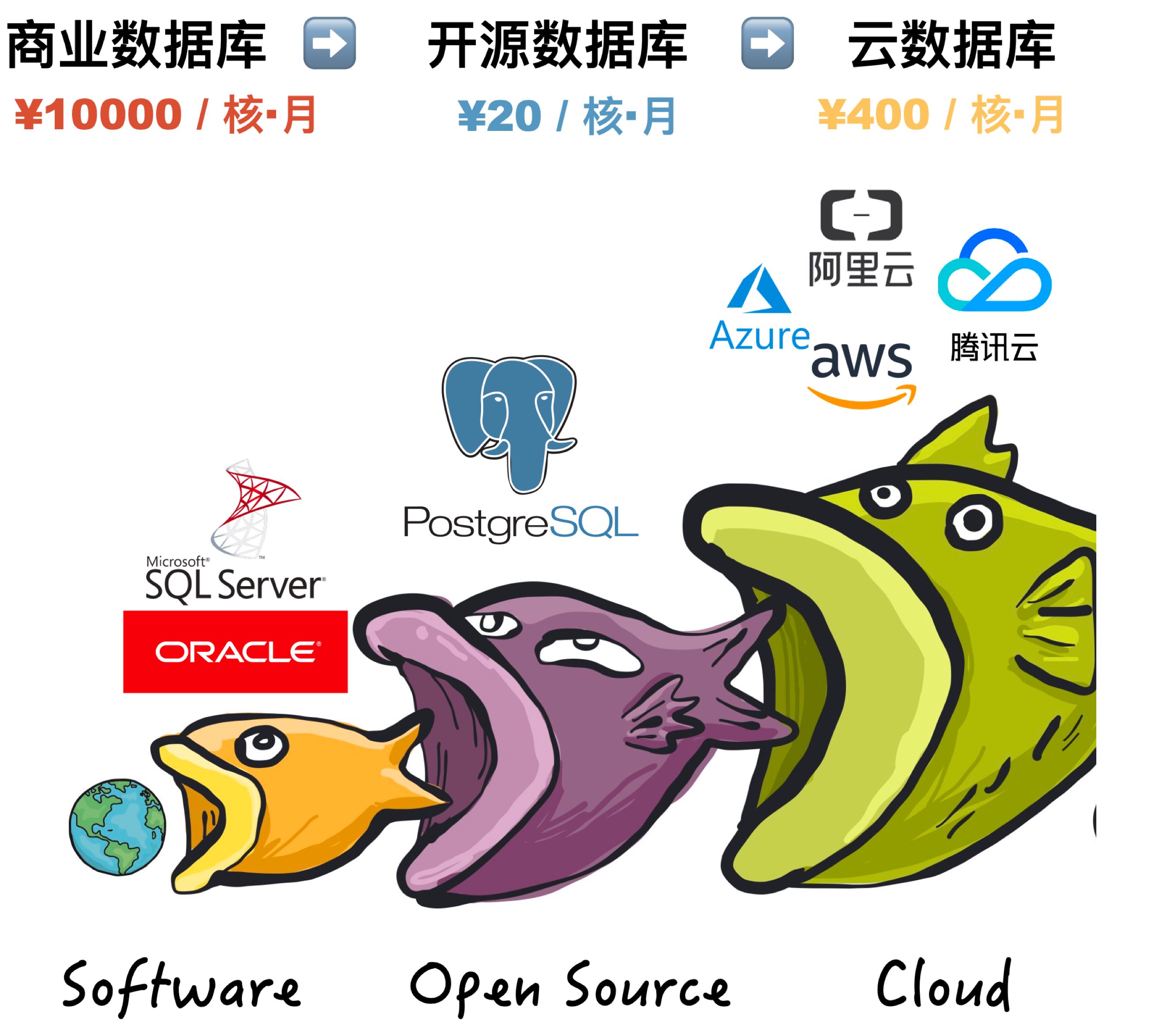

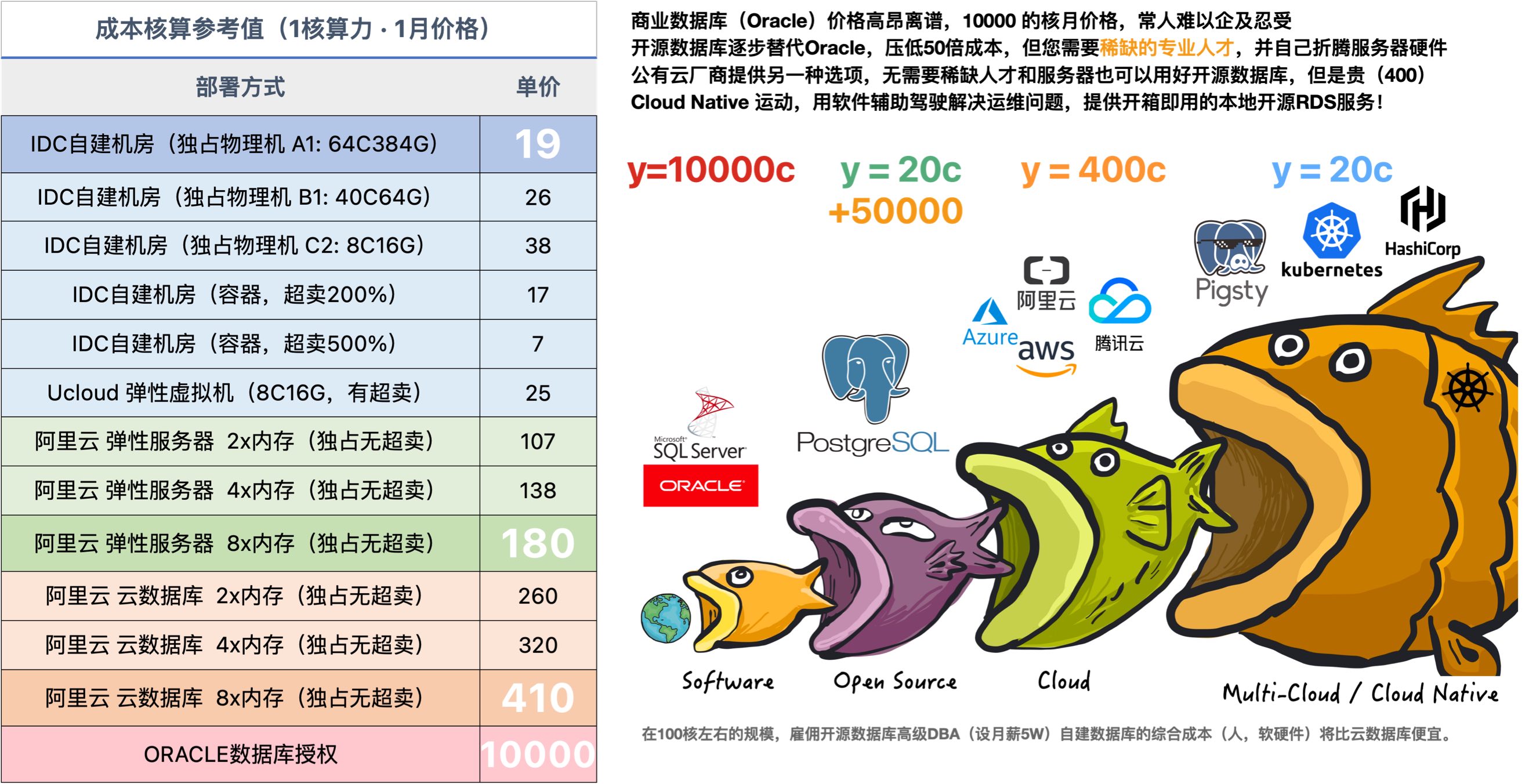

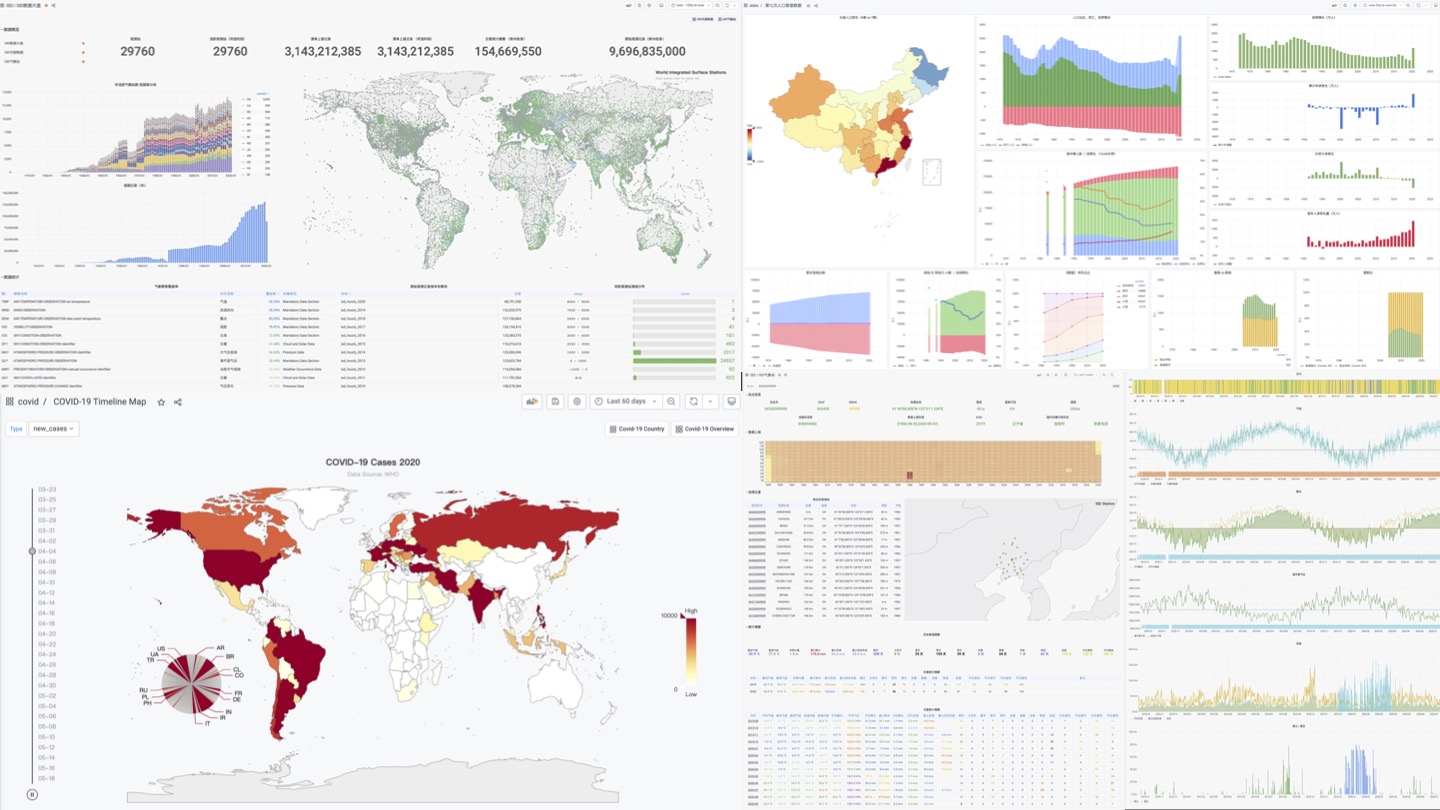

- Postgres is eating the database world

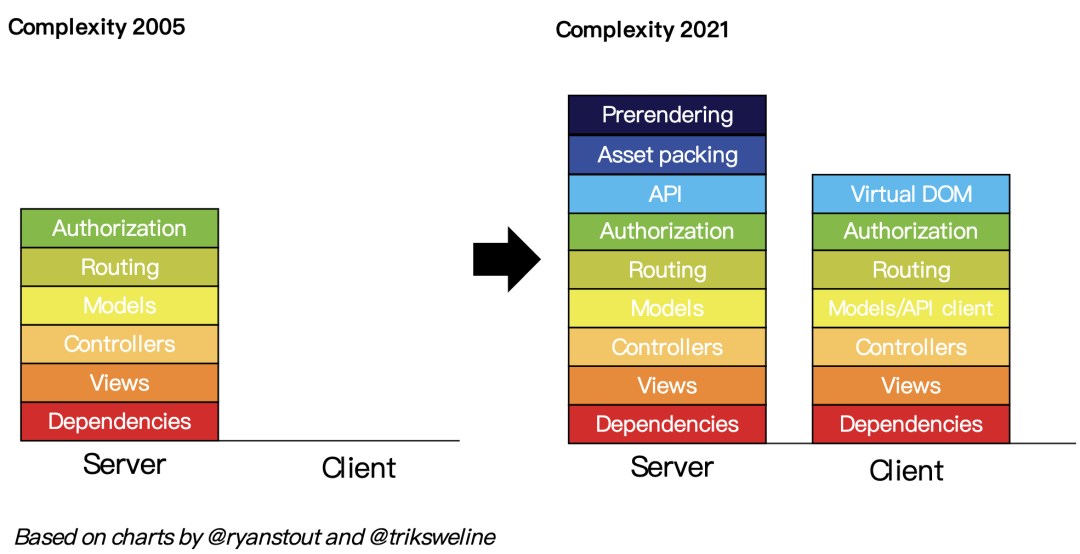

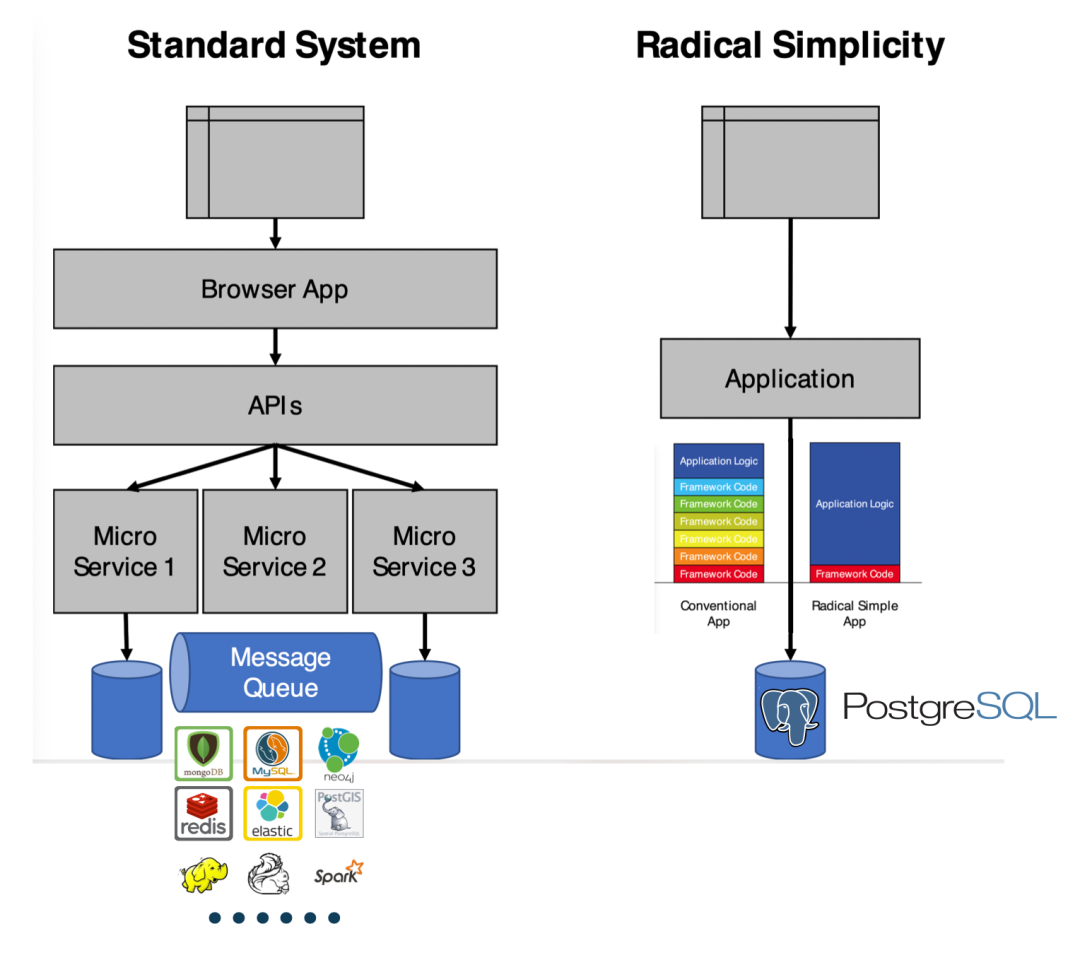

- Technical Minimalism: Just Use PostgreSQL for Everything

- ParadeDB: ElasticSearch Alternative in PG Ecosystem

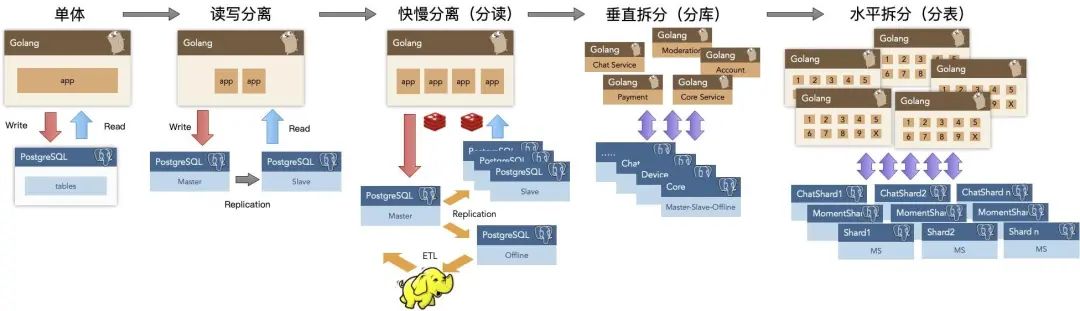

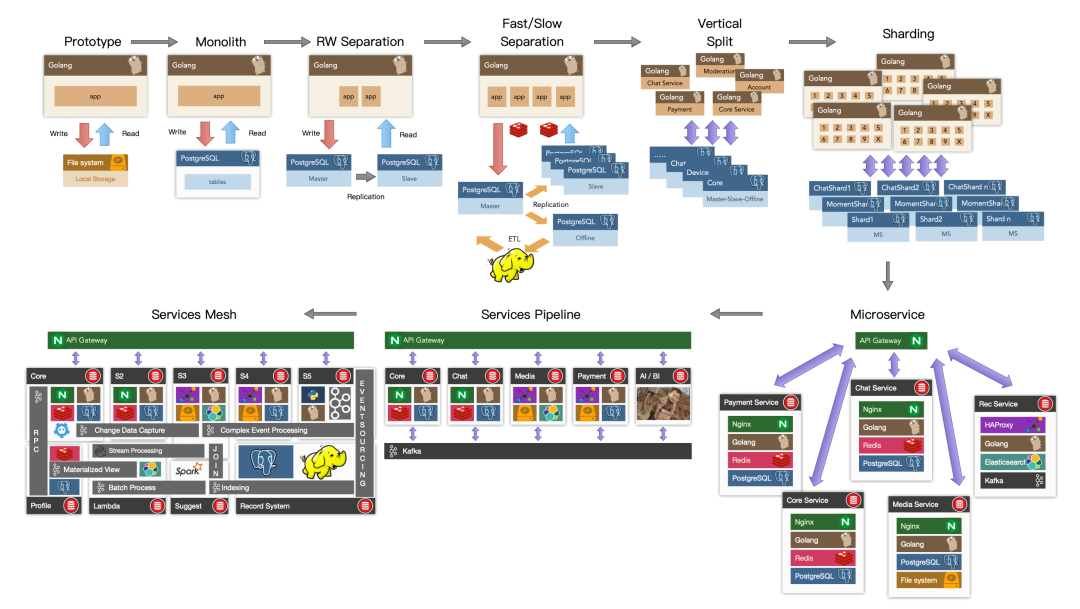

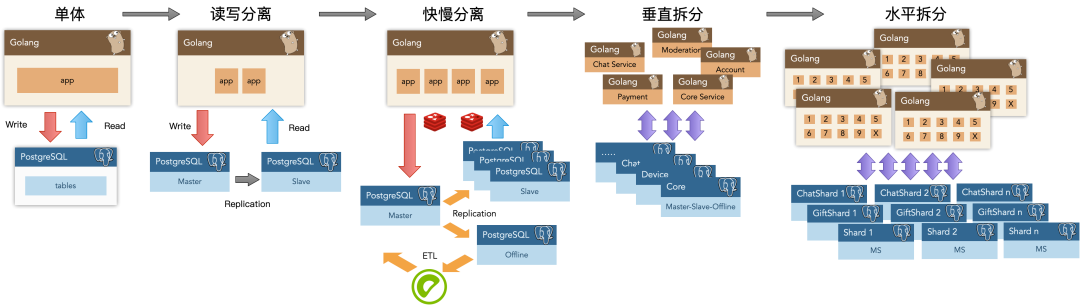

- The Astonishing Scalability of PostgreSQL

- PostgreSQL Crowned Database of the Year 2024!

- PostgreSQL Convention 2024

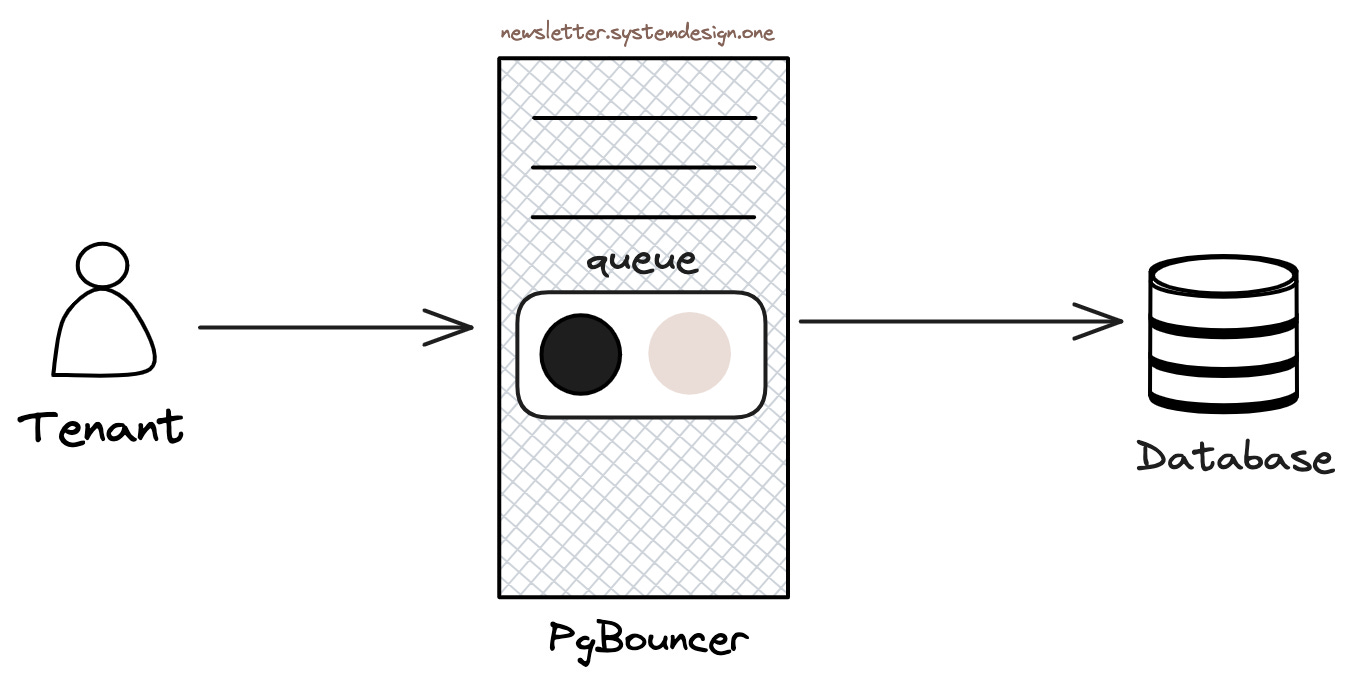

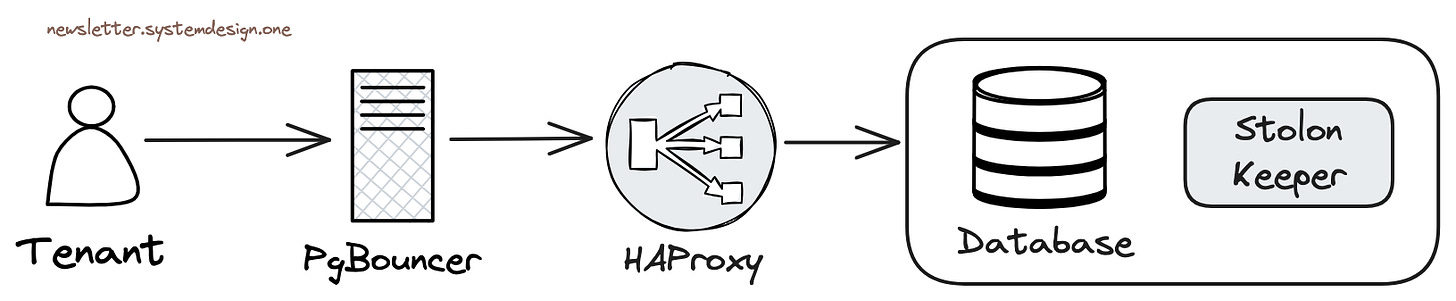

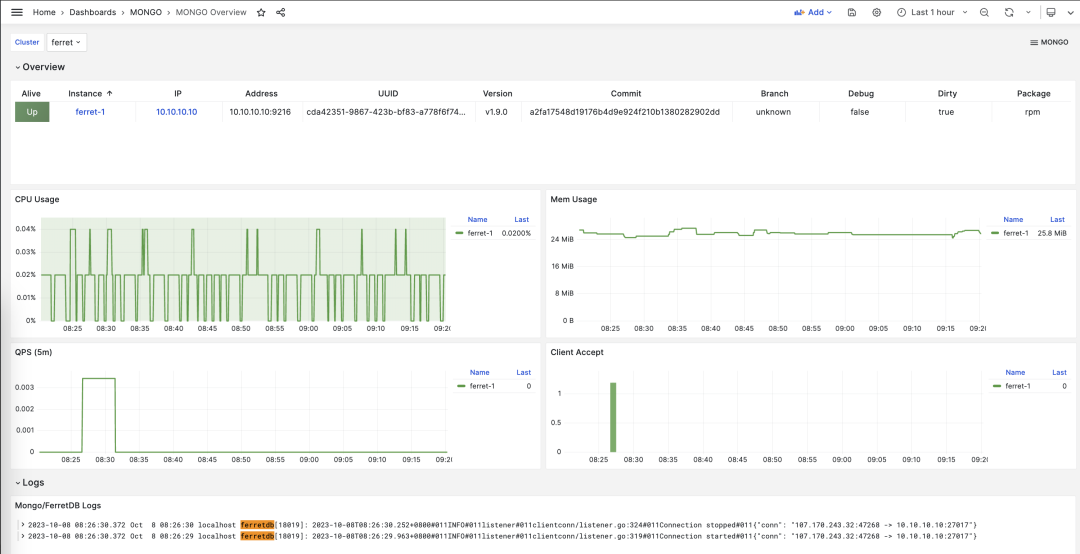

- FerretDB: When PG Masquerades as MongoDB

- PostgreSQL, The most successful database

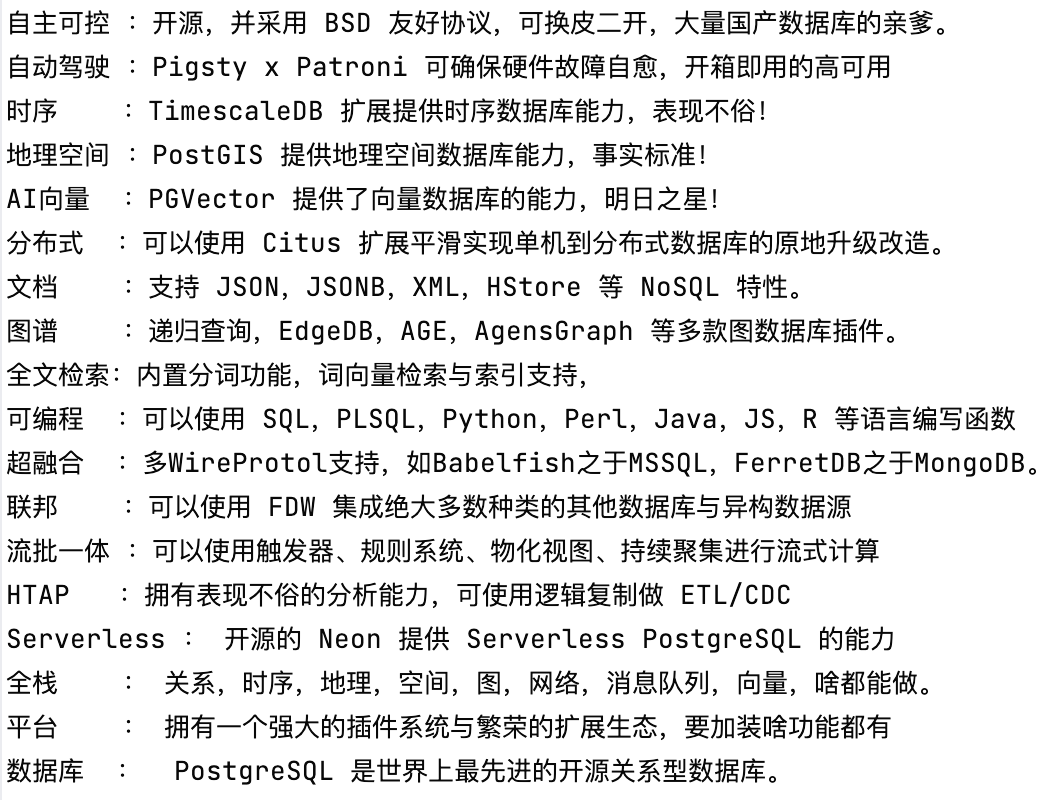

- How Powerful Is PostgreSQL?

- Why Is PostgreSQL the Most Successful Database?

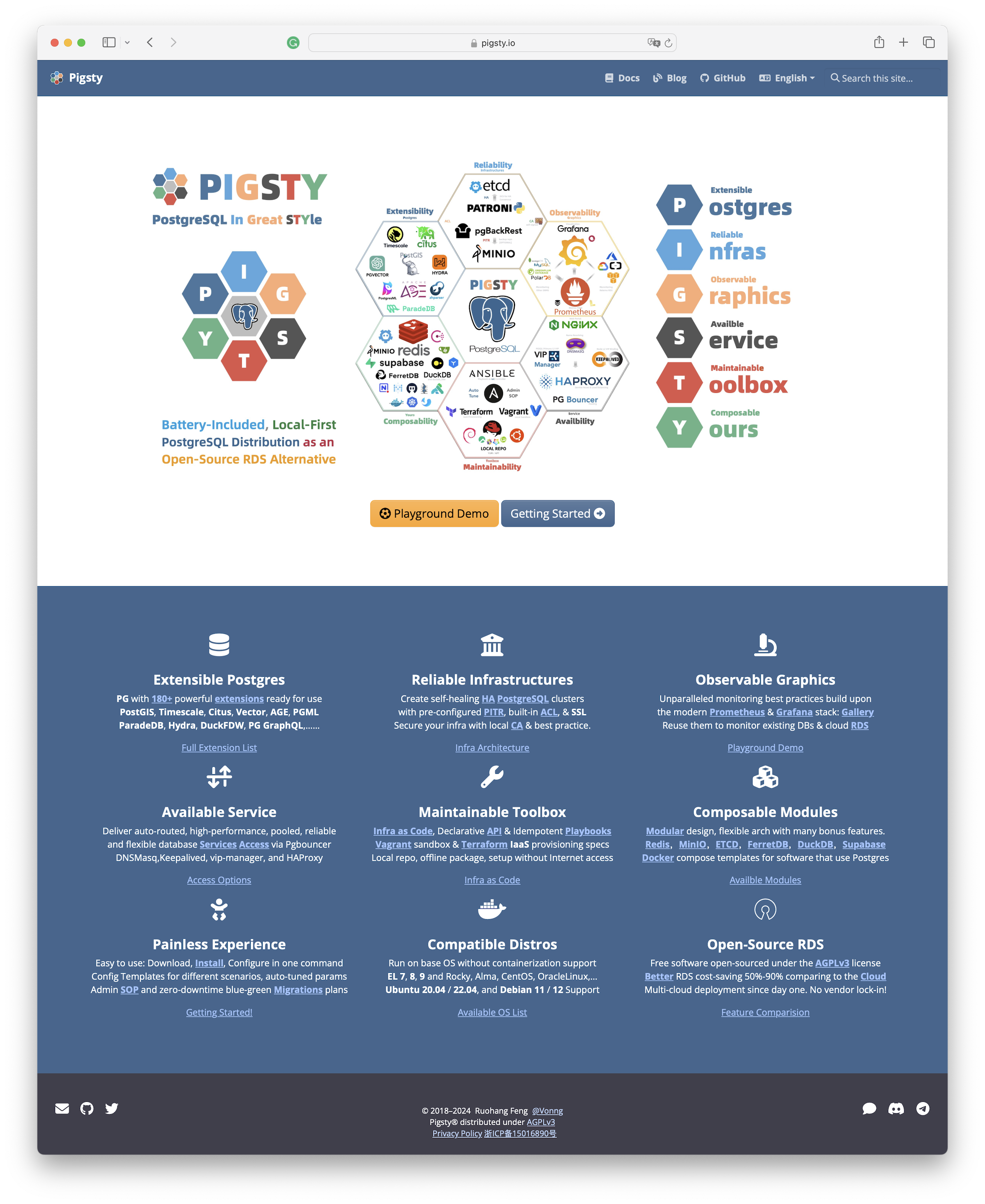

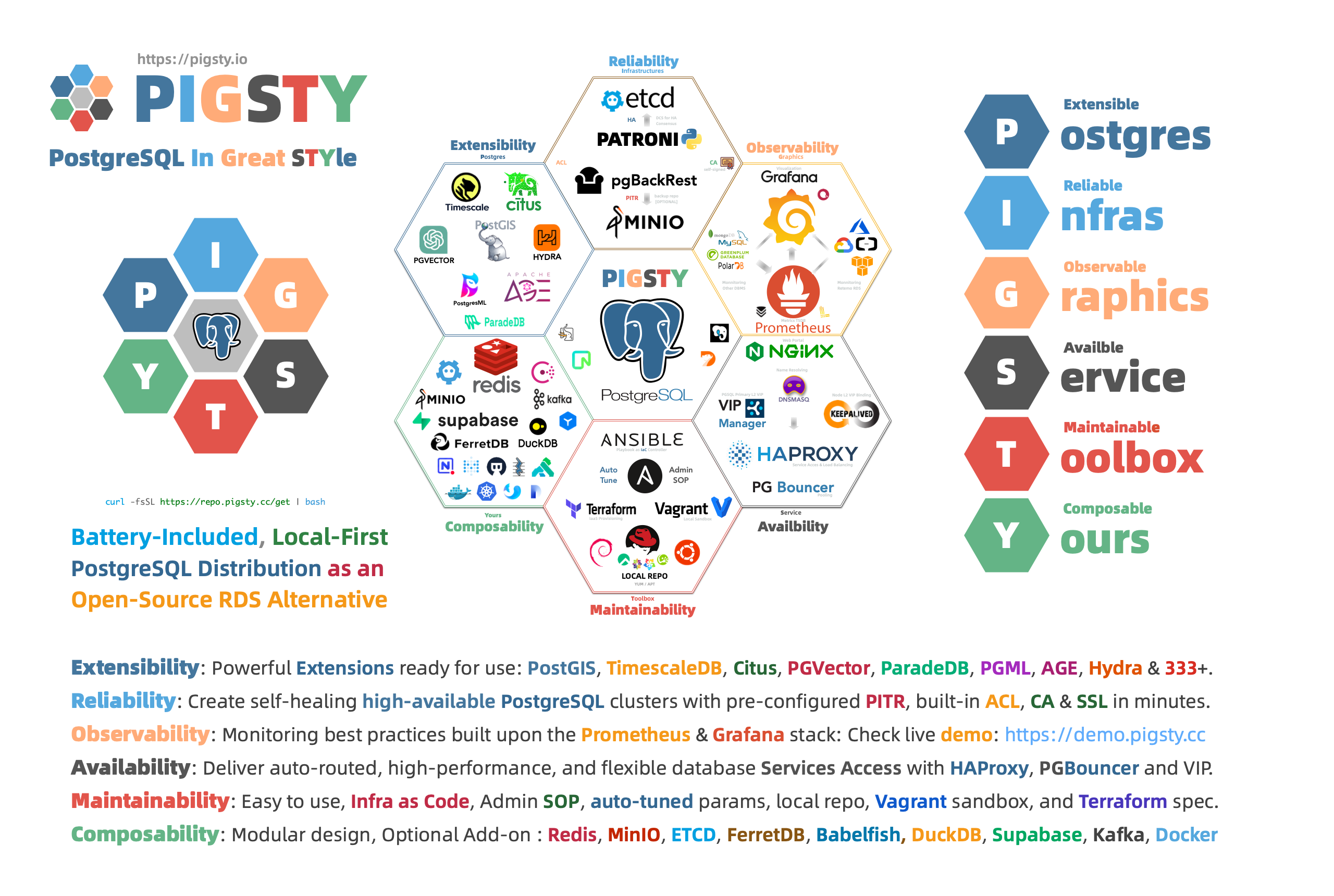

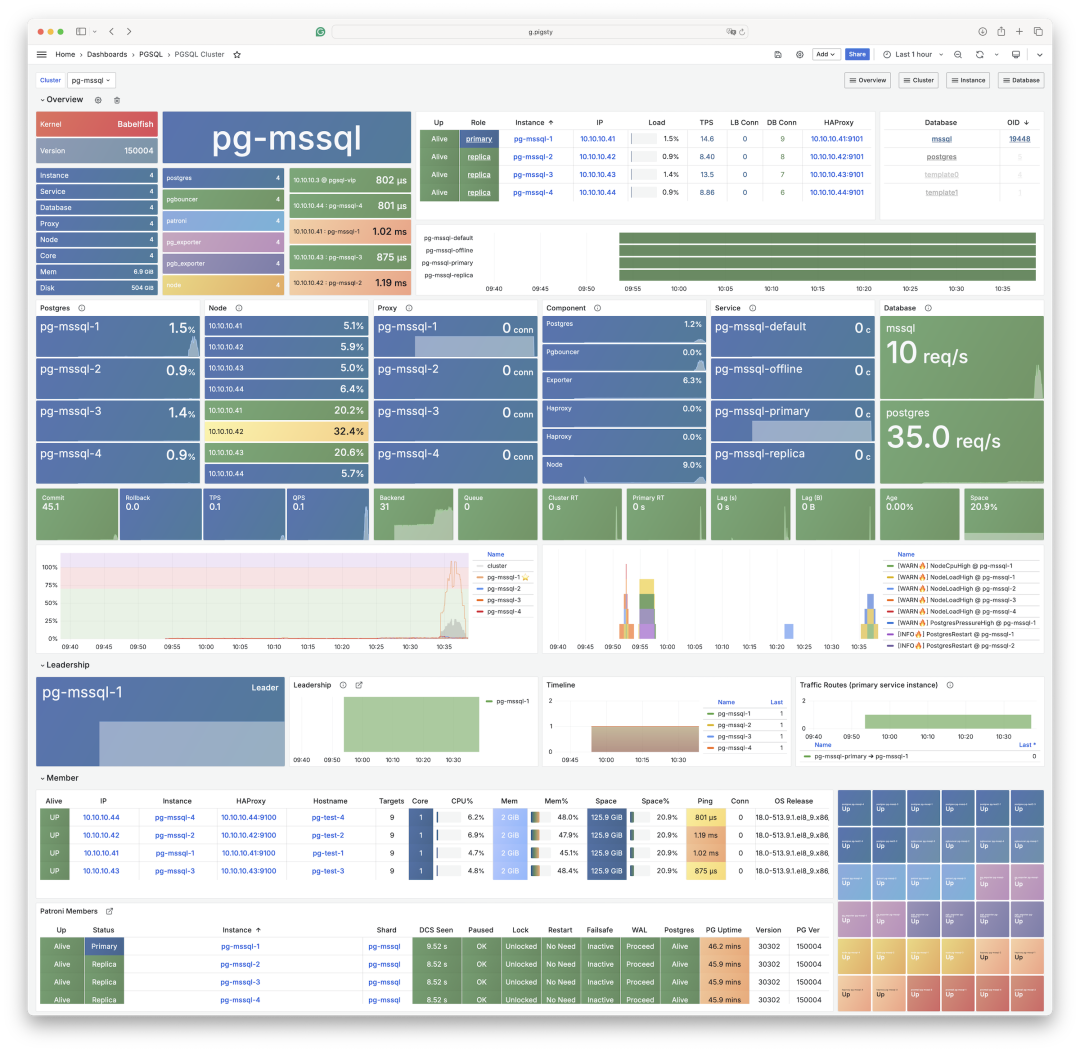

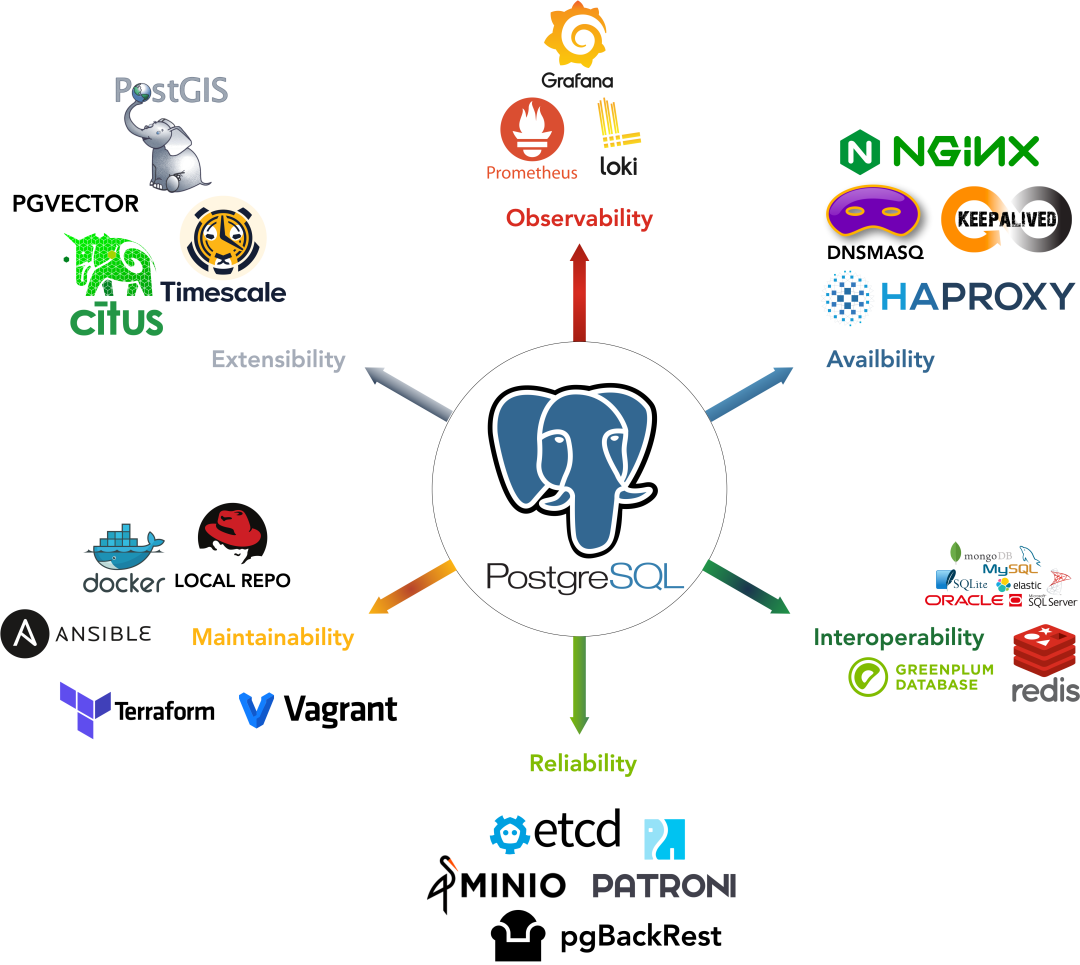

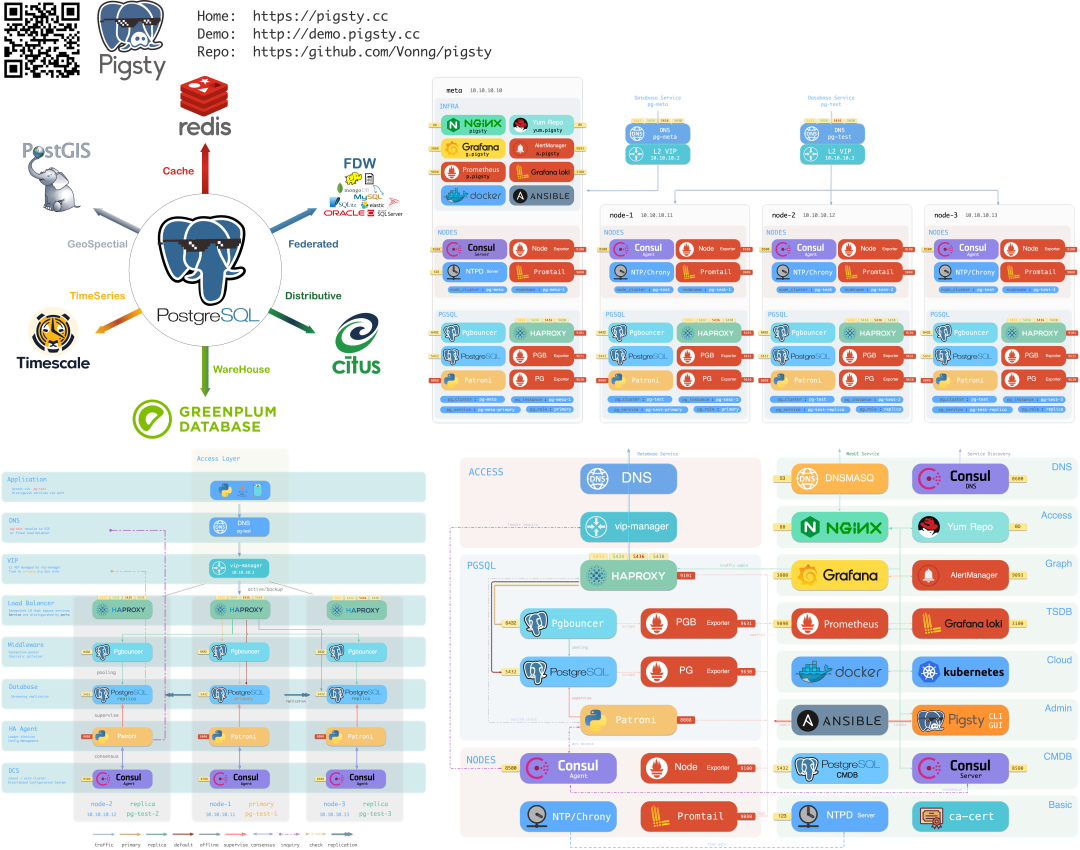

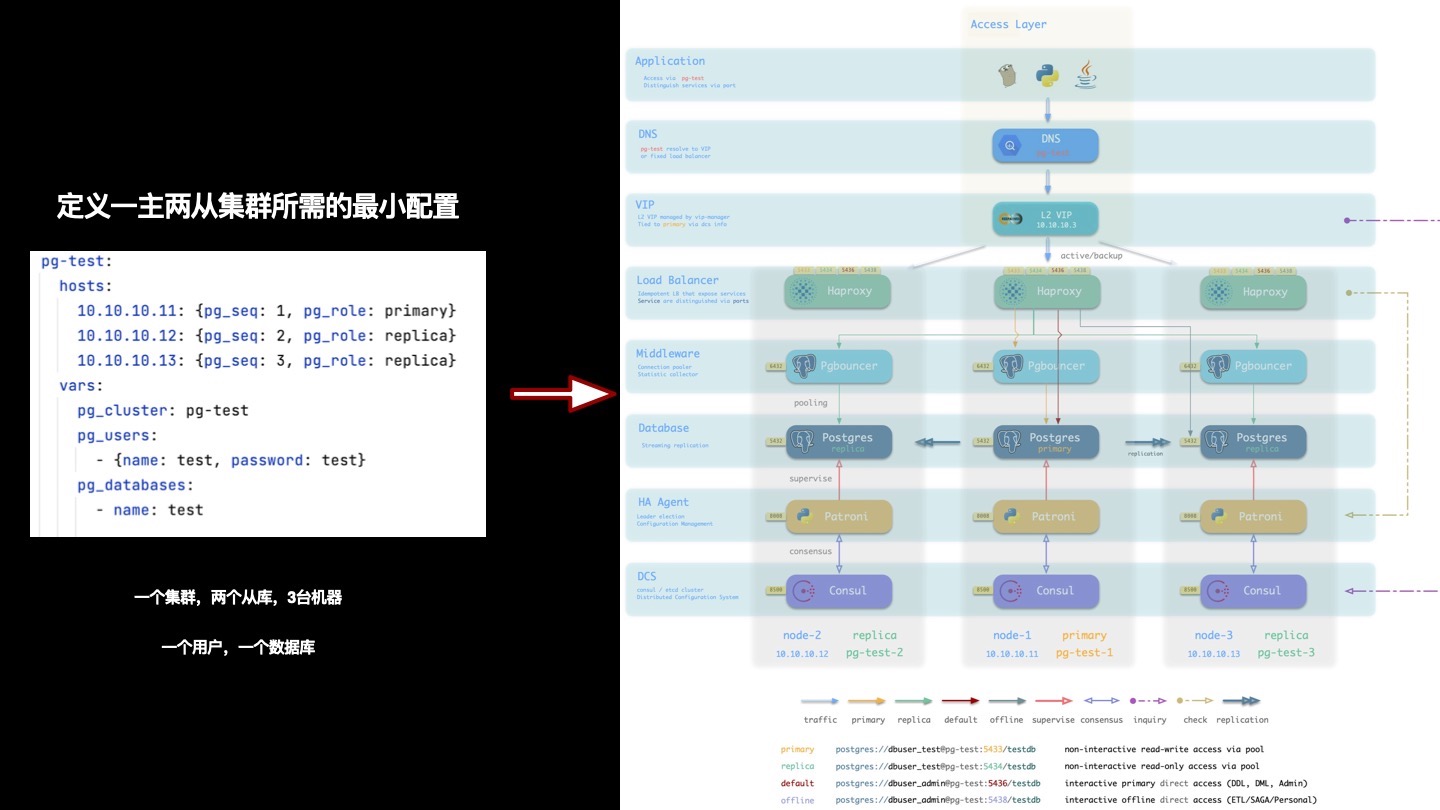

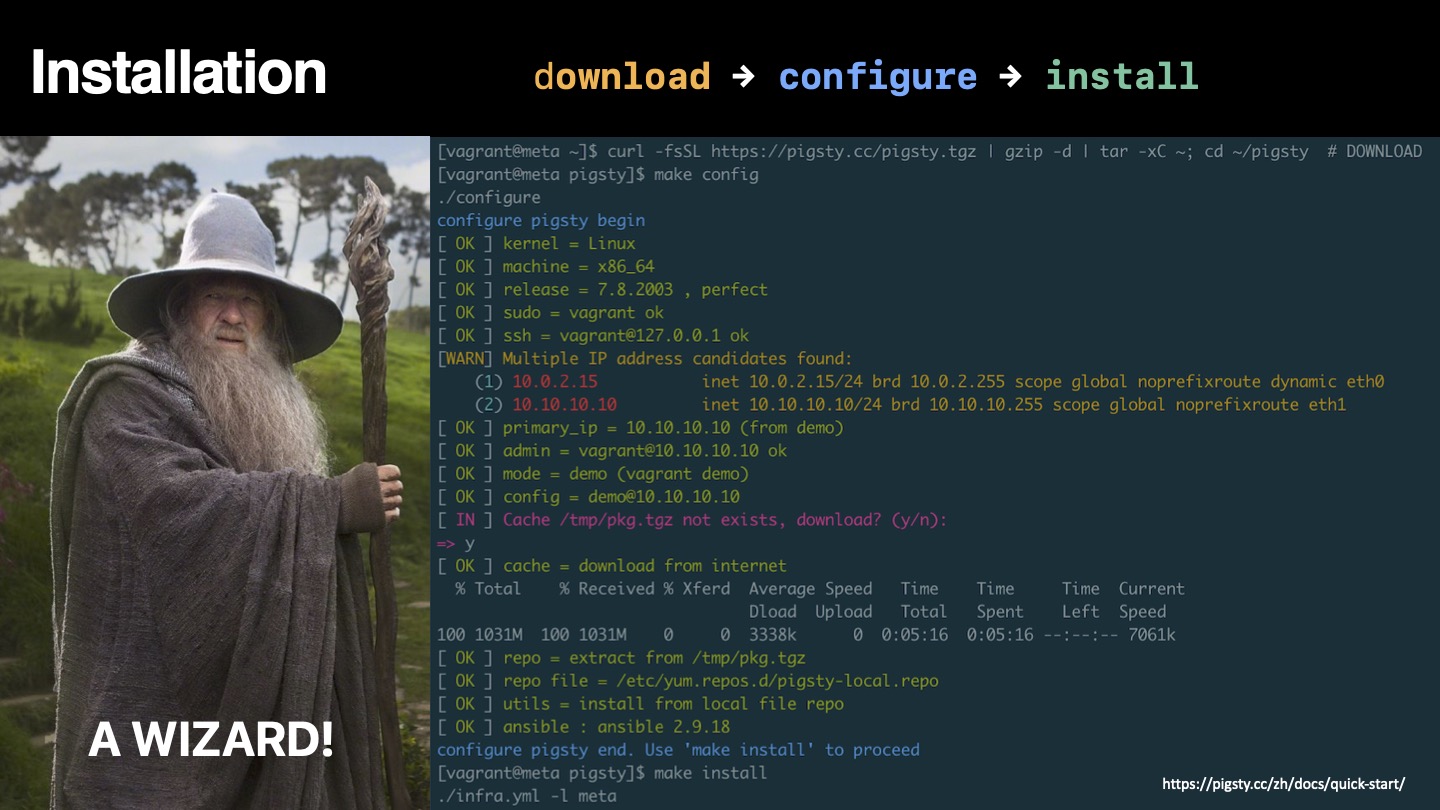

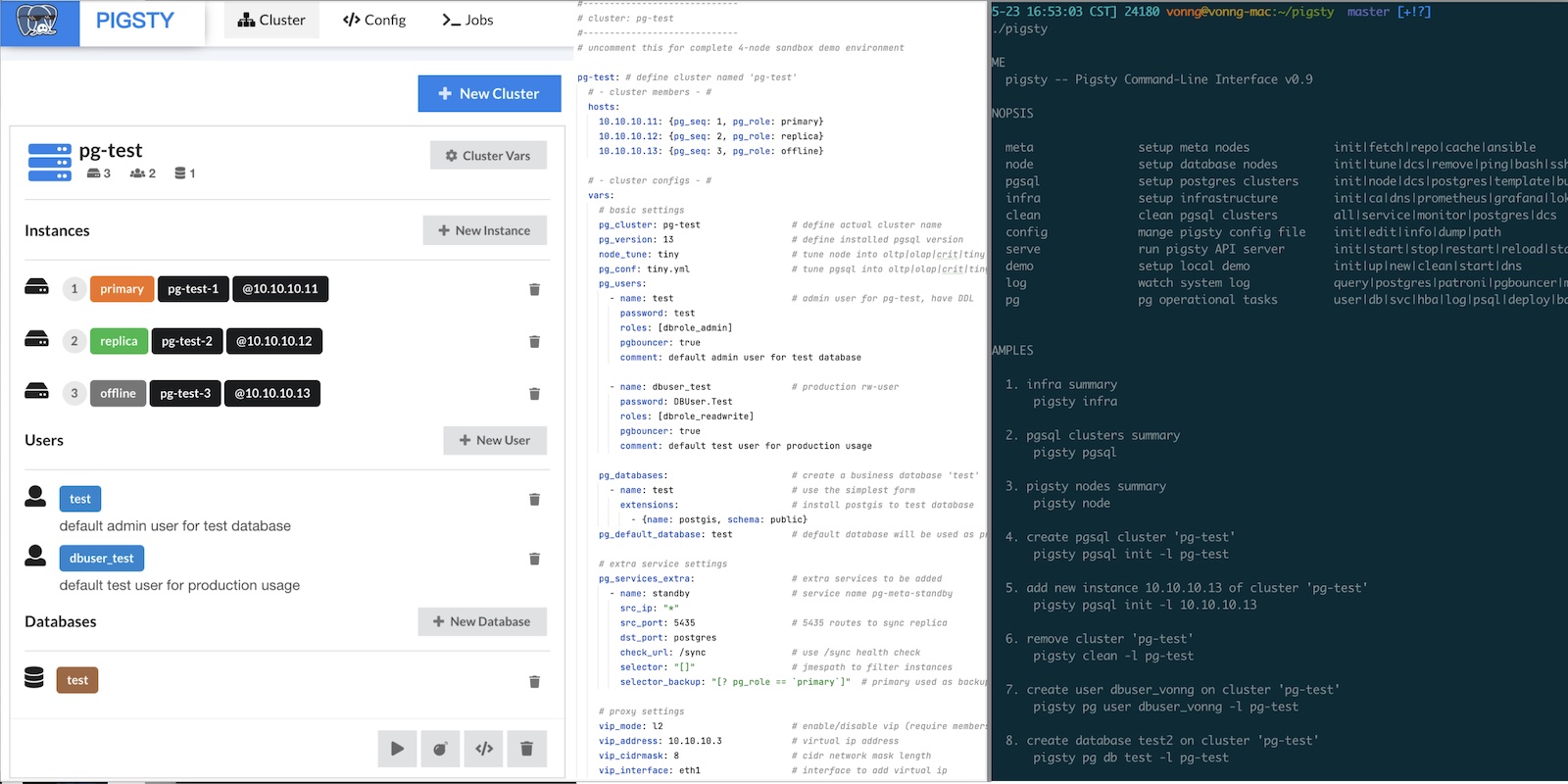

- Pigsty: The Production-Ready PostgreSQL Distribution

- Why PostgreSQL Has a Bright Future

- What Makes PostgreSQL So Awesome?

pg_exporter v1.0.0 Released – Next-Level PostgreSQL Observability

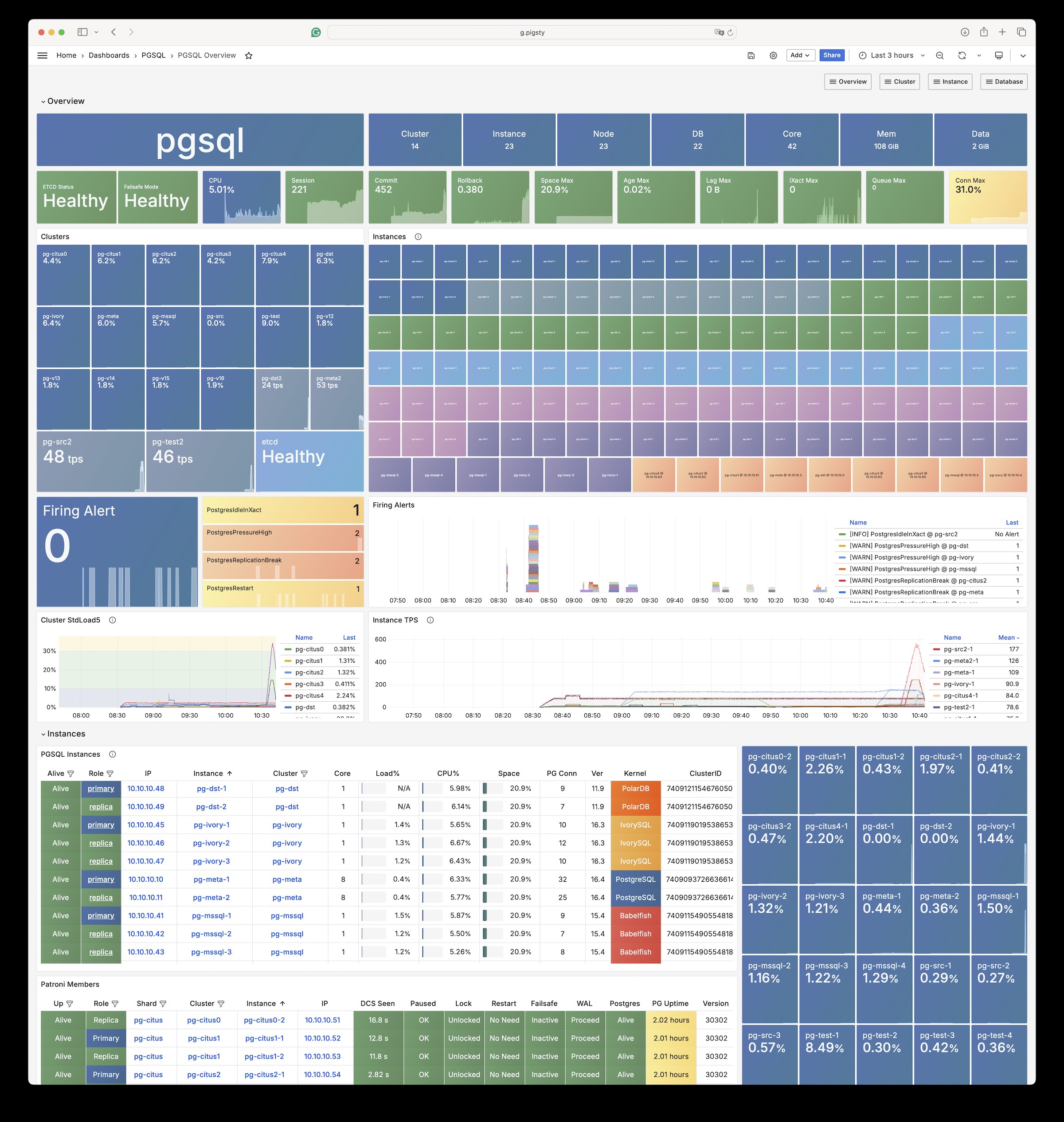

We’re delighted to announce pg_exporter v1.0.0, an advanced open-source Prometheus exporter that takes PostgreSQL observability to the next level.

Built for DBAs and developers who need deep insight, pg_exporter exposes 600 + metrics—roughly 3 K – 20 K time series per instance — covering core PostgreSQL internals, popular extensions such as TimescaleDB, Citus, pg_stat_statements, pg_wait_sampling, and even pgBouncer, all through a single, fully customizable exporter.

Unlike other exporters, pg_exporter values customizability: every metric lives in a YAML definition, so you can add, modify, or extend metrics without recompiling. The configuration allows fine-grained control over collection logic — PostgreSQL version branching, caching, timeouts, pre-condition queries, a health-check API, and live reload & replanning are all built in.

Battle-tested for more than six years in production clusters exceeding 25K+ CPU cores, pg_exporter also powers the Pigsty observability stack — see it in action in the live demo.

Version 1.0.0 brings a host of new features, including early support for PostgreSQL 18 — ready even before PG 18 beta release. Explore 50 + pre-defined collectors, or create your own (including app-specific metrics via SQL) simply by adding new configs.

Enjoy next-level insight into your PostgreSQL ecosystem with pg_exporter v1.0.0!

Features

- Highly Customizable: Define almost all metrics through declarative YAML configs

- Full Coverage: Monitor both PostgreSQL (10-18+) and pgBouncer (1.8-1.24+) in single exporter

- Fine-grained Control: Configure timeout, caching, skip conditions, and fatality per collector

- Dynamic Planning: Define multiple query branches based on different conditions

- Self-monitoring: Rich metrics about pg_exporter itself for complete observability

- Production-Ready: Battle-tested in real-world environments across 12K+ cores for 6+ years

- Auto-discovery: Automatically discover and monitor multiple databases within an instance

- Health Check APIs: Comprehensive HTTP endpoints for service health and traffic routing

- Extension Support:

timescaledb,citus,pg_stat_statements,pg_wait_sampling,…

OrioleDB is Here! 4x Performance, Zero Bloat, Decoupled Storage

OrioleDB - while the name might make you think of cookies, it’s actually named after the songbird. But whether you call it Cookie DB or Bird DB doesn’t matter - what matters is that this PG storage engine extension + kernel fork is genuinely fascinating, and it’s about to hit prime time.

As Zheap’s successor, I’ve been watching OrioleDB for quite a while. It has three major selling points: performance, operability, and cloud-native capabilities. Let me give you a quick tour of this PG kernel newcomer, along with some recent work I’ve done to help you get it up and running.

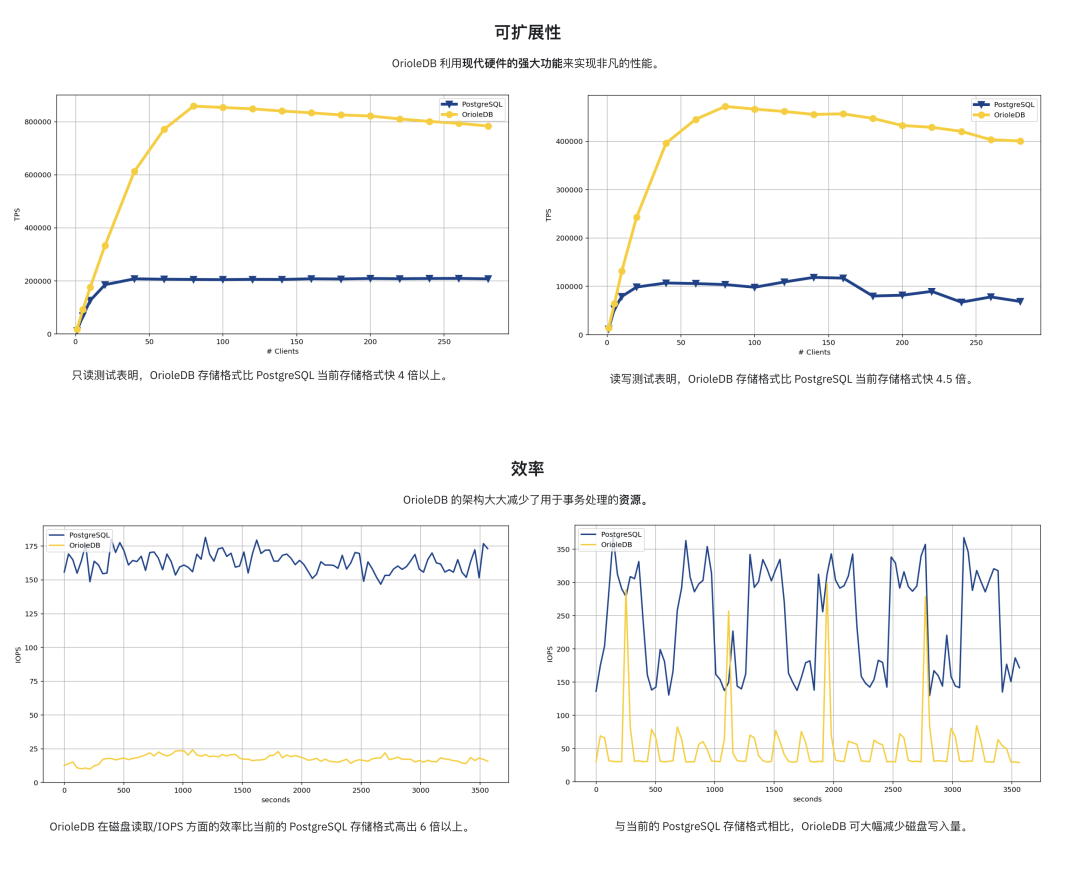

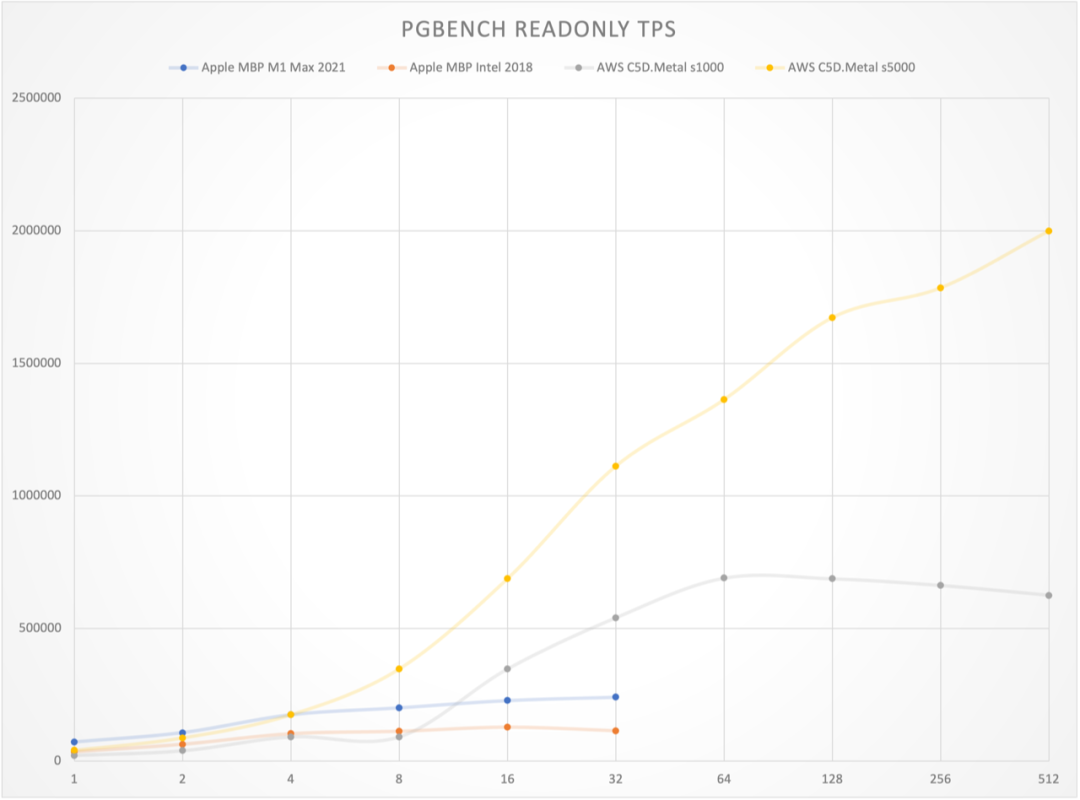

Extreme Performance: 4x Throughput

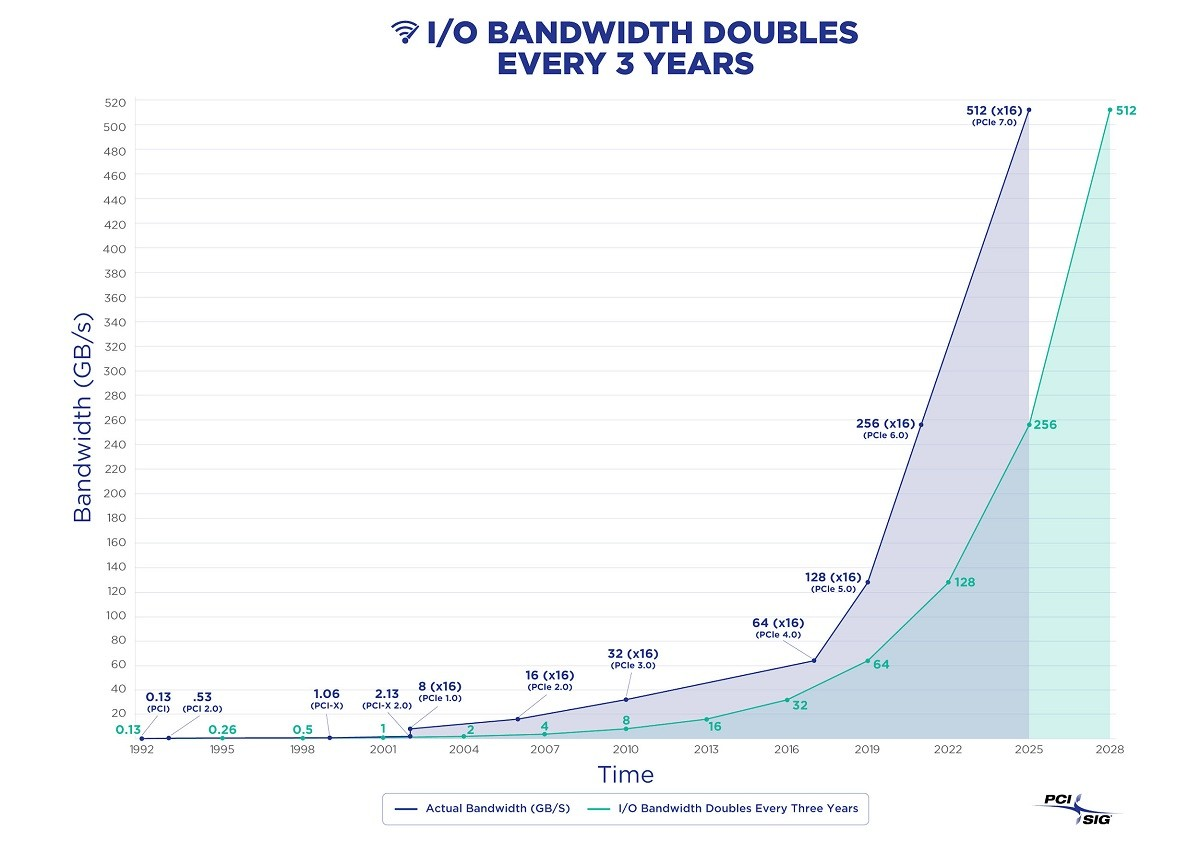

While hardware performance is overkill for most OLTP databases these days, hitting the single-node write throughput ceiling isn’t exactly rare - it’s usually what drives people to shard their databases.

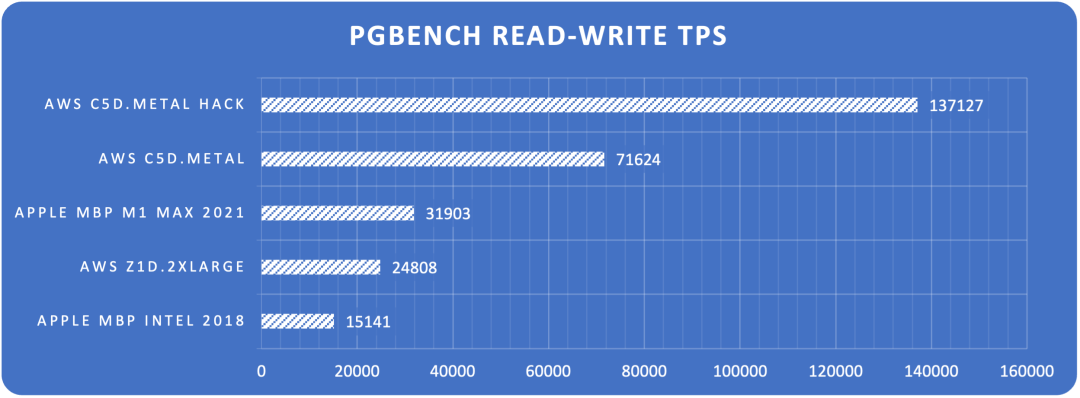

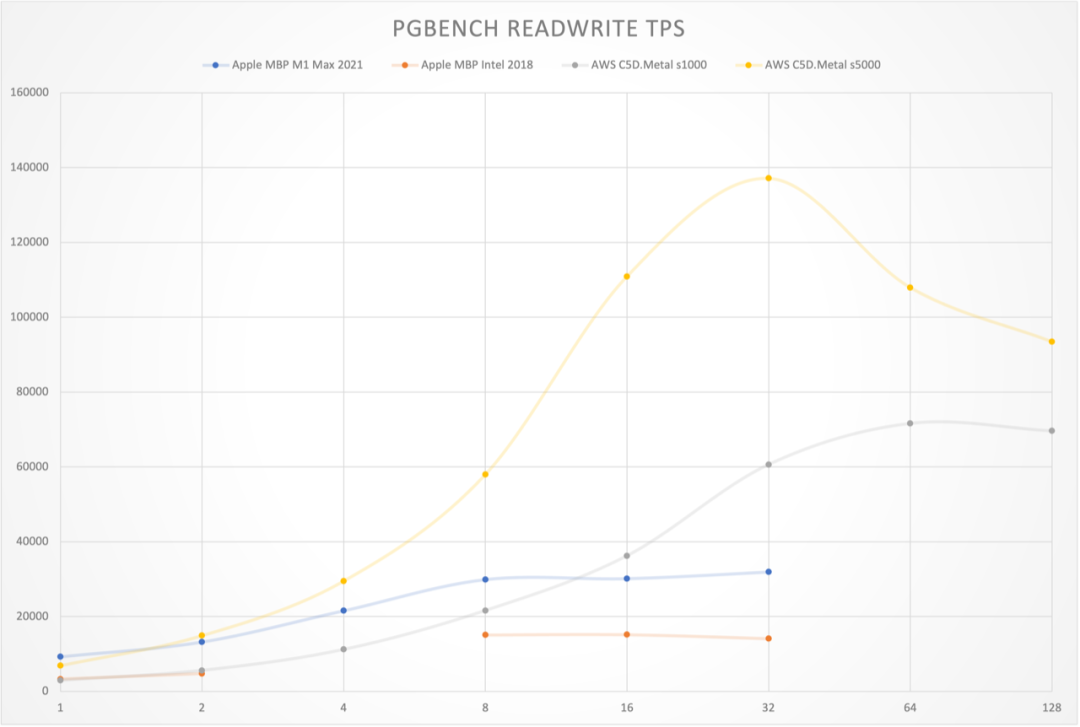

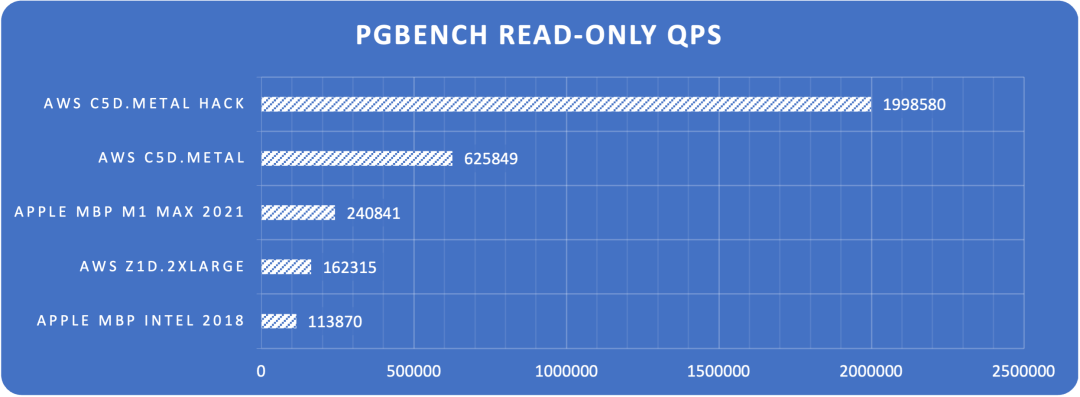

OrioleDB aims to solve this. According to their homepage, they achieve 4x PostgreSQL’s read/write throughput - a pretty wild number. A 40% performance boost wouldn’t justify adopting a new storage engine, but 400%? Now that’s an interesting proposition.

Plus, OrioleDB claims to significantly reduce resource consumption in OLTP scenarios, notably lowering disk IOPS usage.

The secret sauce includes several optimizations over PG heap tables: ditching FS Cache, direct memory-to-storage page linking, lock-free memory page access, MVCC via UNDO logs/rollback segments instead of PG’s REDO, and row-level WAL that’s easier to parallelize.

Haven’t benchmarked it myself yet, but it’s tempting. Might grab a server and give it a spin soon.

Zero Headaches: Simplified Ops

PostgreSQL’s most notorious pain points are XID Wraparound and table bloat - both stemming from its MVCC design.

PostgreSQL’s default storage engine was designed with “infinite time travel” in mind, using an append-only MVCC approach - DELETEs are marked-for-deletion, and UPDATEs are delete-mark-plus-new-version.

While this design has perks - non-blocking reads/writes, instant rollbacks regardless of transaction size, and minimal replication lag - it’s given PostgreSQL users their fair share of headaches. Even with modern hardware and automatic vacuum, a high-standard PostgreSQL setup still needs to keep an eye on bloat and garbage collection.

OrioleDB tackles this with a new storage engine - think Oracle/MySQL-style approach, inheriting both their pros and cons. Using new MVCC practices, OrioleDB tables say goodbye to bloat and XID wraparound concerns.

Of course, there’s no free lunch - you inherit the downsides too: large transaction issues, slower rollbacks, and analytical performance trade-offs. But it excels at what it aims for: maximum OLTP CRUD performance.

Most importantly, it’s a PG extension - an optional storage engine that plays nice with PG’s native heap tables. You can mix and match based on your needs, letting your extreme OLTP tables shine where it counts.

-- Enable OrioleDB extension (Pigsty has it ready)

CREATE EXTENSION orioledb;

CREATE TABLE blog_post

(

id int8 NOT NULL,

title text NOT NULL,

body text NOT NULL,

PRIMARY KEY(id)

) USING orioledb; -- Use OrioleDB storage engine

Using OrioleDB is dead simple - just add the

USINGkeyword when creating tables.

Currently, OrioleDB is a storage engine extension requiring a patched PG kernel, as some necessary storage engine APIs haven’t landed in PG core yet. If all goes well, PostgreSQL 18 will include these patches, eliminating the need for kernel modifications.

| Name | Link | Version | |

|---|---|---|---|

| ✅ | Add missing inequality searches to rbtree | Link | PostgreSQL 16 |

| ✅ | Document the ability to specify TableAM for pgbench | Link | PostgreSQL 16 |

| ✅ | Remove Tuplesortstate.copytup function | Link | PostgreSQL 16 |

| ✅ | Add new Tuplesortstate.removeabbrev function | Link | PostgreSQL 16 |

| ✅ | Put abbreviation logic into puttuple_common() | Link | PostgreSQL 16 |

| ✅ | Move memory management away from writetup() and tuplesort_put*() | Link | PostgreSQL 16 |

| ✅ | Split TuplesortPublic from Tuplesortstate | Link | PostgreSQL 16 |

| ✅ | Split tuplesortvariants.c from tuplesort.c | Link | PostgreSQL 16 |

| ✅ | Fix typo in comment for writetuple() function | Link | PostgreSQL 16 |

| ✅ | Support for custom slots in the custom executor nodes | Link | PostgreSQL 16 |

| ✉️ | Allow table AM to store complex data structures in rd_amcache | Link | PostgreSQL 18 |

| ✉️ | Allow table AM tuple_insert() method to return the different slot | Link | PostgreSQL 18 |

| ✉️ | Add TupleTableSlotOps.is_current_xact_tuple() method | Link | PostgreSQL 18 |

| ✉️ | Allow locking updated tuples in tuple_update() and tuple_delete() | Link | PostgreSQL 18 |

| ✉️ | Add EvalPlanQual delete returning isolation test | Link | PostgreSQL 18 |

| ✉️ | Generalize relation analyze in table AM interface | Link | PostgreSQL 18 |

| ✉️ | Custom reloptions for table AM | Link | PostgreSQL 18 |

| ✉️ | Let table AM insertion methods control index insertion | Link | PostgreSQL 18 |

I’ve prepared oriolepg_17 (patched PG) and orioledb_17 (extension) on EL, plus a ready-to-use config template for instant OrioleDB deployment.

Cloud-Native Storage

“Cloud-native” is an overused term that nobody quite understands. But for databases, it usually means one thing: storing data in object storage.

OrioleDB recently pivoted their slogan from “High-performance OLTP storage engine” to “Cloud-native storage engine”. I get why - Supabase acquired OrioleDB, and the sugar daddy’s needs come first.

As a “cloud database provider”, offloading cold data to “cheap” object storage instead of “premium” EBS block storage is quite profitable. Plus, it makes databases stateless “cattle” that can be freely scaled in K8s. Their motivation is crystal clear.

So I’m pretty excited that OrioleDB not only offers a new storage engine but also supports object storage. While PG-over-S3 projects exist, this is the first mature, mainline-compatible, open-source solution.

So, How Do I Try It?

OrioleDB sounds great - solving key PG issues, (future) mainline compatibility, open-source, well-funded, and led by Alexander Korotkov who has serious PG community cred.

Obviously, OrioleDB isn’t “production-ready” yet. I’ve watched it from Alpha1 three years ago to Beta10 now, each release making me more antsy. But I noticed it’s now in Supabase’s postgres mainline - release can’t be far off.

So when OrioleDB dropped beta10 on April 1st, I decided to package it. Fresh off building OpenHalo RPMs and a MySQL-compatible PG kernel, what’s one more? I created RPM packages for the patched PG kernel (oriolepg_17) and extension (orioledb_17), available for EL8/EL9 on x86/ARM64.

Better yet, I added native OrioleDB support to Pigsty, meaning OrioleDB gets the full PG ecosystem - Patroni for HA, pgBackRest for backups, pg_exporter for monitoring, pgbouncer for connection pooling, all wrapped up in a one-click production-grade RDS service:

This Qingming Festival, I released Pigsty v3.4.1 with built-in OrioleDB and OpenHalo kernel support. Spinning up an OrioleDB cluster is as simple as a regular PostgreSQL cluster:

all:

children:

pg-orio:

vars:

pg_databases:

- {name: meta ,extensions: [orioledb]}

vars:

pg_mode: oriole

pg_version: 17

pg_packages: [ orioledb, pgsql-common ]

pg_libs: 'orioledb.so, pg_stat_statements, auto_explain'

repo_extra_packages: [ orioledb ]

More Kernel Tricks

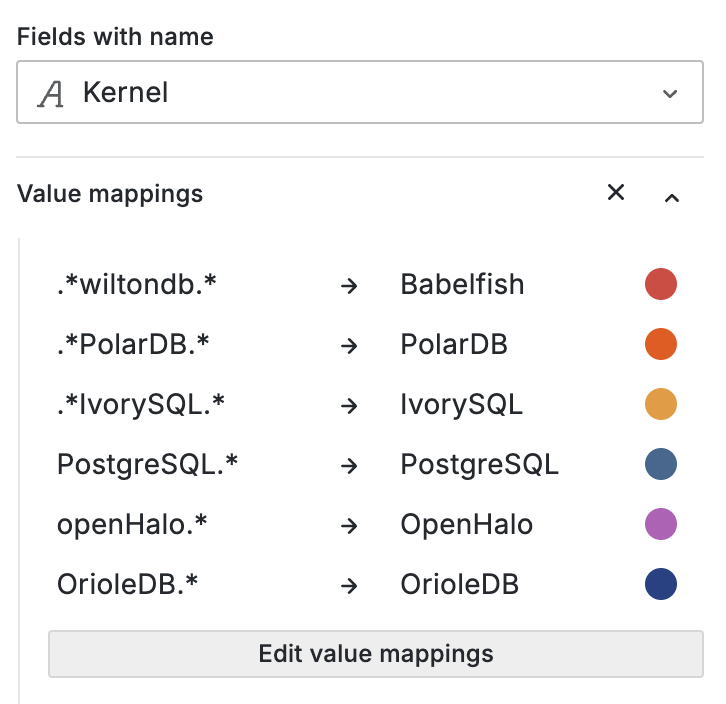

Of course, OrioleDB isn’t the only PG fork we support. You can also use:

- Microsoft SQL Server-compatible Babelfish (by AWS)

- Oracle-compatible IvorySQL (by HighGo)

- MySQL-compatible openHalo (by EsgynDB)

- Aurora RAC-flavored PolarDB (by Alibaba Cloud)

- Officially certified Oracle-compatible PolarDB O 2.0

- FerretDB + Microsoft’s DocumentDB to emulate MongoDB

- One-click local Supabase (OrioleDB’s parent!) deployment using Pigsty templates

Plus, my friend Yurii, Omnigres founder, is adding ETCD protocol support to PostgreSQL. Soon, you might be able to use PG as a better-performing, more reliable etcd for Kubernetes/Patroni.

Best of all, everything’s open-source and ready to roll in Pigsty, free of charge. So if you’re curious about OrioleDB, grab a server and give it a shot - 10-minute setup, one command. Let’s see if it lives up to the hype.

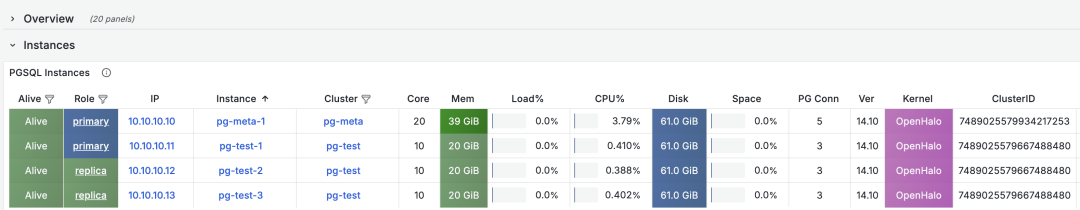

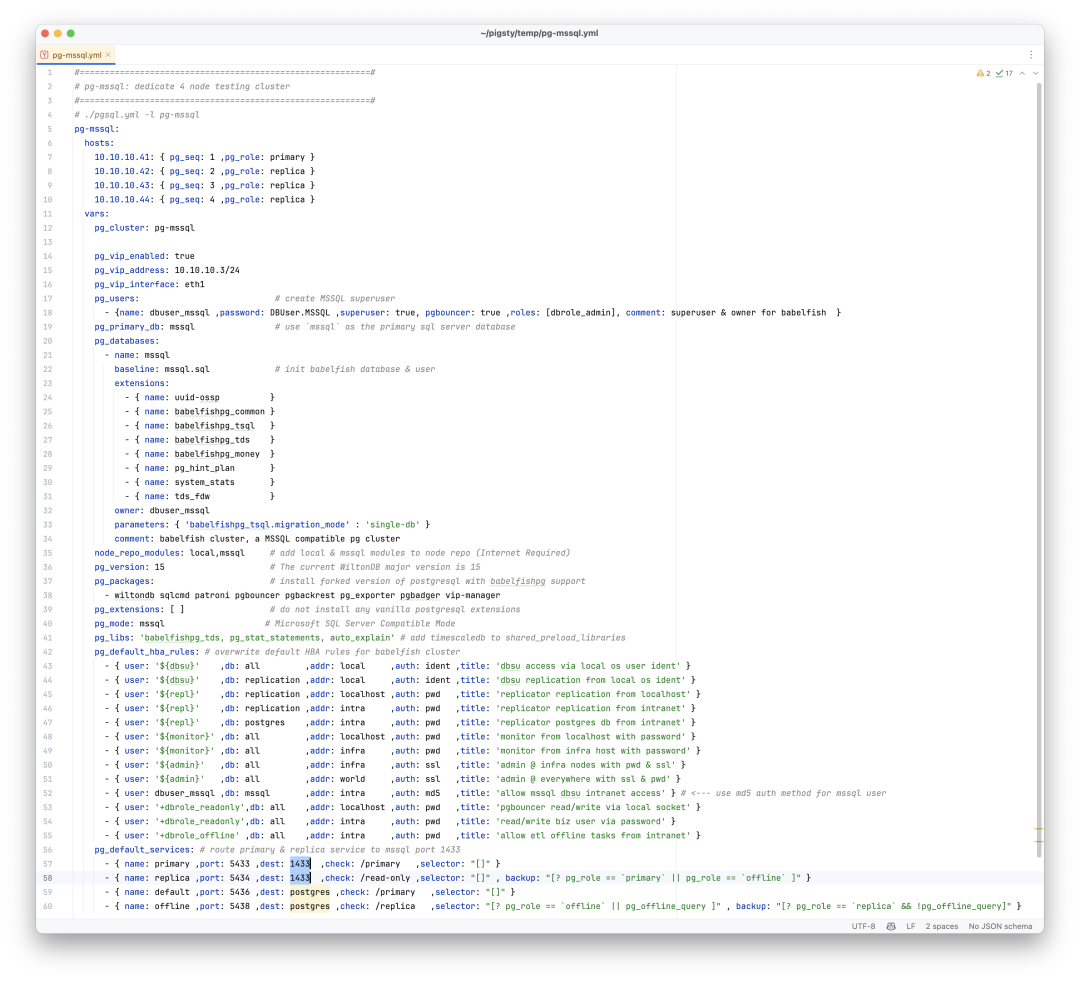

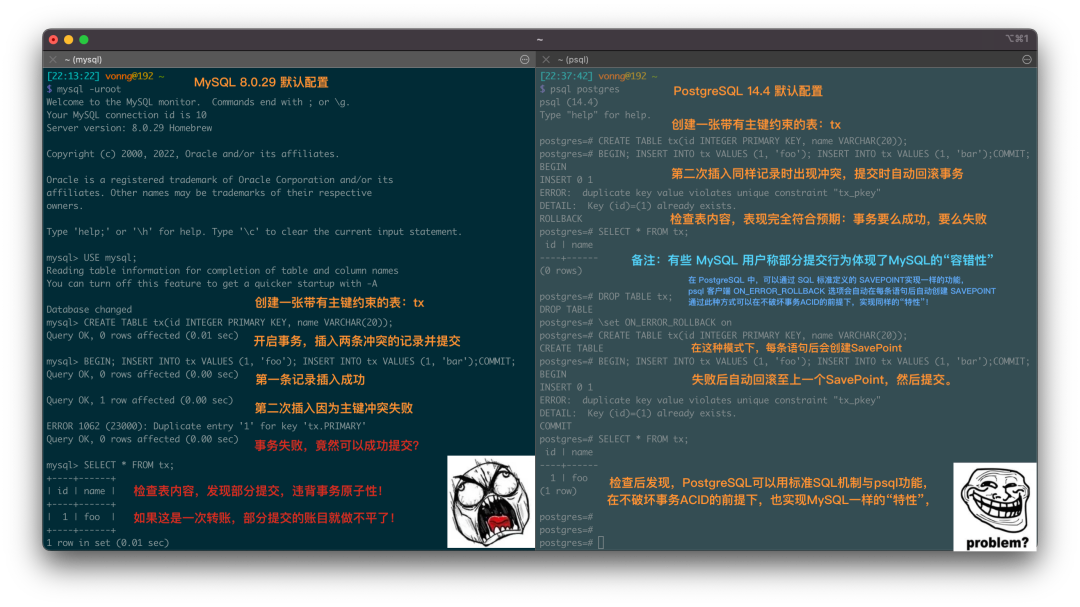

OpenHalo: PostgreSQL Now Speaks MySQL Wire Protocol!

PostgreSQL speaking MySQL? Yes, you read that right. OpenHalo, freshly open-sourced on April Fools’ Day, brings this capability to life - allowing users to read, write, and manage the same database using both MySQL and PostgreSQL clients. Built on PG 14.10, it provides MySQL 5.7 wire protocol compatibility.

OpenHalo just open-sourced their MySQL-compatible PG kernel. I’ve packaged it into RPMs and integrated it into Pigsty. The deployment is butter-smooth, and after a few code tweaks, it plays nicely with HA, monitoring, and backup components.

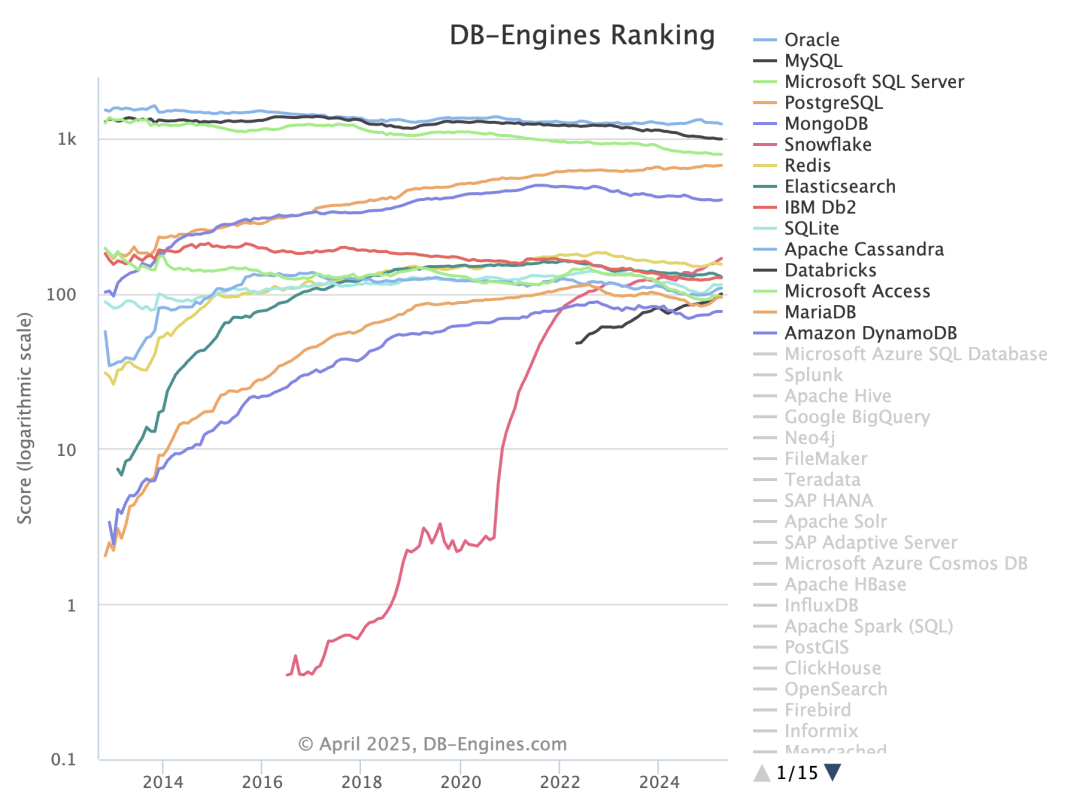

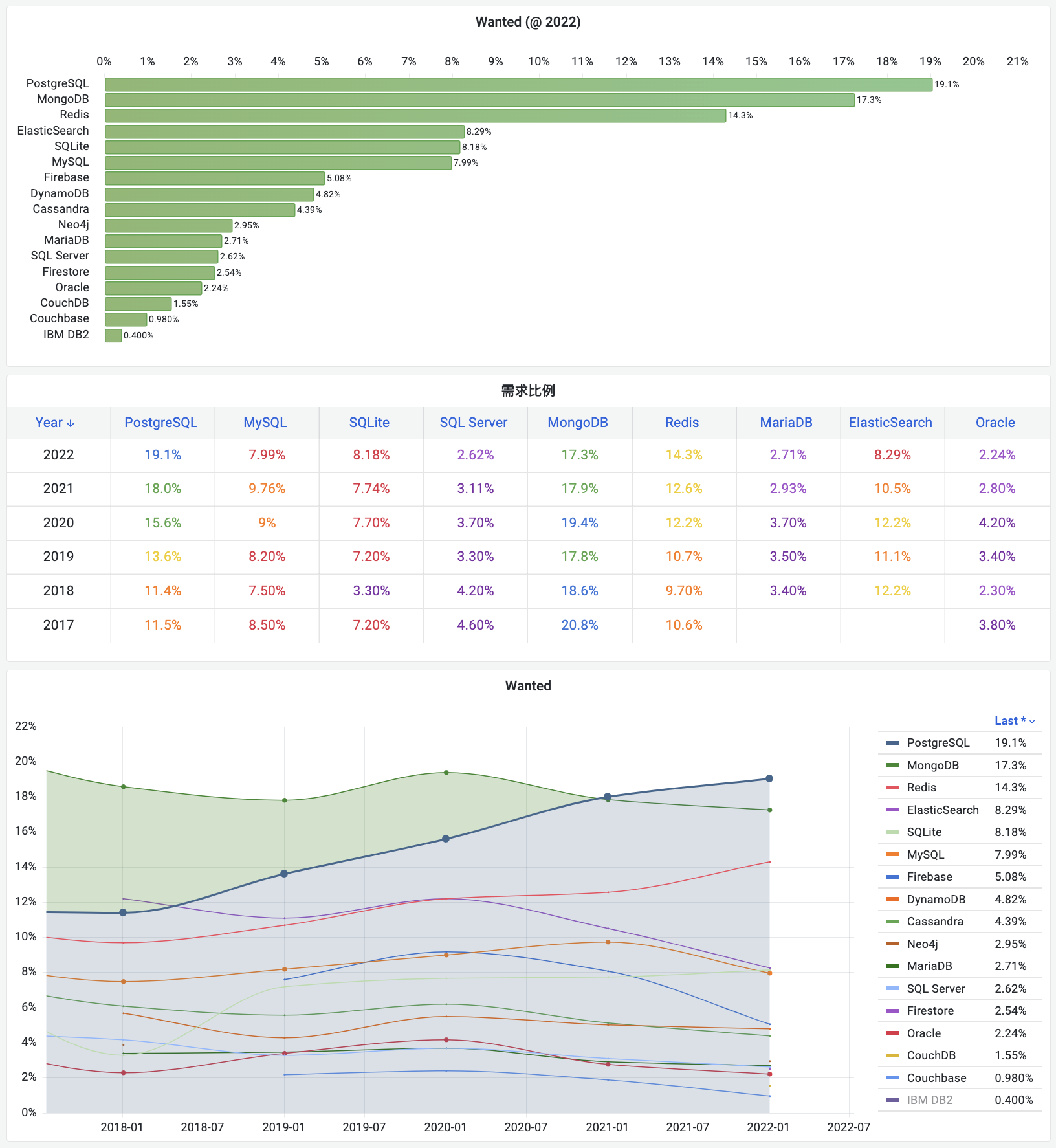

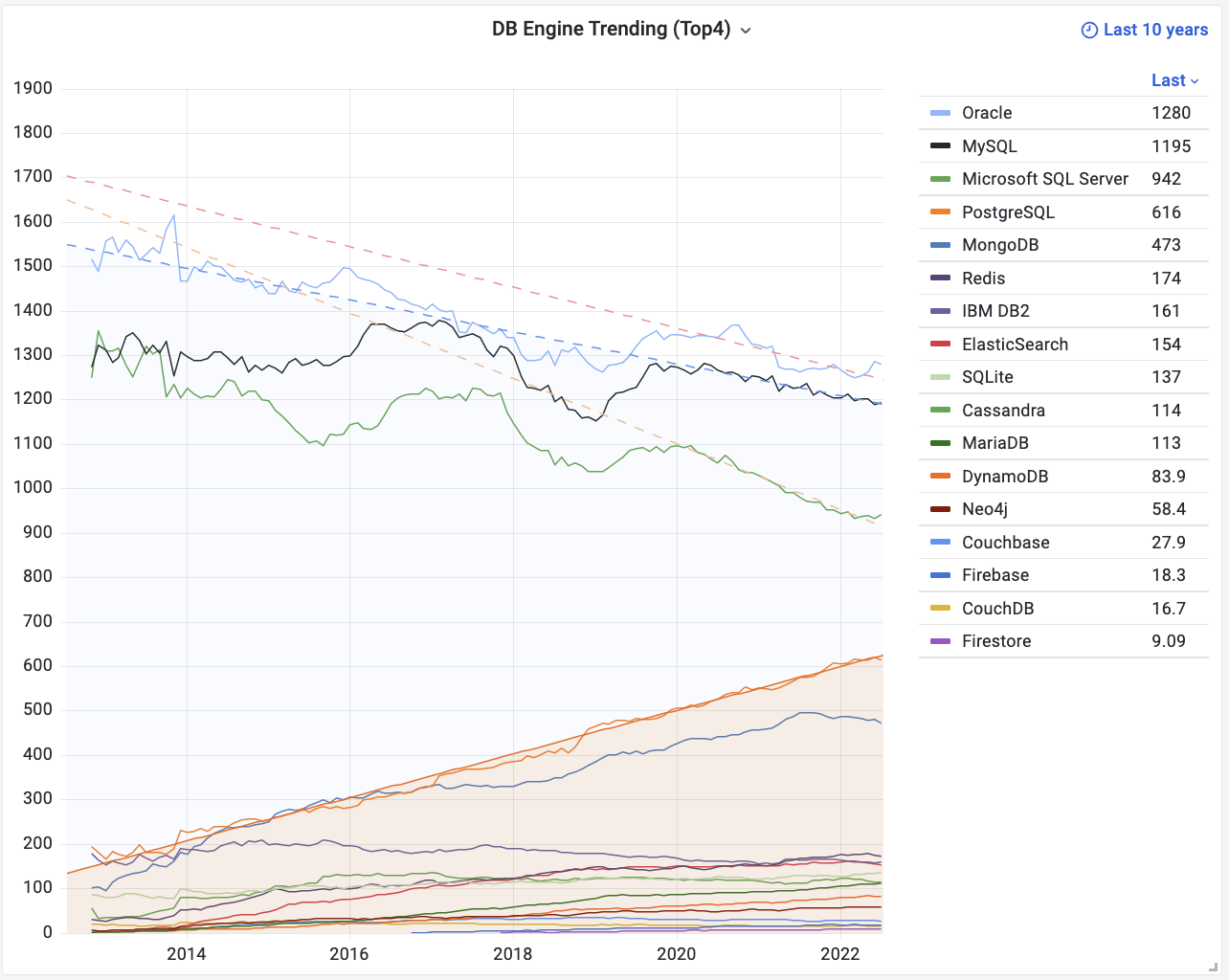

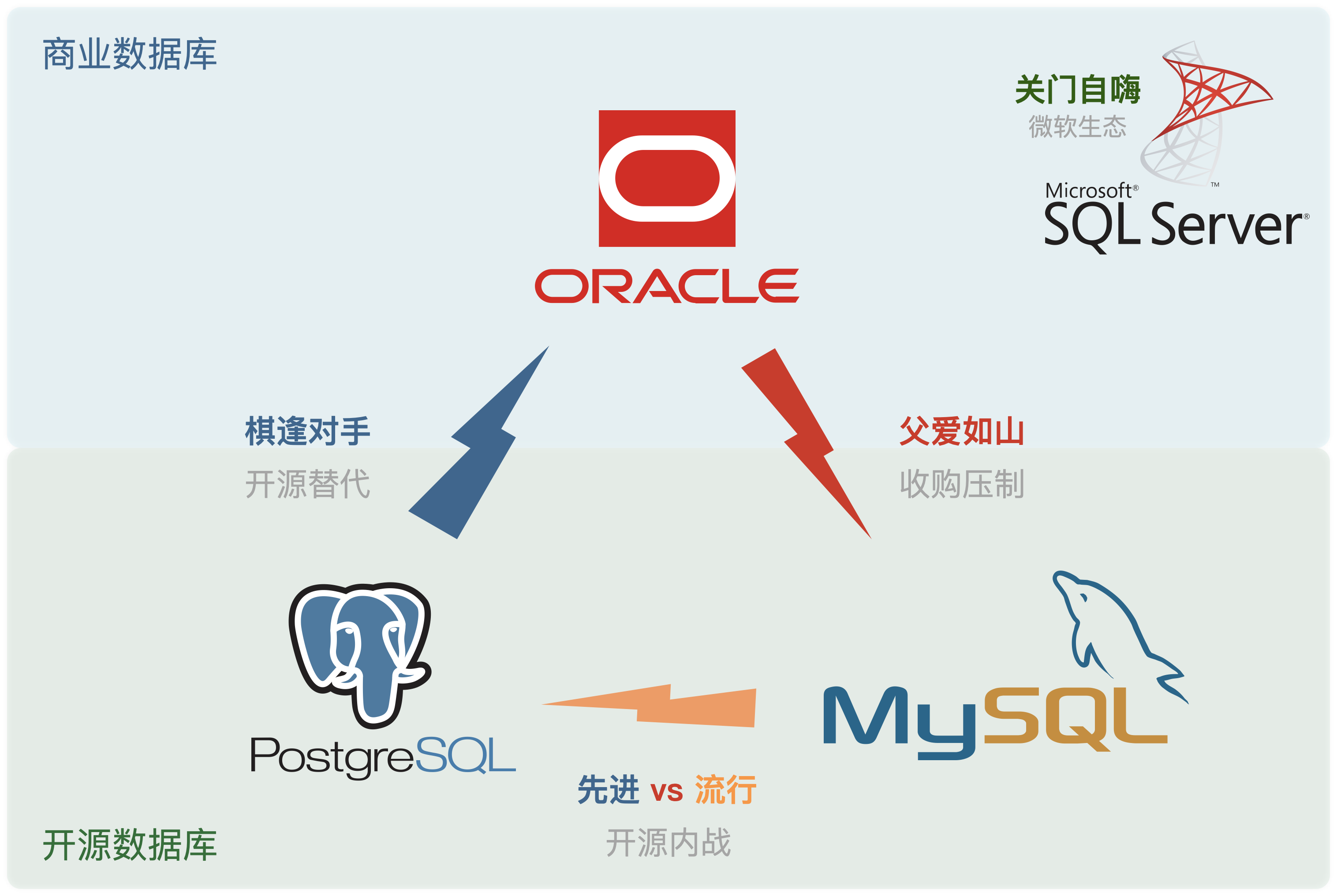

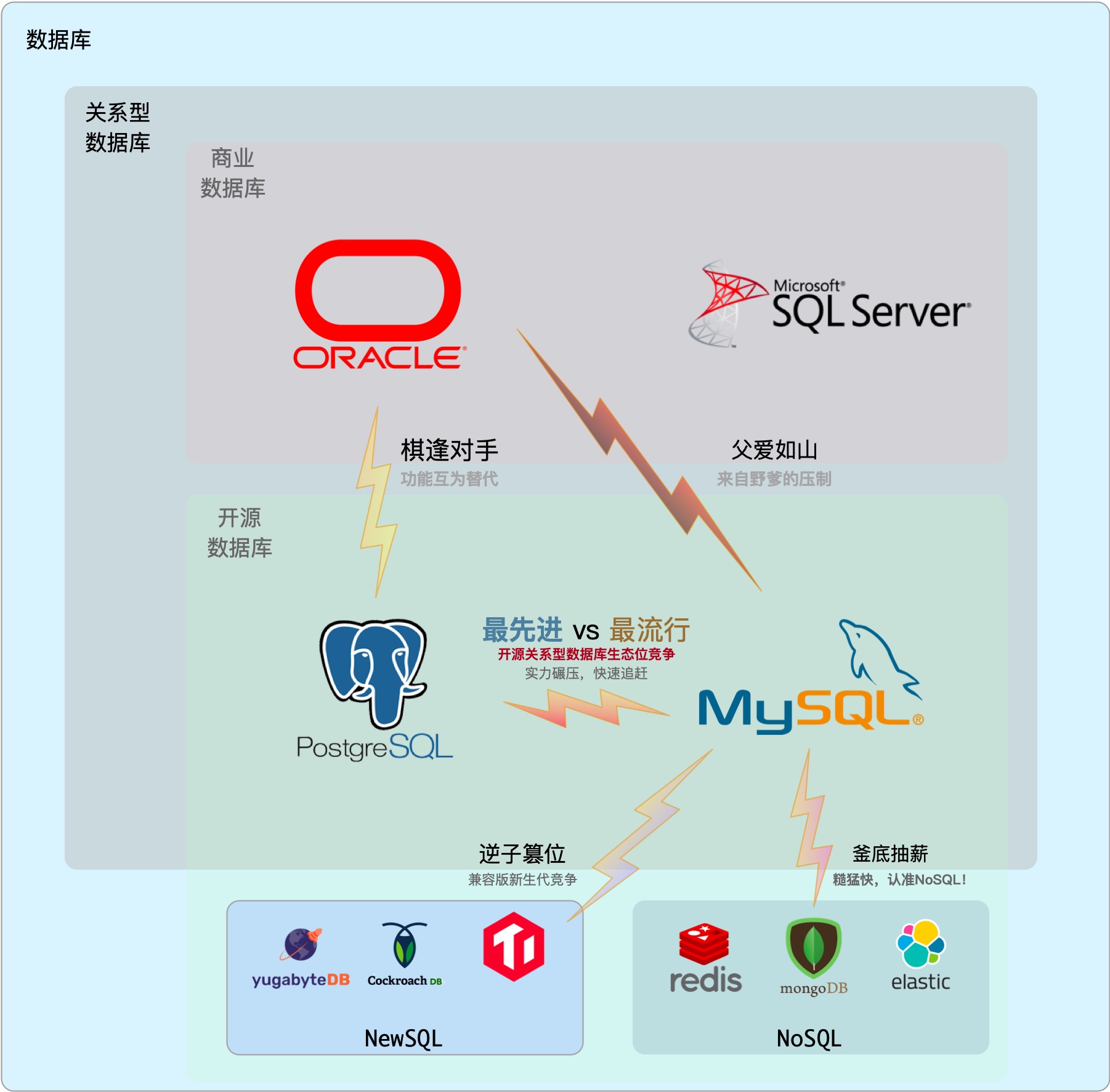

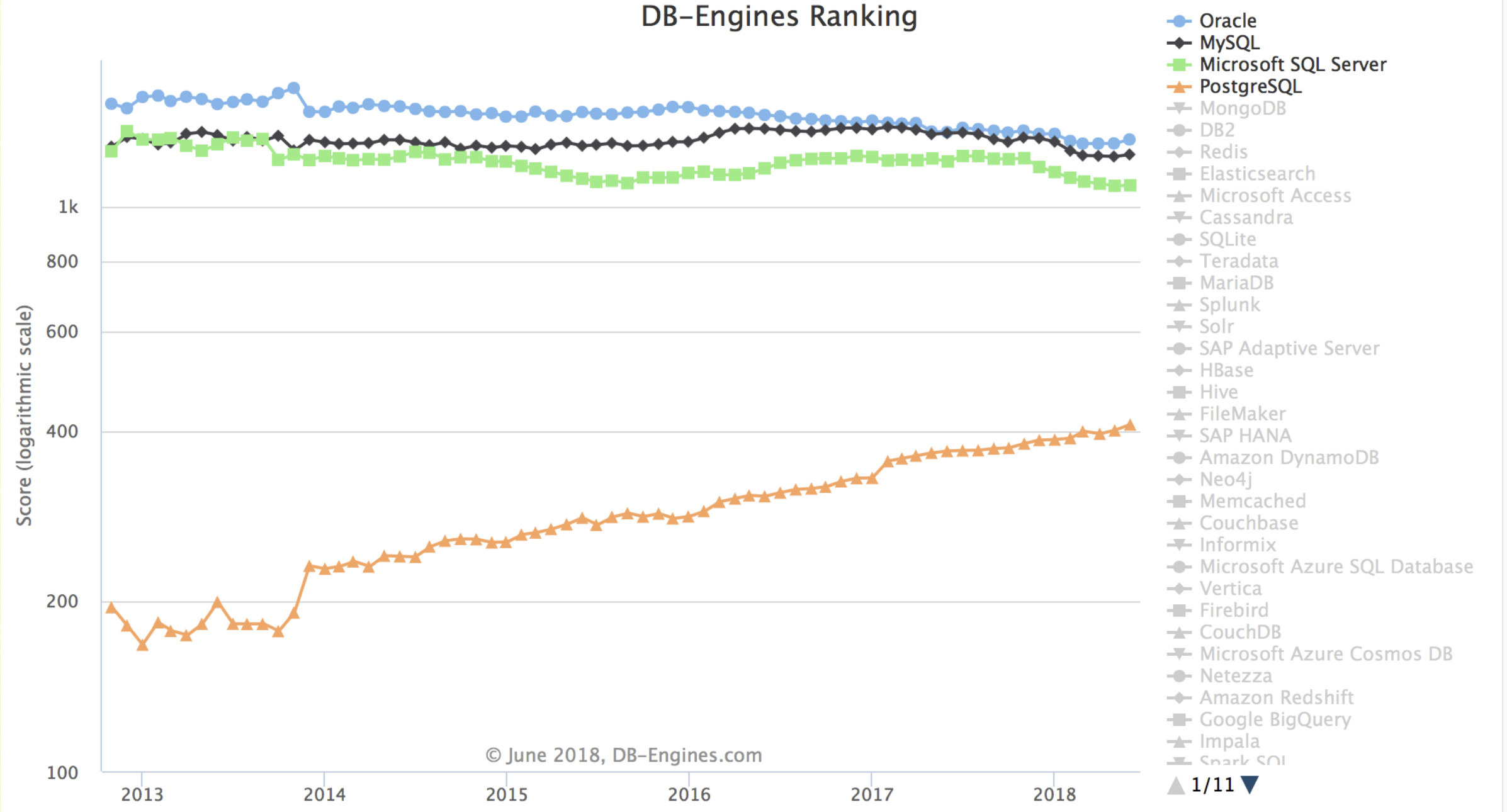

On the DB-Engines ranking, five databases stand head and shoulders above the rest: Oracle, SQL Server, MySQL, PostgreSQL, and MongoDB.

Here’s the kicker - PostgreSQL can now emulate all four of its top competitors:

- OpenHalo speaks MySQL

- AWS Babelfish speaks SQL Server

- IvorySQL and Alibaba PolarDB O speak Oracle

- FerretDB/Microsoft DocumentDB speaks MongoDB

Fun fact: All these capabilities are available out-of-the-box in Pigsty.

Want to Try It Out?

Pigsty now supports OpenHalo on EL systems. Here’s how to get started:

Follow the Pigsty standard installation process with the mysql config template:

curl -fsSL https://repo.pigsty.cc/get | bash; cd ~/pigsty

./bootstrap # Prepare dependencies

./configure -c mysql # Use MySQL (OpenHalo) template

./install.yml # Install (modify passwords in pigsty.yml for prod)

Pro tip: For production deployments, modify the passwords in pigsty.yml before running the installation playbook.

OpenHalo’s configuration mirrors PostgreSQL’s. You can use psql to connect to the postgres database and mysql CLI to connect to the mysql database.

all:

children:

pg-orio:

vars:

pg_databases:

- {name: postgres ,extensions: [aux_mysql]}

vars:

pg_mode: mysql # MySQL Compatible Mode by HaloDB

pg_version: 14 # The current HaloDB is compatible with PG Major Version 14

pg_packages: [ openhalodb, pgsql-common, mysql ] # also install mysql client shell

repo_modules: node,pgsql,infra,mysql

repo_extra_packages: [ openhalodb, mysql ] # replace default postgresql kernel with openhalo packages

MySQL’s default port is 3306, and when accessing MySQL, you’re actually connecting to the postgres database. Note that MySQL’s “database” concept maps to PostgreSQL’s “schema”. So use mysql actually uses the mysql schema in the postgres database.

MySQL credentials mirror PostgreSQL’s - manage users and permissions the PostgreSQL way. Currently, OpenHalo officially supports Navicat, though IntelliJ IDEA’s DataGrip might throw some tantrums.

mysql -h 127.0.0.1 -u dbuser_dba

Pigsty’s OpenHalo fork includes some QoL improvements over HaloTech-Co-Ltd/openHalo:

- Default database renamed from

halo0roottopostgres - Version number simplified from

1.0.14.10to14.10 - MySQL compatibility and port 3306 enabled by default

Disclaimer: Pigsty provides no warranty for OpenHalo - direct support queries to the original vendor.

More Kernel Tricks Up Our Sleeve

OpenHalo isn’t the only PG fork Pigsty supports. Check out:

- Babelfish by AWS (SQL Server compatibility)

- IvorySQL by Highgo (Oracle compatibility)

- OrioleDB by Supabase (OLTP performance beast)

- PolarDB by Alibaba Cloud (Aurora RAC flavor)

- PolarDB O 2.0 (Oracle-compatible with Chinese certification)

- FerretDB + Microsoft’s DocumentDB (MongoDB emulation)

- One-click Supabase local deployment (OrioleDB’s parent!)

BTW, my friend Yurii, Omnigres founder, is working on ETCD protocol support for PostgreSQL. Soon you might be able to use PG as a beefed-up etcd for Kubernetes/Patroni.

Best part? All this goodness is open-source and ready to roll in Pigsty. Want to take OpenHaloDB for a spin? Grab a server, and you’re 10 minutes away from finding out if it lives up to the hype.

PGFS: Using PostgreSQL as a File System

Recently, I received an interesting request from the Odoo community. They were grappling with a fascinating challenge: “If databases can do PITR (Point-in-Time Recovery), is there a way to roll back the file system as well?”

The Birth of “PGFS”

From a database veteran’s perspective, this is both challenging and exciting. We all know that in systems like Odoo, the most valuable asset is the core business data stored in PostgreSQL.

However, many “enterprise applications” also deal with file operations — attachments, images, documents, and the like. While these files might not be as “mission-critical” as the database, having the ability to roll back both the database and files to a specific point in time would be incredibly valuable from security, data integrity, and operational perspectives.

This led me to an intriguing thought: Could we give file systems PITR capabilities similar to databases? Traditional approaches often point to expensive and complex CDP (Continuous Data Protection) solutions, requiring specialized hardware or block-level storage logging. But I wondered: Could we solve this elegantly with open-source technology for the “rest of us”?

After much contemplation, a brilliant combination emerged: JuiceFS + PostgreSQL. By transforming PostgreSQL into a file system, all file writes would be stored in the database, sharing the same WAL logs and enabling rollback to any historical point. This might sound like science fiction, but hold on — it actually works. Let’s see how JuiceFS makes this possible.

Meet JuiceFS: When Database Becomes a File System

JuiceFS is a high-performance, cloud-native distributed file system that can mount object storage (like S3/MinIO) as a local POSIX file system. It’s incredibly lightweight to install and use, requiring just a few commands to format, mount, and start reading/writing.

For example, these commands will use SQLite as JuiceFS’s metadata store and a local path as object storage for testing:

juicefs format sqlite3:///tmp/jfs.db myjfs # Use SQLite3 for metadata, local FS for data

juicefs mount sqlite3:///tmp/jfs.db ~/jfs -d # Mount the filesystem to ~/jfs

The magic happens when you realize that JuiceFS also supports PostgreSQL as both metadata and object data storage backend! This means you can transform any PostgreSQL instance into a “file system” by simply changing JuiceFS’s backend.

So, if you have a PostgreSQL database (like one installed via Pigsty), you can spin up a “PGFS” with just a few commands:

METAURL=postgres://dbuser_meta:DBUser.Meta@:5432/meta

OPTIONS=(

--storage postgres

--bucket :5432/meta

--access-key dbuser_meta

--secret-key DBUser.Meta

${METAURL}

jfs

)

juicefs format "${OPTIONS[@]}" # Create a PG filesystem

juicefs mount ${METAURL} /data2 -d # Mount in background to /data2

juicefs bench /data2 # Test performance

juicefs umount /data2 # Unmount

Now, any data written to /data2 is actually stored in the jfs_blob table in PostgreSQL. In other words, this file system and PG database have become one!

PGFS in Action: File System PITR

Imagine we have an Odoo instance that needs to store file data in /var/lib/odoo or similar.

Traditionally, if you needed to roll back Odoo’s database, while the database could use WAL logs for point-in-time recovery, the file system would still rely on external snapshots or CDP.

But now, if we mount /var/lib/odoo to PGFS, all file system writes become database writes.

The database is no longer just storing SQL data; it’s also hosting the file system information.

This means: When performing PITR, not only does the database roll back to a specific point, but files instantly “roll back” with the database to the same moment.

Some might ask, “Can’t ZFS do snapshots too?” Yes, ZFS can create and roll back snapshots, but that’s still based on specific snapshot points. For precise rollback to a specific second or minute, you need true log-based solutions or CDP capabilities. The JuiceFS+PG combination effectively writes file operation logs into the database’s WAL, which is something PostgreSQL is naturally great at.

Let’s demonstrate this with a simple experiment. First, we’ll write timestamps to the file system while continuously inserting heartbeat records into the database:

while true; do date "+%H-%M-%S" >> /data2/ts.log; sleep 1; done

/pg/bin/pg-heartbeat # Generate database heartbeat records

tail -f /data2/ts.log

Then, let’s verify the JuiceFS table in PostgreSQL:

postgres@meta:5432/meta=# SELECT min(modified),max(modified) FROM jfs_blob;

min | max

----------------------------+----------------------------

2025-03-21 02:26:00.322397 | 2025-03-21 02:40:45.688779

When we decide to roll back to, say, one minute ago (2025-03-21 02:39:00), we just execute:

pg-pitr --time="2025-03-21 02:39:00" # Using pgbackrest to roll back to specific time, actual command:

pgbackrest --stanza=pg-meta --type=time --target='2025-03-21 02:39:00+00' restore

What? You’re asking where PITR and pgBackRest came from? Pigsty has already configured monitoring, backup, high availability, and more out of the box! You can set it up manually too, but it’s a bit more work.

Then when we check the file system logs and database heartbeat table, both have stopped at 02:39:00:

$ tail -n1 /data2/ts.log

02-38-59

$ psql -c 'select * from monitor.heartbeat'

id | ts | lsn | txid

---------+-------------------------------+-----------+------

pg-meta | 2025-03-21 02:38:59.129603+00 | 251871544 | 2546

This proves our approach works! We’ve successfully achieved FS/DB consistent PITR through PGFS!

How’s the Performance?

So we’ve got the functionality, but how does it perform?

I ran some tests on a development server with SSD using the built-in juicefs bench, and the results look promising — more than enough for applications like Odoo:

$ juicefs bench ~/jfs # Simple single-threaded performance test

BlockSize: 1.0 MiB, BigFileSize: 1.0 GiB,

SmallFileSize: 128 KiB, SmallFileCount: 100, NumThreads: 1

Time used: 42.2 s, CPU: 687.2%, Memory: 179.4 MiB

+------------------+------------------+---------------+

| ITEM | VALUE | COST |

+------------------+------------------+---------------+

| Write big file | 178.51 MiB/s | 5.74 s/file |

| Read big file | 31.69 MiB/s | 32.31 s/file |

| Write small file | 149.4 files/s | 6.70 ms/file |

| Read small file | 545.2 files/s | 1.83 ms/file |

| Stat file | 1749.7 files/s | 0.57 ms/file |

| FUSE operation | 17869 operations | 3.82 ms/op |

| Update meta | 1164 operations | 1.09 ms/op |

| Put object | 356 operations | 303.01 ms/op |

| Get object | 256 operations | 1072.82 ms/op |

| Delete object | 0 operations | 0.00 ms/op |

| Write into cache | 356 operations | 2.18 ms/op |

| Read from cache | 100 operations | 0.11 ms/op |

+------------------+------------------+---------------+

Another sample: Alibaba Cloud ESSD PL1 basic disk test results

+------------------+------------------+---------------+

| ITEM | VALUE | COST |

+------------------+------------------+---------------+

| Write big file | 18.08 MiB/s | 56.64 s/file |

| Read big file | 98.07 MiB/s | 10.44 s/file |

| Write small file | 268.1 files/s | 3.73 ms/file |

| Read small file | 1654.3 files/s | 0.60 ms/file |

| Stat file | 7465.7 files/s | 0.13 ms/file |

| FUSE operation | 17855 operations | 4.28 ms/op |

| Update meta | 1192 operations | 16.28 ms/op |

| Put object | 357 operations | 2845.34 ms/op |

| Get object | 255 operations | 327.37 ms/op |

| Delete object | 0 operations | 0.00 ms/op |

| Write into cache | 357 operations | 2.05 ms/op |

| Read from cache | 102 operations | 0.18 ms/op |

+------------------+------------------+---------------+

While the throughput might not match native file systems, it’s more than sufficient for applications with moderate file volumes and lower access frequencies. After all, using a “database as a file system” isn’t about running large-scale storage or high-concurrency writes — it’s about keeping your database and file system “in sync through time.” If it works, it works.

Completing the Vision: One-Click “Enterprise” Deployment

Now, let’s put this all together in a practical scenario — like one-click deploying an “enterprise-grade” Odoo with “automatic” CDP capabilities for files.

Pigsty provides PostgreSQL with external high availability, automatic backup, monitoring, PITR, and more. Installing it is a breeze:

curl -fsSL https://repo.pigsty.cc/get | bash; cd ~/pigsty

./bootstrap # Install Pigsty dependencies

./configure -c app/odoo # Use Odoo configuration template

./install.yml # Install Pigsty

That’s the standard Pigsty installation process. Next, we’ll use playbooks to install Docker, create the PGFS mount, and launch stateless Odoo with Docker Compose:

./docker.yml -l odoo # Install Docker module, launch Odoo stateless components

./juice.yml -l odoo # Install JuiceFS module, mount PGFS to /data2

./app.yml -l odoo # Launch Odoo stateless components, using external PG/PGFS

Yes, it’s that simple. Everything is ready, though the key lies in the configuration file.

The pigsty.yml configuration file would look something like this, with the only modification being the addition of JuiceFS configuration to mount PGFS to /data/odoo:

odoo:

hosts: { 10.10.10.10: {} }

vars:

# ./juice.yml -l odoo

juice_fsname: jfs

juice_mountpoint: /data/odoo

juice_options:

- --storage postgres

- --bucket :5432/meta

- --access-key dbuser_meta

- --secret-key DBUser.Meta

- postgres://dbuser_meta:DBUser.Meta@:5432/meta

- ${juice_fsname}

# ./app.yml -l odoo

app: odoo # specify app name to be installed (in the apps)

apps: # define all applications

odoo: # app name, should have corresponding ~/app/odoo folder

file: # optional directory to be created

- { path: /data/odoo ,state: directory, owner: 100, group: 101 }

- { path: /data/odoo/webdata ,state: directory, owner: 100, group: 101 }

- { path: /data/odoo/addons ,state: directory, owner: 100, group: 101 }

conf: # override /opt/<app>/.env config file

PG_HOST: 10.10.10.10 # postgres host

PG_PORT: 5432 # postgres port

PG_USERNAME: odoo # postgres user

PG_PASSWORD: DBUser.Odoo # postgres password

ODOO_PORT: 8069 # odoo app port

ODOO_DATA: /data/odoo/webdata # odoo webdata

ODOO_ADDONS: /data/odoo/addons # odoo plugins

ODOO_DBNAME: odoo # odoo database name

ODOO_VERSION: 18.0 # odoo image version

After this, you have an “enterprise-grade” Odoo running on the same server: backend database managed by Pigsty, file system mounted via JuiceFS, with JuiceFS’s backend connected to PostgreSQL. When a “rollback need” arises, simply perform PITR on PostgreSQL, and both files and database will “roll back to the specified moment.” This approach works equally well for similar applications like Dify, Gitlab, Gitea, MatterMost, and others.

Looking back at all this, you’ll realize: What once required expensive hardware and high-end storage solutions to achieve CDP can now be accomplished with a lightweight open-source combination. While it might have a “DIY for the rest of us” feel, it’s simple, stable, and practical enough to be worth exploring in more scenarios.

PostgreSQL Frontier

Dear readers, I’m off on vacation starting today—likely no new posts for about two weeks. Let me wish everyone a Happy New Year in advance.

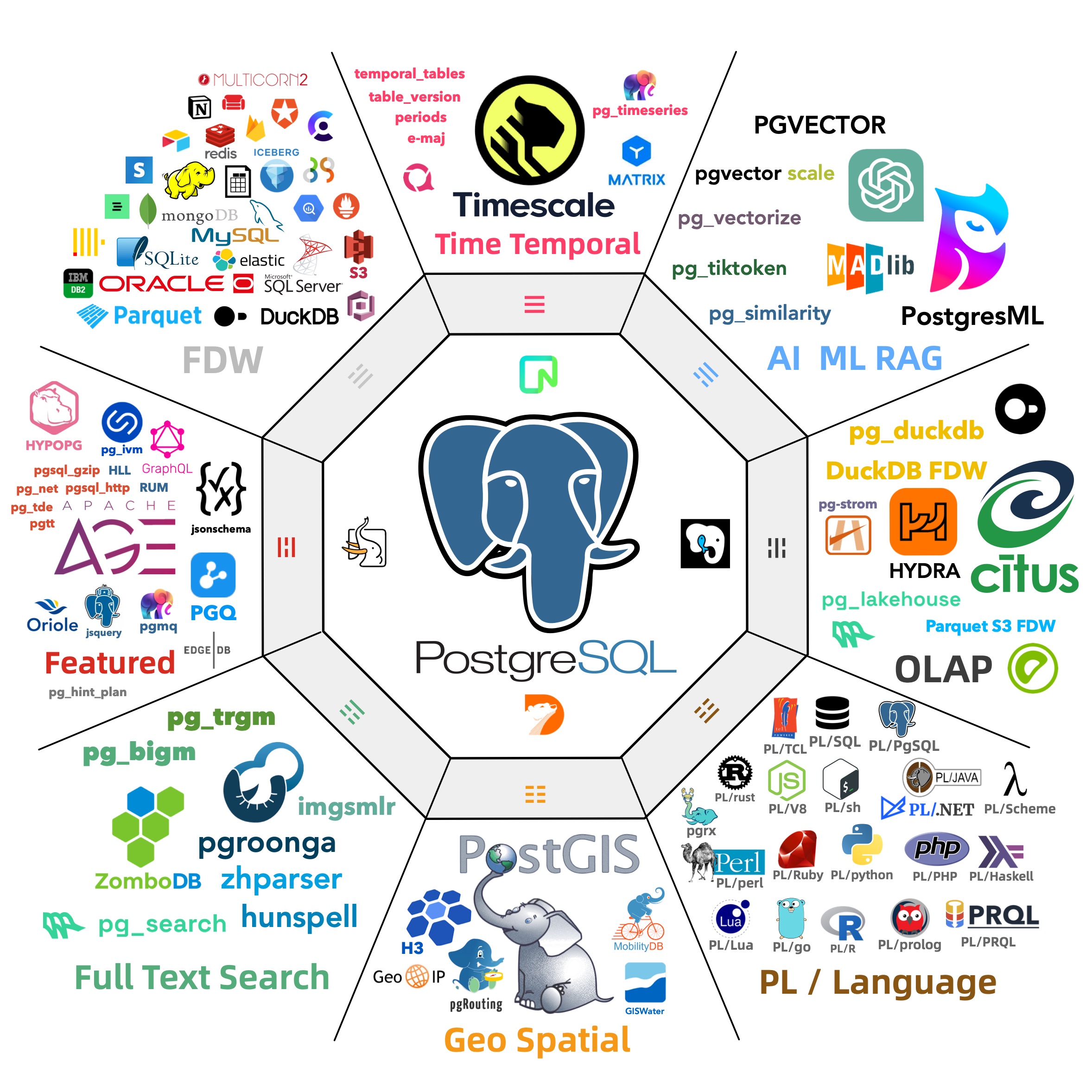

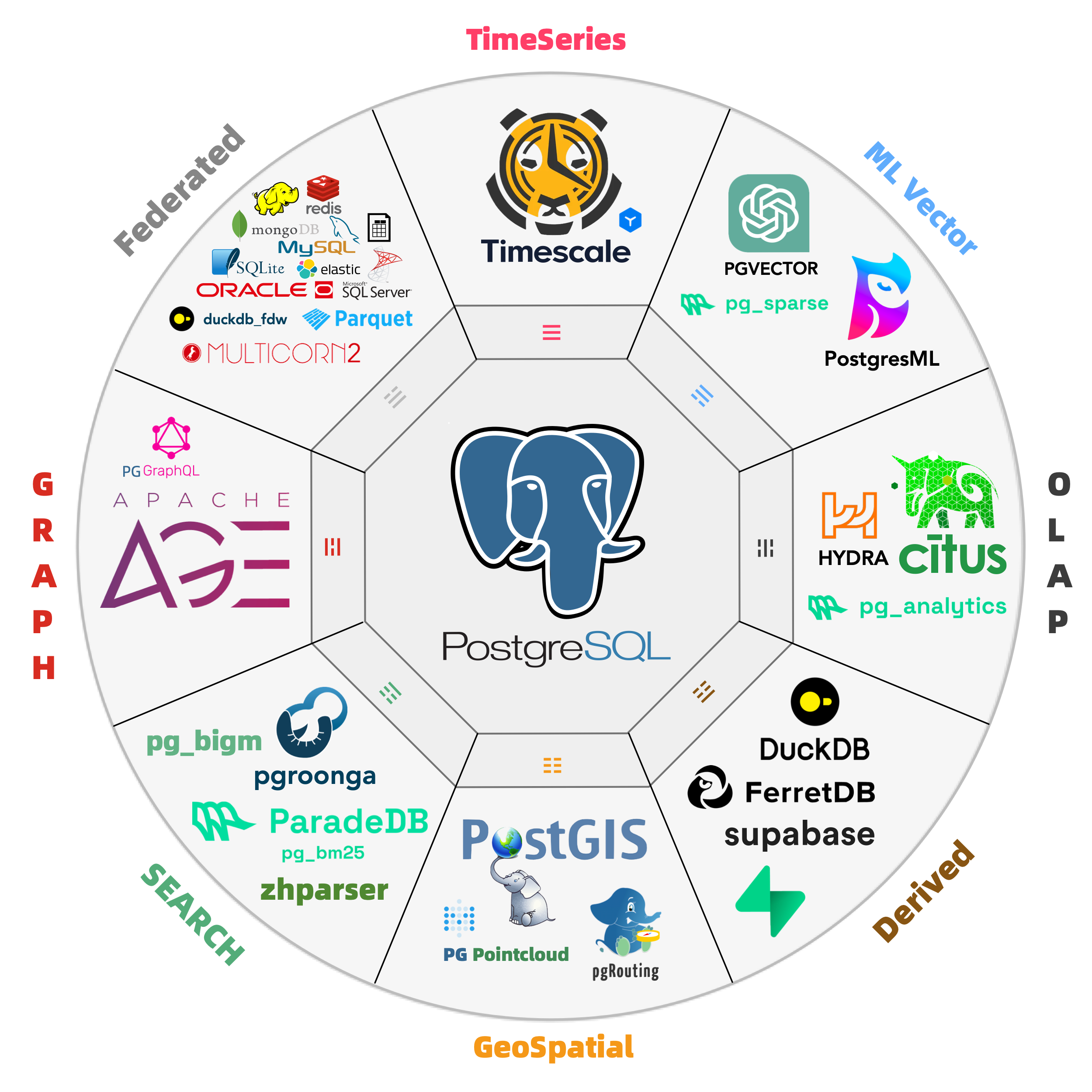

Of course, before heading out, I wanted to share some recent interesting developments in the Postgres (PG) ecosystem. Just yesterday, I hurried to release Pigsty 3.2.2 and Pig v0.1.3 before my break. In this new version, the number of available PG extensions has shot up from 350 to 400, bundling many fascinating toys. Below is a quick rundown:

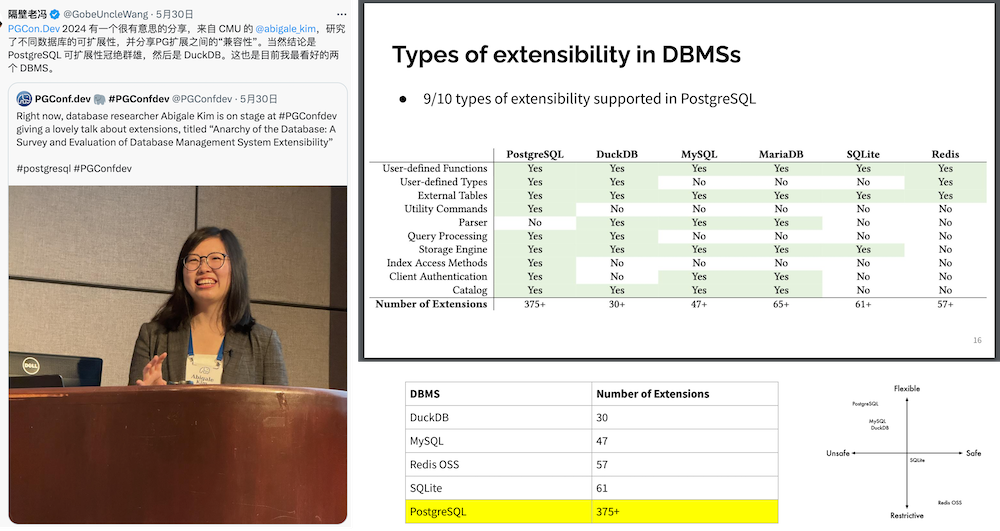

Omnigres: Full-stack web development inside PostgreSQL

PG Mooncake: Bringing ClickHouse-level analytical performance into PG

Citus: Distributed extension for PG17—Citus 13 is out!

FerretDB: MongoDB “compatibility” layer on PG with 20x performance boost in 2.0

ParadeDB: ES-like full-text search in PG with PG block storage

Pigsty 3.2.2: Packs all of the above into one box for immediate use

Omnigres

I introduced Omnigres in a previous article, “Database as Architecture”. In short, it allows you to cram all your business logic—including a web server and the entire backend—into PostgreSQL.

For example, the following SQL will launch a web server and expose /www as the root directory. This means you can package what’s normally a classic three-tier architecture (frontend–backend–database) entirely into a single database!

If you’re familiar with Oracle, you might notice it’s somewhat reminiscent of Oracle Apex. But in PostgreSQL, you have over twenty different languages to develop your stored procedures in—not just PL/SQL! Plus, Omnigres gives you far more than just an HTTPD server; it actually ships 33 extension plugins that function almost like a “standard library” for web development within PG.

They say, “what’s split apart will eventually recombine, what’s recombined will eventually split apart.” In ancient times, many C/S or B/S applications were basically a few clients directly reading/writing to the database. Later, as business logic grew more complex and hardware (relative to business needs) got stretched, we peeled away a lot of functionality from the database, forming the traditional three-tier model.

Now, with significant improvements in hardware performance—giving us surplus capacity on database servers—and with easier ways to write stored procedures, this “split” trend may well reverse. Business logic once stripped out of the database might come back in. I see Omnigres (and Supabase, too) as a renewed attempt at “recombination.”

If you’re running tens of thousands of TPS, dealing with tens of terabytes of data, or handling a life-critical mega-sized core system, this might not be your best approach. But if you’re developing personal projects, small websites, or an early-stage startup with an innovative, smaller-scale system, this architecture can dramatically speed up your iteration cycle, simplifying both development and operations.

Pigsty v3.2.2 comes with the Omnigres extension included—this took quite some effort. With hands-on help from the original author, Yurii, we managed to build and package it for 10 major Linux distributions. Note that these extensions come from an independent repo you can use on its own—you’re not required to run Pigsty just to get them. (Omnigres and AutoBase PG both rely on this repo for extension distribution, a terrific example of open-source ecosystems thriving on mutual benefit.)

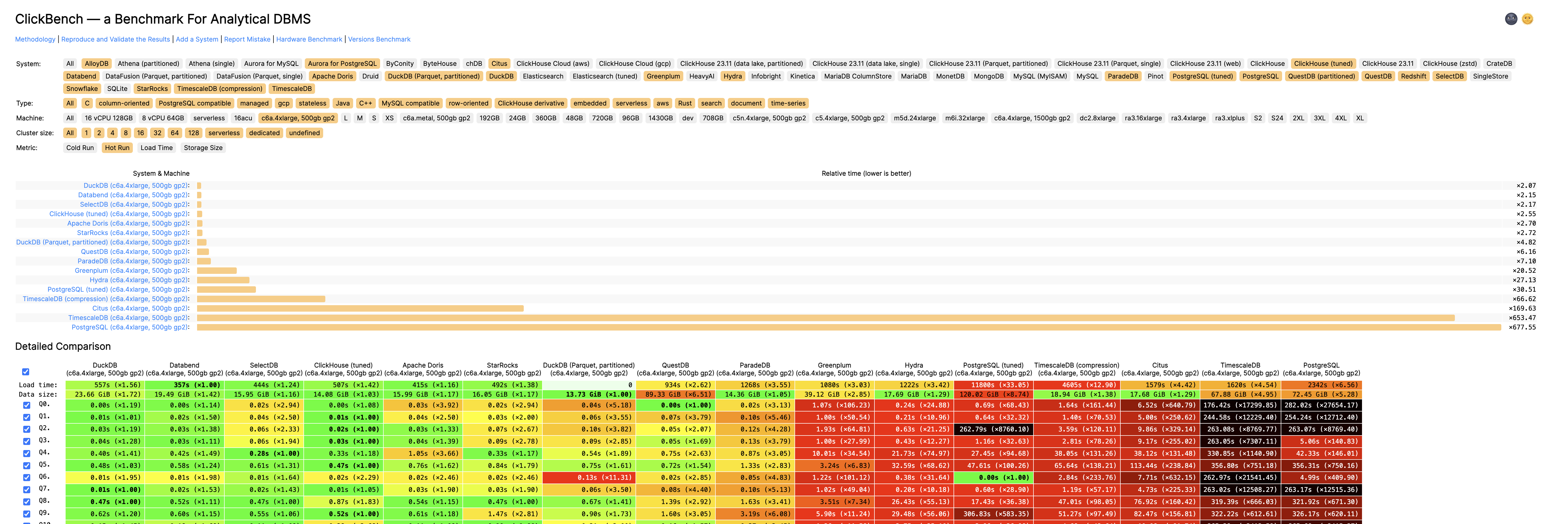

pg_mooncake

Ever since the “DuckDB Mash-up Contest” kicked off, pg_mooncake was the last entrant. At one point, I almost thought they had gone dormant. But last week, they dropped a bombshell with their new 0.1.0 release, catapulting themselves directly into the top 10 on the ClickBench leaderboard, right alongside ClickHouse.

This is the first time a PostgreSQL setup—plus an extension—has broken into that Tier 0 bracket on an analytical leaderboard. It’s a milestone worth noting. Looks like pg_duckdb just met a fierce contender—and that’s good news for everyone, since we now have multiple ways to do high-performance analytics in PG. Internal competition keeps the ecosystem thriving, and it also widens the gap between the entire Postgres ecosystem and other DBMSs.

Most people still see PostgreSQL as a rock-solid OLTP database, rarely associating it with “real-time analytics.” Yet PostgreSQL’s extensibility allows it to transcend that image and carve out new territory in real-time analytics. The pg_mooncake team leveraged PG’s extensibility to write a native extension that embeds DuckDB’s query engine for columnar queries. This means queries can process data in batches (instead of row-by-row) and utilize SIMD instructions, yielding significant speedups in scanning, grouping, and aggregation.

pg_mooncake also employs a more efficient metadata mechanism: instead of fetching metadata and statistics externally from Parquet or some other storage, it stores them directly in PostgreSQL. This speeds up query optimization and execution, and enables higher-level features such as file-level skipping to accelerate scans.

All these optimizations have yielded impressive performance results—reportedly up to 1000x faster. That means PostgreSQL is no longer just a “heavy-duty workhorse” for OLTP. With the right engineering and optimization, it can go head-to-head with specialized analytical databases while retaining PG’s hallmark flexibility and vast ecosystem. This could simplify the entire data stack—no more complicated big-data toolkits or ETL pipelines. Top-tier analytics can happen directly inside Postgres.

Pigsty v3.2.2 now officially includes the mooncake 0.1 binary. Note that this extension conflicts with pg_duckdb since both bundle their own libduckdb. You can only choose one of them on a given system. That’s a bit of a pity—I filed an issue suggesting they share a single libduckdb. It’s exhausting that each extension builds DuckDB from scratch, especially when you’re compiling them both.

Finally, you can tell from the name “mooncake” that it’s led by a Chinese-speaking team. It’s awesome to see more people from China contributing and standing out in the Postgres ecosystem.

Blog: ClickBench says “Postgres is a great analytics database” https://www.mooncake.dev/blog/clickbench-v0.1

ParadeDB

ParadeDB is an old friend of Pigsty. We’ve supported ParadeDB from its very early days and watched it grow into the leading solution in the PostgreSQL ecosystem to provide an ElasticSearch-like capability.

pg_search is ParadeDB’s extension for Postgres, implementing a custom index that supports full-text search and analytics. It’s powered underneath by a Rust-based search library Tantivy, inspired by Lucene.

pg_search just released version 0.14 in the past two weeks, switching to PG’s native block storage instead of relying on Tantivy’s own file format. This is a huge architectural shift that dramatically boosts reliability and yields multiple times the performance. It’s no longer just some “stitch-it-together hack”—it’s now deeply embedded into PG.

Prior to v0.14.0, pg_search did not use Postgres’s block storage or buffer cache. The extension managed its own files outside Postgres control, reading them directly from disk. While it’s not unusual for an extension to access the file system directly (see note 1), migrating to block storage delivers:

- Deep integration with Postgres WAL (write-ahead logging), enabling physical replication of indexes.

- Support for crash recovery and point-in-time recovery (PITR).

- Full support for Postgres MVCC (multi-version concurrency control).

- Integration with Postgres’s buffer cache, significantly boosting index build speed and write throughput.

The latest version of pg_search is now included in Pigsty. Of course, we also bundle other full-text search / tokenizing extensions like pgroonga, pg_bestmatch, hunspell, and Chinese tokenizer zhparser, so you can pick the best fit.

Blog: Full-text search with Postgres block storage layout https://www.paradedb.com/blog/block_storage_part_one

citus

While pg_duckdb and pg_mooncake represent the new wave of OLAP in the PG ecosystem, Citus (and Hydra) are more old-school OLAP— or perhaps HTAP—extensions. Just the day before yesterday, Citus 13.0.0 was released, officially supporting the latest PostgreSQL version 17. That means all the major extensions now have PG17-compatible releases. Full speed ahead for PG17!

Citus is a distributed extension for PG, letting you seamlessly turn a single Postgres primary–replica deployment into a horizontally scaled cluster. Microsoft acquired Citus and fully open-sourced it; the cloud version is called Hyperscale PG or CosmosDB PG.

In reality, most users nowadays don’t push the hardware to the point that they absolutely need a distributed database—but such scenarios do exist. For instance, in “Escaping from cloud fraude” (an article about someone trying to escape steep cloud costs), the user ended up considering Citus to offset expensive cloud disk usage. So, Pigsty has also updated and included full Citus support.

Typically, a distributed database is more of a headache to administer than a simple primary–replica setup. But we devised an elegant abstraction so deploying and managing Citus is pretty straightforward—just treat them as multiple horizontal PG clusters. A single configuration file can spin up a 10-node Citus cluster with one command.

I recently wrote a tutorial on how to deploy a highly available Citus cluster. Feel free to check it out: https://pigsty.cc/docs/tasks/citus/

Blog: Release notes for Citus v13.0.0: https://github.com/citusdata/citus/blob/v13.0.0/CHANGELOG.md

FerretDB

Finally, we have FerretDB 2.0. FerretDB is another old friend of Pigsty. Marcin reached out to me right away to share the excitement of the new release. Unfortunately, 2.0 is still in RC, so I couldn’t package it into the Pigsty repo in time for the v3.2.2 release. No worries—it’ll be included next time!

FerretDB turns PostgreSQL into a “wire-protocol compatible” MongoDB. It’s licensed under Apache 2.0—truly open source. FerretDB 2.0 leverages Microsoft’s newly open-sourced DocumentDB PostgreSQL extension, delivering major improvements in performance, compatibility, support, and flexibility. Highlights include:

- Over 20x performance boost

- Greater feature parity

- Vector search support

- Replication support

- Broad community backing and services

FerretDB offers a low-friction path for MongoDB users to migrate to PostgreSQL. You don’t need to touch your application code—just swap out the back end and voilà. You get the MongoDB API compatibility plus the superpowers of the entire PG ecosystem, which offers hundreds of extensions.

Blog: https://blog.ferretdb.io/ferretdb-releases-v2-faster-more-compatible-mongodb-alternative/

Pigsty 3.2.2

And that brings us to Pigsty v3.2.2. This release adds 40 brand-new extensions (33 of which come from Omnigres) and updates many existing ones (Citus, ParadeDB, PGML, etc.). We also contributed to and followed up on PolarDB PG’s ARM64 support, as well as support for Debian systems, and tracked IvorySQL’s latest 4.2 release compatible with PostgreSQL 17.2.

Sure, it may sound like a bunch of version sync chores, but if it weren’t for those chores, I wouldn’t have dropped this release a day before my vacation! Anyway, I hope you’ll give these new extensions a try. If you run into any issues, feel free to let me know—just understand I can’t guarantee a quick response while I’m off.

One more thing: some users told me the old Pigsty website was “ugly”—basically overflowing with “tech-bro aesthetic,” cramming all the info into a single dense page. They have a point, so I recently used a front-end template to give the homepage a fresh coat of paint. Now it looks a bit more “international.”

To be honest, I haven’t touched front-end in seven or eight years. Last time, it was a jQuery-fest. This time around, Next.js / Vercel / all the new stuff had me dazzled. But once I got my bearings (and thanks to GPT o1 pro plus Cursor), it all came together in a day. The productivity gains with AI these days are truly astounding.

Alright, that’s the latest news from the PostgreSQL world. I’m about to pack my bags—my flight to Thailand departs this afternoon, fingers crossed I don’t run into any phone-scam rings. Let me wish everyone a Happy New Year in advance!

Pig, The PostgreSQL Extension Wizard

Ever wished installing or upgrading PostgreSQL extensions didn’t feel like digging through outdated readmes, cryptic configure scripts, or random GitHub forks & patches? The painful truth is that Postgres’s richness of extension often comes at the cost of complicated setups—especially if you’re juggling multiple distros or CPU architectures.

Enter Pig, a Go-based package manager built to tame Postgres and its ecosystem of 400+ extensions in one fell swoop. TimescaleDB, Citus, PGVector, 20+ Rust extensions, plus every must-have piece to self-host Supabase — Pig’s unified CLI makes them all effortlessly accessible. It cuts out messy source builds and half-baked repos, offering version-aligned RPM/DEB packages that work seamlessly across Debian, Ubuntu, and RedHat flavors. No guesswork, no drama.

Instead of reinventing the wheel, Pig piggyback your system’s native package manager (APT, YUM, DNF) and follow official PGDG packaging conventions to ensure a glitch-free fit. That means you don’t have to choose between “the right way” and “the quick way”; Pig respects your existing repos, aligns with standard OS best practices, and fits neatly alongside other packages you already use.

Ready to give your Postgres superpowers without the usual hassle? Check out GitHub for documentation, installation steps, and a peek at its massive extension list. Then, watch your local Postgres instance transform into a powerhouse of specialized modules—no black magic is required. If the future of Postgres is unstoppable extensibility, Pig is the genie that helps you unlock it. Honestly, nobody ever complained that they had too many extensions.

PIG v0.1 Release | GitHub Repo | Blog: The Idea Way to deliver PG Extensions

Get Started

Install the pig package itself with scripts or the traditional yum/apt way.

curl -fsSL https://repo.pigsty.io/pig | bash

Then it’s ready to use; assume you want to install the pg_duckdb extension:

$ pig repo add pigsty pgdg -u # add pgdg & pigsty repo, update cache

$ pig repo set -u # overwrite all existing repos, brute but effective

$ pig ext install pg17 # install native PGDG PostgreSQL 17 kernels packages

$ pig ext install pg_duckdb # install the pg_duckdb extension (for current pg17)

Extension Management

pig ext list [query] # list & search extension

pig ext info [ext...] # get information of a specific extension

pig ext status [-v] # show installed extension and pg status

pig ext add [ext...] # install extension for current pg version

pig ext rm [ext...] # remove extension for current pg version

pig ext update [ext...] # update extension to the latest version

pig ext import [ext...] # download extension to local repo

pig ext link [ext...] # link postgres installation to path

pig ext build [ext...] # setup building env for extension

Repo Management

pig repo list # available repo list (info)

pig repo info [repo|module...] # show repo info (info)

pig repo status # show current repo status (info)

pig repo add [repo|module...] # add repo and modules (root)

pig repo rm [repo|module...] # remove repo & modules (root)

pig repo update # update repo pkg cache (root)

pig repo create # create repo on current system (root)

pig repo boot # boot repo from offline package (root)

pig repo cache # cache repo as offline package (root)

Don't Update! Rollback Issued on Release Day: PostgreSQL Faces a Major Setback

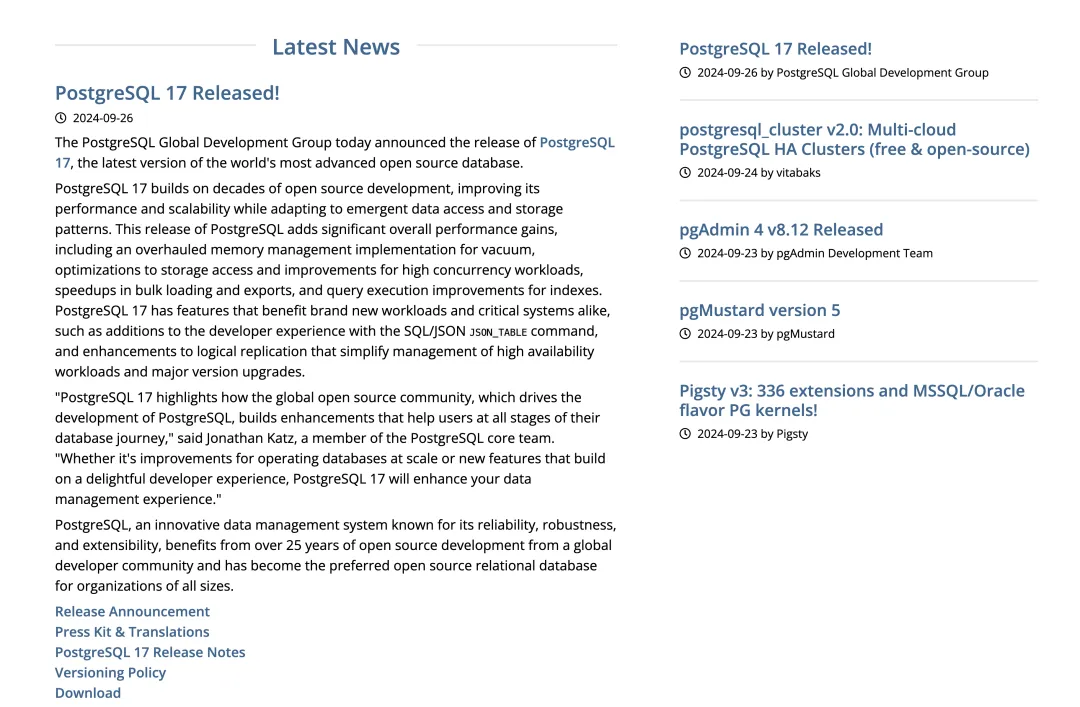

As the old saying goes, never release code on Friday. Although PostgreSQL’s recent minor release carefully avoided a Friday launch, it still gave the community a full week of extra work — PostgreSQL will release an unscheduled emergency update next Thursday: PostgreSQL 17.2, 16.6, 15.10, 14.15, 13.20, and even 12.22 for the just-EOLed PG 12.

This is the first time in a decade that such a situation has occurred: on the very day of PostgreSQL’s release, the new version was pulled due to issues discovered by the community. There are two reasons for this emergency release. First, to fix the CVE-2024-10978 security vulnerability, which isn’t a major concern. The real problem is that the new PostgreSQL minor version modified its ABI, causing extensions that depend on ABI stability — like TimescaleDB — to crash.

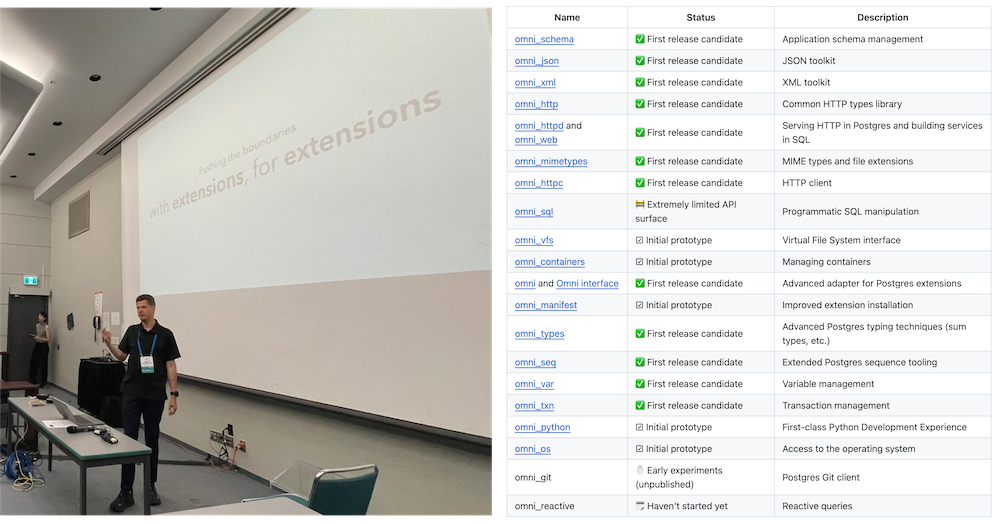

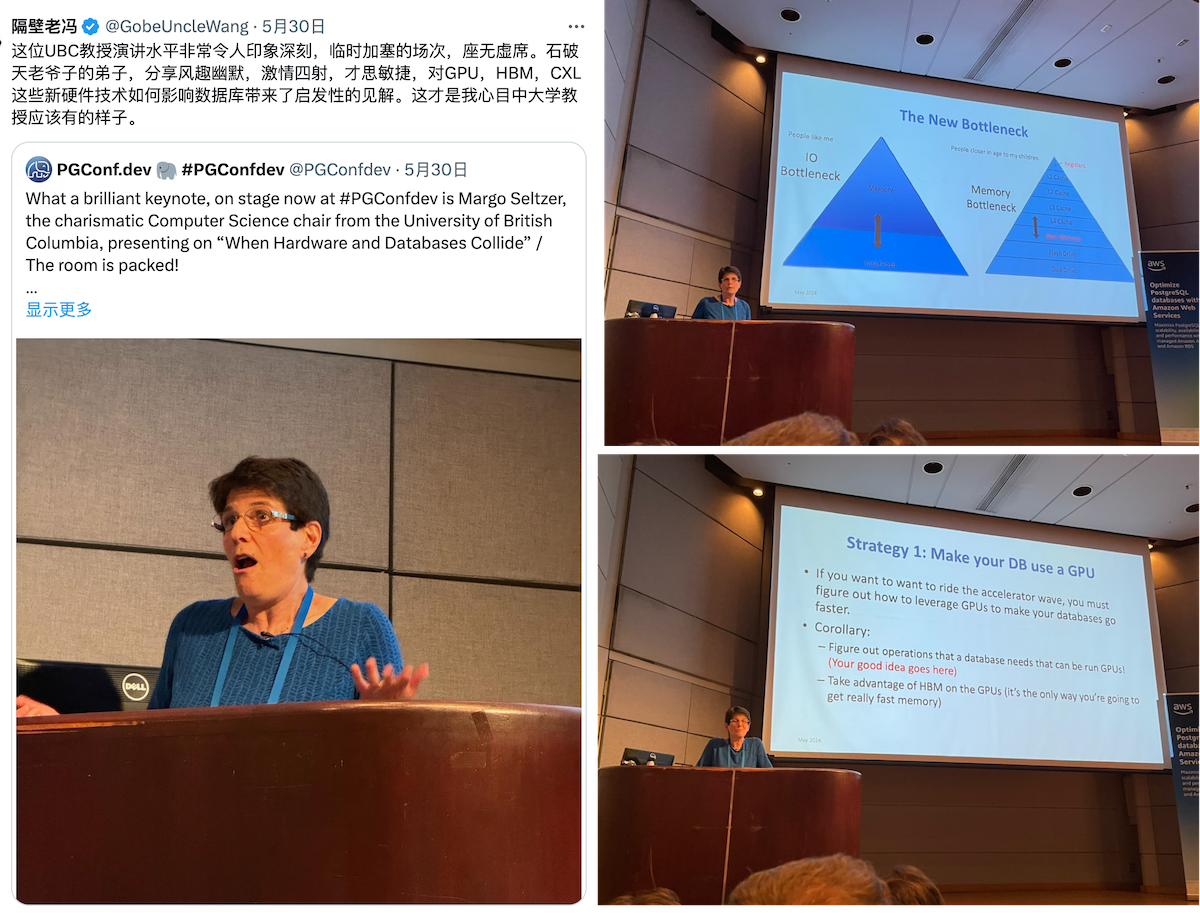

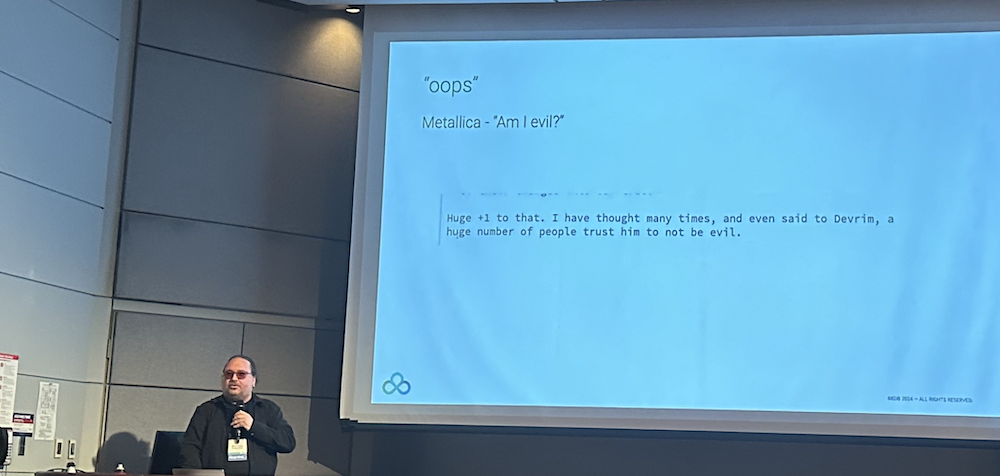

The issue of PostgreSQL minor version ABI compatibility was actually raised by Yuri back in June at PGConf 2024. During the extensions summit and his talk “Pushing boundaries with extensions, for extension”, he brought up this concern, but it didn’t receive much attention. Now it has exploded spectacularly, and I imagine Yuri is probably shrugging his shoulders saying: “Told you so.”

In short, the PostgreSQL community strongly recommends that users do not upgrade PostgreSQL in the coming week. Tom Lane has proposed releasing an unscheduled emergency minor version next Thursday to roll back these changes, overwriting the older 17.1, 16.5, and so on — essentially treating the problematic versions as if they “never existed.” Consequently, Pigsty 3.1, which was scheduled for release in the next couple of days and set to use the latest PostgreSQL 17.1 by default, will also be delayed by a week.

Overall, I believe this incident will have a positive impact. First, it’s not a quality issue with the core kernel itself. Second, because it was discovered early enough — on the very day of release — and promptly halted, there was no substantial impact on users. Unlike vulnerabilities in other databases/chips/operating systems that cause widespread damage upon discovery, this was caught early. Apart from a few overzealous update enthusiasts or unfortunate new installations, there shouldn’t be much impact. This is similar to the recent xz backdoor incident, which was also discovered by PG core developer Peter during PostgreSQL testing, further highlighting the vitality and insight of the PostgreSQL ecosystem.

What Happened

On the morning of November 14th, an email appeared on the PostgreSQL Hackers mailing list mentioning that the new minor version had actually broken the ABI. This isn’t a problem for the PostgreSQL database kernel itself, but the ABI change broke the convention between the PG kernel and extension plugins, causing extensions like TimescaleDB to fail on the new PG minor version.

PostgreSQL extension plugins are provided for specific major versions on specific operating system distributions. For example, PostGIS, TimescaleDB, and Citus are built for major versions like PG 12, 13, 14, 15, 16, and 17 released each year. Extensions built for PG 16.0 are generally expected to continue working on PG 16.1, 16.2, … 16.x. This means you can perform rolling upgrades of the PG kernel’s minor versions without worrying about extension plugin issues.

However, this isn’t an explicit promise but rather an implicit community understanding — ABI belongs to internal implementation details and shouldn’t have such promises or expectations. PostgreSQL has simply performed too well in the past, and everyone has grown accustomed to this behavior, making it a default working assumption reflected in various aspects including PGDG repository package naming and installation scripts.

This time, though, PG 17.1 and the backported versions to 16-12 modified the size of an internal structure, which can cause — extensions compiled for PG 17.0 when used on 17.1 — potential conflicts resulting in illegal writes or program crashes. Note that this issue doesn’t affect users of the PostgreSQL kernel itself; PostgreSQL has internal assertions to check for such situations.

However, for users of extensions like TimescaleDB, this means if you don’t use extensions recompiled for the current minor version, you’ll face such security risks. Given the current maintenance logic of PGDG repositories, extension plugins are only compiled against the latest PG minor version when a new extension version is released.

Regarding the PostgreSQL ABI issue, Marco Slot from CrunchyData wrote a detailed thread explaining it. Available for professional readers to reference.

https://x.com/marcoslot/status/1857403646134153438

How to Avoid Such Problems

As I mentioned previously in “PG’s Ultimate Achievement: The Most Complete PG Extension Repository”, I maintain a repository of many PG extension plugins for EL and Debian/Ubuntu, covering nearly half of the extensions in the entire PG ecosystem.

The PostgreSQL ABI issue was actually mentioned by Yuri before. As long as your extension plugins are compiled for the PostgreSQL minor version you’re currently using, there won’t be any problems. That’s why I recompile and package these extension plugins whenever a new minor version is released.

Last month, I had just finished compiling all the extension plugins for 17.0, and was about to start updates for compiling the 17.1 version. It looks like that won’t be necessary now, as 17.2 will roll back the ABI changes. While this means extensions compiled on 17.0 can continue to be used, I’ll still recompile and package against PG 17.2 and other main versions after 17.2 is released.

If you’re in the habit of installing PostgreSQL and extension plugins from the internet and don’t promptly upgrade minor versions, you’ll indeed face this security risk — where your newly installed extensions aren’t compiled for your older kernel version and crash due to ABI conflicts.

To be honest, I’ve encountered this problem in the real world quite early on, which is why when developing Pigsty, an out-of-the-box PostgreSQL distribution, I chose from Day 1 to first download all necessary packages and their dependencies locally, build a local software repository, and then provide Yum/Apt repositories to all nodes that need them. This approach ensures that all nodes in the environment install the same versions, and that it’s a consistent snapshot — the extension versions match the kernel version.

Moreover, this approach achieves the requirement of “independent control,” meaning that after your deployment goes live, you won’t encounter absurd situations like — the original software source shutting down or moving, or simply the upstream repository releasing an incompatible new version or new dependency, leading to major failures when setting up new machines/instances. This means you have a complete software copy for replication/expansion, with the ability to keep your services running indefinitely without worrying about someone “truly cutting off your lifeline.”

For example, when 17.1 was recently released, RedHat updated the default version of LLVM from 17 to 18 just two days prior, and unfortunately only updated EL8 without updating EL9. If users chose to install from the internet upstream at this time, it would fail directly. After I raised this issue to Devrim, he spent two hours fixing it by adding LLVM-18 to the EL9-specific patch Fix repository.

PS: If you didn’t know about this independent repository, you’d probably continue to encounter issues even after the fix, until RedHat fixed the problem themselves. But Pigsty would handle all these dirty details for you.

Some might say they could solve such version problems using Docker, which is certainly true. However, running databases in Docker comes with other issues, and these Docker images essentially use the operating system’s package manager in their Dockerfiles to download RPM/DEB packages from official repositories. Ultimately, someone has to do this work…

Of course, adapting to different operating systems means a significant maintenance workload. For example, I maintain 143 PG extension plugins for EL and 144 for Debian, each needing to be compiled for 10 major operating system versions (EL 8/9, Ubuntu 22/24, Debian 12, five major systems, amd64 and arm64) and 6 database major versions (PG 17-12). The combination of these elements means there are nearly 10,000 packages to build/test/distribute, including twenty Rust extensions that take half an hour to compile… But honestly, since it’s all semi-automated pipeline work, changing from running once a year to once every 3 months is acceptable.

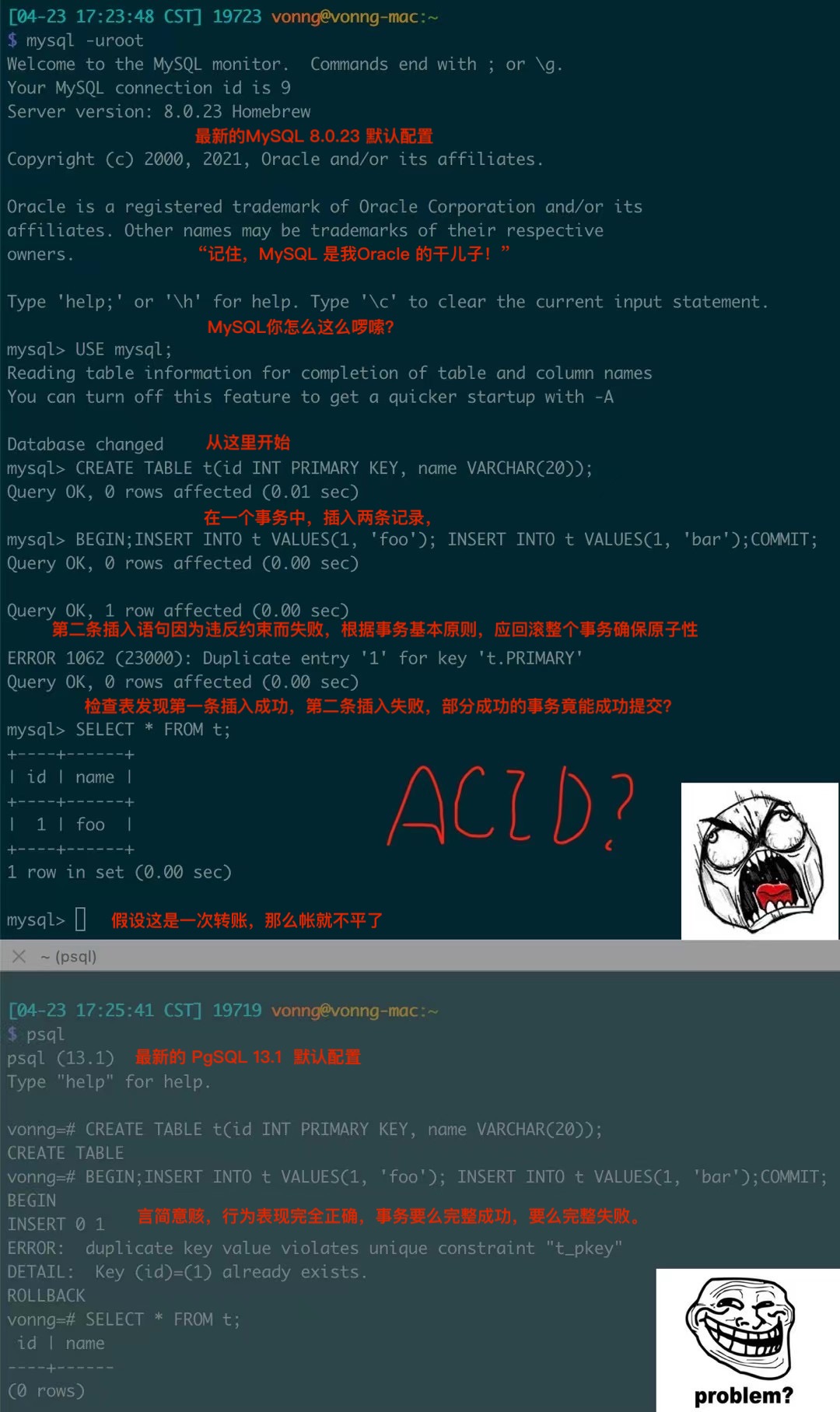

Appendix: Explanation of the ABI Issue

About the PostgreSQL extension ABI issue in the latest patch versions (17.1, 16.5, etc.)

C code in PostgreSQL extensions includes headers from PostgreSQL itself. When an extension is compiled, functions from the headers are represented as abstract symbols in the binary. These symbols are linked to actual function implementations when the extension is loaded, based on function names. This way, an extension compiled for PostgreSQL 17.0 can typically still load into PostgreSQL 17.1, as long as function names and signatures in the headers haven’t changed (i.e., the Application Binary Interface or “ABI” is stable).

Headers also declare structures (passed as pointers) to functions. Strictly speaking, structure definitions are also part of the ABI, but there are more subtleties here. After compilation, structures are primarily defined by their size and field offsets, so name changes don’t affect the ABI (though they affect the API). Size changes slightly affect the ABI. In most cases, PostgreSQL uses a macro (“makeNode”) to allocate structures on the heap, which looks at the compile-time size of the structure and initializes the bytes to 0.

The difference in 17.1 is that a new boolean was added to the ResultRelInfo structure, increasing its size. What happens next depends on who calls makeNode. If it’s code from PostgreSQL 17.1, it uses the new size. If it’s an extension compiled for 17.0, it uses the old size. When it calls PostgreSQL functions with a pointer allocated using the old size, PostgreSQL functions still assume the new size and may write beyond the allocated block. Generally, this is quite problematic. It can lead to bytes being written to unrelated memory areas or program crashes.

When running tests, PostgreSQL has internal checks (assertions) to detect this situation and throw warnings. However, PostgreSQL uses its own allocator, which always rounds up allocated bytes to powers of 2. The ResultRelInfo structure is 376 bytes (on my laptop), so it rounds up to 512 bytes, and similarly after the change (384 bytes on my laptop). Therefore, this particular structure change typically doesn’t affect allocation size. There might be uninitialized bytes, but this is usually resolved by calling InitResultRelInfo.

This issue mainly raises warnings in tests or assertion-enabled builds where extensions allocate ResultRelInfo, especially when running those tests with extension binaries compiled against older PostgreSQL versions. Unfortunately, the story doesn’t end there. TimescaleDB is a heavy user of ResultRelInfo and indeed encountered problems with the size change. For example, in one of its code paths, it needs to find an index in an array of ResultRelInfo pointers, for which it performs pointer arithmetic. This array is allocated by PostgreSQL (384 bytes), but the Timescale binary assumes 376 bytes, resulting in a meaningless number that triggers assertion failures or segfaults. https://github.com/timescale/timescaledb/blob/2.17.2/src/nodes/hypertable_modify.c#L1245…

The code here isn’t actually wrong, but the contract with PostgreSQL isn’t as expected. This is an interesting lesson for all of us. Similar issues might exist in other extensions, though not many extensions are as advanced as Timescale. Another advanced extension is Citus, but I’ve verified that Citus is safe. It does show assertion warnings. Everyone is advised to be cautious. The safest approach is to ensure extensions are compiled with headers from the PostgreSQL version you’re running.

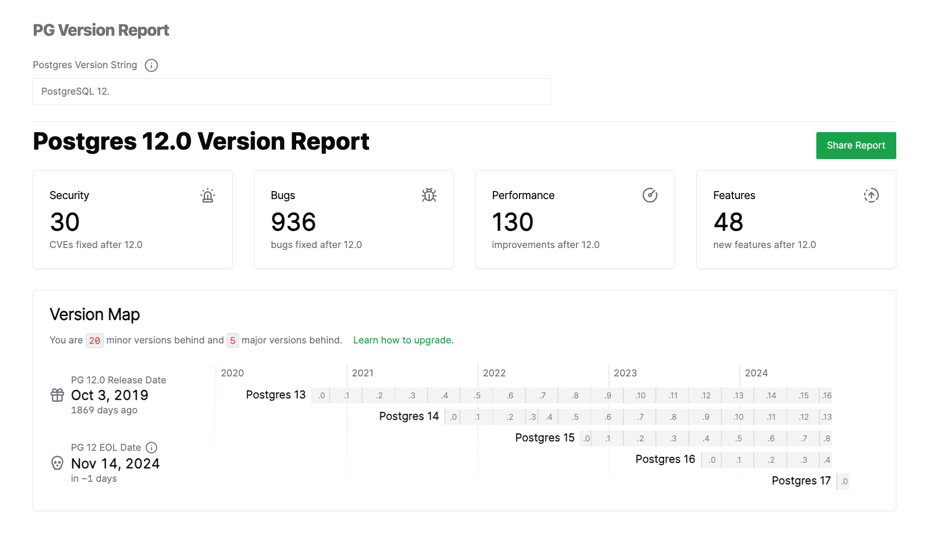

PostgreSQL 12 Reaches End-of-Life as PG 17 Takes the Throne

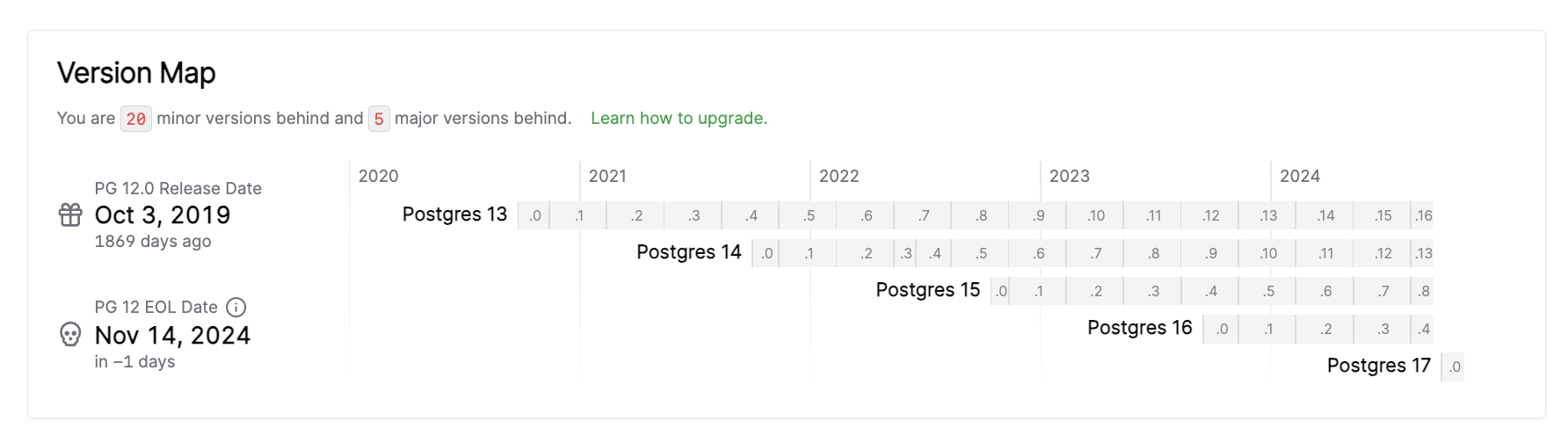

According to PostgreSQL’s versioning policy, PostgreSQL 12, released in 2019, officially exits its support lifecycle today (November 14, 2024).

PostgreSQL 12’s final minor version, 12.21, released today (November 14, 2024), marks the end of the road for PG 12. Meanwhile, the newly released PostgreSQL 17.1 emerges as the ideal choice for new projects.

| Version | Current minor | Supported | First Release | Final Release |

|---|---|---|---|---|

| 17 | 17.1 | Yes | September 26, 2024 | November 8, 2029 |

| 16 | 16.5 | Yes | September 14, 2023 | November 9, 2028 |

| 15 | 15.9 | Yes | October 13, 2022 | November 11, 2027 |

| 14 | 14.14 | Yes | September 30, 2021 | November 12, 2026 |

| 13 | 13.17 | Yes | September 24, 2020 | November 13, 2025 |

| 12 | 12.21 | No | October 3, 2019 | November 14, 2024 |

Farewell to PG 12

Over the past five years, PostgreSQL 12.20 (the previous minor version) addressed 34 security issues and fixed 936 bugs compared to PostgreSQL 12.0 released five years ago.

This final release (12.21) patches four CVE security vulnerabilities and includes 17 bug fixes. From this point forward, PostgreSQL 12 enters retirement, with no further security or error fixes.

- CVE-2024-10976: PostgreSQL row security ignores user ID changes in certain contexts (e.g., subqueries)

- CVE-2024-10977: PostgreSQL libpq preserves error messages from man-in-the-middle attacks

- CVE-2024-10978: PostgreSQL SET ROLE, SET SESSION AUTHORIZATION resets to incorrect user ID

- CVE-2024-10979: PostgreSQL PL/Perl environment variable changes execute arbitrary code

The risks of running outdated versions will continue to increase over time. Users still running PG 12 or earlier versions in production should develop an upgrade plan to a supported major version (13-17).

PostgreSQL 12, released five years ago, was a milestone release in my view - the most significant since PG 10. Notably, PG 12 introduced pluggable storage engine interfaces, allowing third parties to develop new storage engines. It also delivered major observability and usability improvements, such as real-time progress reporting for various tasks and csvlog format for easier log processing and analysis. Additionally, partitioned tables saw significant performance improvements and matured considerably.

My personal connection to PG 12 runs deep - when I created Pigsty, an out-of-the-box PostgreSQL database distribution, PG 12 was the first major version we publicly supported. It’s remarkable how five years have flown by; I still vividly remember adapting features from PG 11 to PG 12.

During these five years, Pigsty evolved from a personal PG monitoring system/testing sandbox into a widely-used open source project with global community recognition. Looking back, I can’t help but feel a sense of accomplishment.

PG 17 Takes the Throne

As one version departs, another ascends. Following PostgreSQL’s versioning policy, today’s routine quarterly release brings us PostgreSQL 17.1.

My friend Longxi Shuai from Qunar likes to upgrade immediately when a new PG version drops, while I prefer to wait for the first minor release after a major version launch.

Typically, many small fixes and refinements appear in the x.1 release following a major version. Additionally, the three-month buffer provides enough time for the PostgreSQL ecosystem of extensions to catch up and support the new major version - a crucial consideration for users of the PG ecosystem.

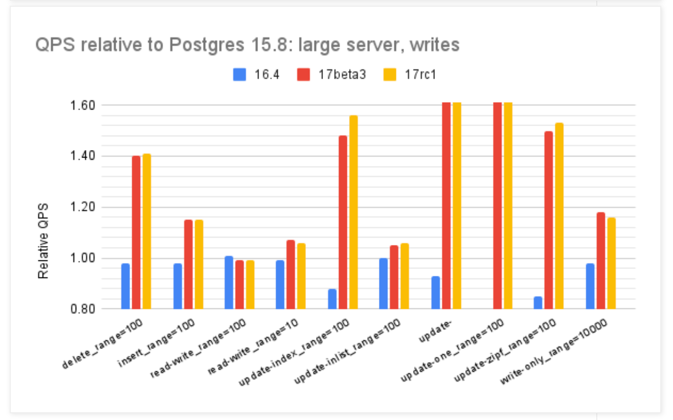

From PG 12 to the current PG 17, the PostgreSQL community has added 48 new feature enhancements and introduced 130 performance improvements. PostgreSQL 17’s write throughput, according to official statements, shows up to a 2x improvement in some scenarios compared to previous versions - making it well worth the upgrade.

https://smalldatum.blogspot.com/2024/09/postgres-17rc1-vs-sysbench-on-small.html

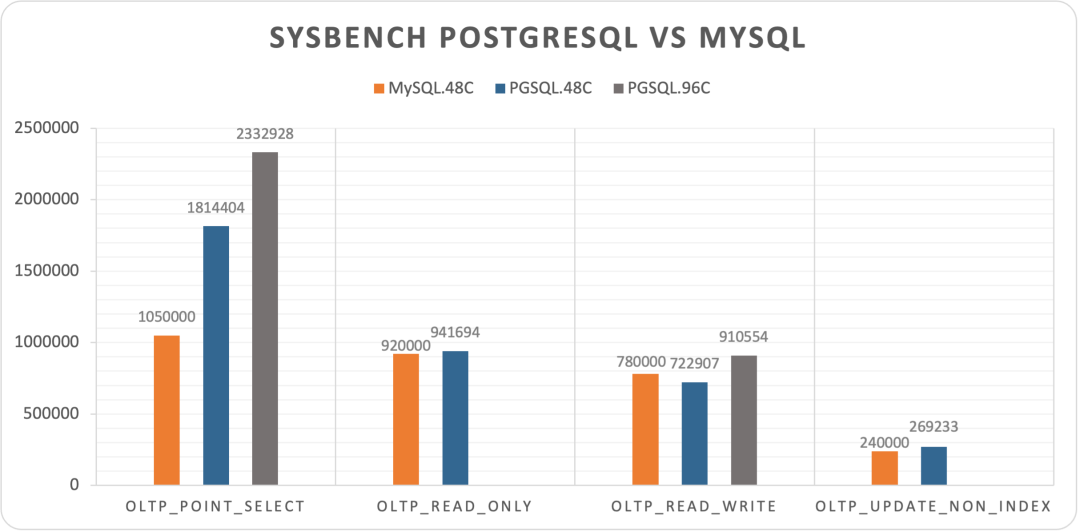

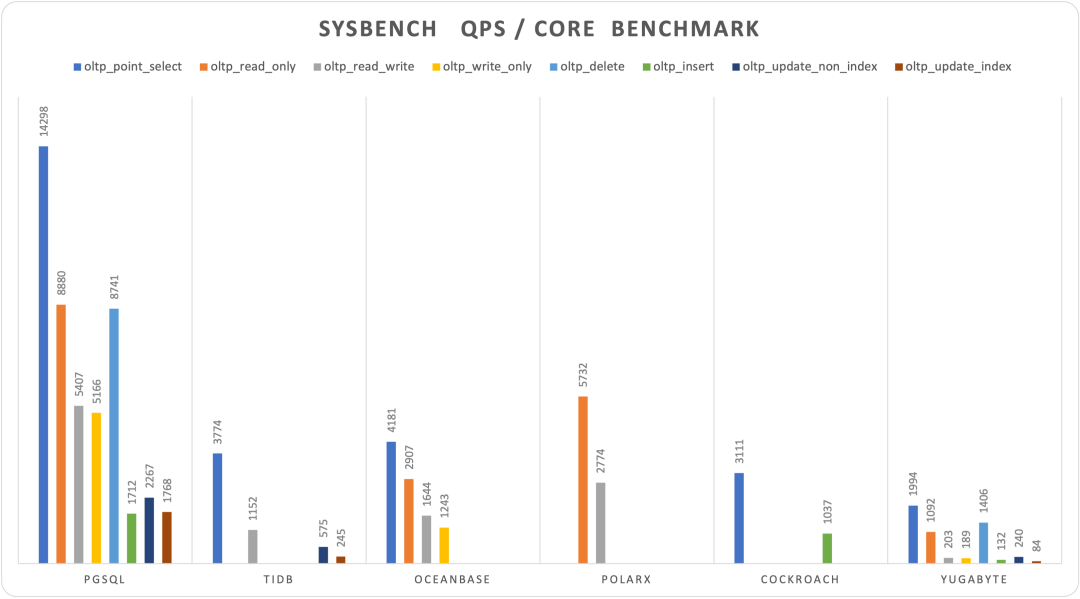

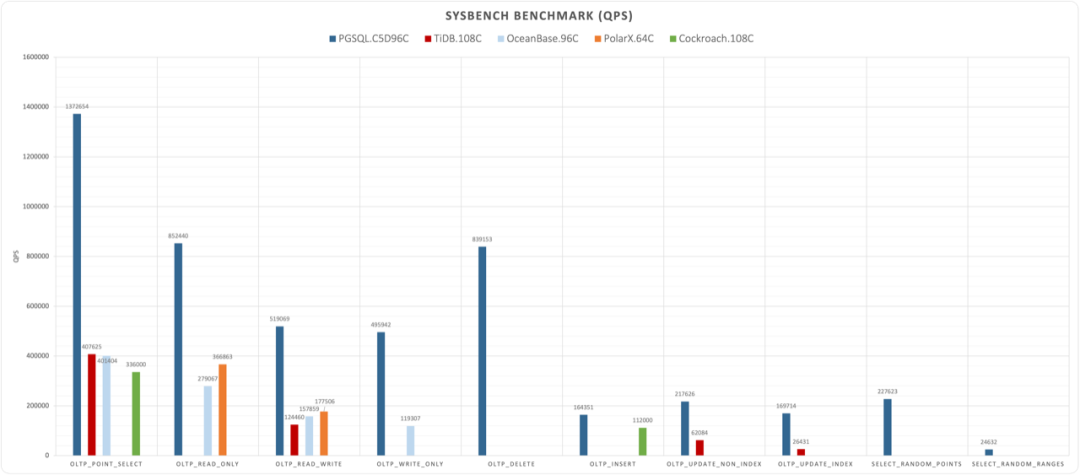

I conducted a comprehensive performance evaluation of PostgreSQL 14 three years ago, and I’m planning to run a fresh benchmark on PostgreSQL 17.1.

I recently acquired a beast of a physical machine: 128 cores, 256GB RAM, with four 3.2TB Gen4 NVMe SSDs plus a hardware NVMe RAID acceleration card. I’m eager to see what performance PostgreSQL, pgvector, and various OLAP extensions can achieve on this hardware monster - stay tuned for the results.

Overall, I believe 17.1’s release represents an opportune time to upgrade. I plan to release Pigsty v3.1 in the coming days, which will promote PG 17 as Pigsty’s default major version, replacing PG 16.

Considering that PostgreSQL has offered logical replication since 10.0, and Pigsty provides a complete solution for blue-green deployment upgrades using logical replication without downtime, major version upgrades are far less challenging than they once were. I’ll soon publish a tutorial on zero-downtime major version upgrades to help users seamlessly upgrade from PostgreSQL 16 or earlier versions to PG 17.

PG 17 Extensions

One particularly encouraging development is how quickly the PostgreSQL extension ecosystem has adapted to PG 17 compared to the transition from PG 15 to PG 16.

Last year, PG 16 was released in mid-September, but it took nearly six months for major extensions to catch up. For instance, TimescaleDB, a core extension in the PG ecosystem, only completed PG 16 support in early February with version 2.13. Other extensions followed similar timelines.

Only after PG 16 had been out for six months did it reach a satisfactory state. That’s when Pigsty promoted PG 16 to its default major version, replacing PG 15.

| Version | Date | Summary | Link |

|---|---|---|---|

| v3.1.0 | 2024-11-20 | PG 17 as default, config simplification, Ubuntu 24 & ARM support | WIP |

| v3.0.4 | 2024-10-30 | PG 17 extensions, OLAP suite, pg_duckdb | v3.0.4 |

| v3.0.3 | 2024-09-27 | PostgreSQL 17, Etcd maintenance optimizations, IvorySQL 3.4, PostGIS 3.5 | v3.0.3 |

| v3.0.2 | 2024-09-07 | Streamlined installation, PolarDB 15 support, monitor view updates | v3.0.2 |

| v3.0.1 | 2024-08-31 | Routine fixes, Patroni 4 support, Oracle compatibility improvements | v3.0.1 |

| v3.0.0 | 2024-08-25 | 333 extensions, pluggable kernels, MSSQL, Oracle, PolarDB compatibility | v3.0.0 |

| v2.7.0 | 2024-05-20 | Extension explosion with 20+ powerful new extensions and Docker applications | v2.7.0 |

| v2.6.0 | 2024-02-28 | PG 16 as default, introduced ParadeDB and DuckDB extensions | v2.6.0 |

| v2.5.1 | 2023-12-01 | Routine minor update, key PG16 extension support | v2.5.1 |

| v2.5.0 | 2023-09-24 | Ubuntu/Debian support: bullseye, bookworm, jammy, focal | v2.5.0 |

| v2.4.1 | 2023-09-24 | Supabase/PostgresML support & new extensions: graphql, jwt, pg_net, vault | v2.4.1 |

| v2.4.0 | 2023-09-14 | PG16, RDS monitoring, service consulting, new extensions: Chinese FTS/graph/HTTP/embeddings | v2.4.0 |

| v2.3.1 | 2023-09-01 | PGVector with HNSW, PG 16 RC1, docs overhaul, Chinese docs, routine fixes | v2.3.1 |

| v2.3.0 | 2023-08-20 | Host VIP, ferretdb, nocodb, MySQL stub, CVE fixes | v2.3.0 |

| v2.2.0 | 2023-08-04 | Dashboard & provisioning overhaul, UOS compatibility | v2.2.0 |

| v2.1.0 | 2023-06-10 | Support for PostgreSQL 12 ~ 16beta | v2.1.0 |

| v2.0.2 | 2023-03-31 | Added pgvector support, fixed MinIO CVE | v2.0.2 |

| v2.0.1 | 2023-03-21 | v2 bug fixes, security enhancements, Grafana version upgrade | v2.0.1 |

| v2.0.0 | 2023-02-28 | Major architectural upgrade, significantly improved compatibility, security, maintainability | v2.0.0 |

This time, the ecosystem adaptation from PG 16 to PG 17 has accelerated significantly - completing in less than three months what previously took six. I’m proud to say I’ve contributed substantially to this effort.

As I described in “PostgreSQL’s Ultimate Power: The Most Complete Extension Repository”, I maintain a repository that covers over half of the extensions in the PG ecosystem.

I recently completed the massive task of building over 140 extensions for PG 17 (also adding Ubuntu 24.04 and partial ARM support), while personally fixing or coordinating fixes for dozens of extensions with compatibility issues. The result: on EL systems, 301 out of 334 available extensions now work on PG 17, while on Debian systems, 302 out of 326 extensions are PG 17-compatible.

| Entry / Filter | All | PGDG | PIGSTY | CONTRIB | MISC | MISS | PG17 | PG16 | PG15 | PG14 | PG13 | PG12 |

|---|---|---|---|---|---|---|---|---|---|---|---|---|

| RPM Extension | 334 | 115 | 143 | 70 | 4 | 6 | 301 | 330 | 333 | 319 | 307 | 294 |

| DEB Extension | 326 | 104 | 144 | 70 | 4 | 14 | 302 | 322 | 325 | 316 | 303 | 293 |

Pigsty has achieved grand unification of the PostgreSQL extension ecosystem

Among major extensions, only a few remain without PG 17 support: the distributed extension Citus, the columnar storage extension Hydra, graph database extension AGE, and PGML. However, all other powerful extensions are now PG 17-ready.

Particularly noteworthy is the recent OLAP DuckDB integration competition in the PG ecosystem. ParadeDB’s pg_analytics, personal developer Hongyan Li’s duckdb_fdw, CrunchyData’s pg_parquet, MooncakeLab’s pg_mooncake, and even pg_duckdb from Hydra and DuckDB’s parent company MotherDuck - all now support PG 17 and are available in the Pigsty extension repository.

Considering that Citus has a relatively small user base, and the columnar Hydra already has numerous DuckDB extensions as alternatives, I believe PG 17 has reached a satisfactory state for extension support and is ready for production use as the primary major version. Achieving this milestone took about half the time it required for PG 16.

About Pigsty v3.1

Pigsty is a free and open-source, out-of-the-box PostgreSQL database distribution that allows users to deploy enterprise-grade RDS cloud database services locally with a single command, helping users leverage PostgreSQL - the world’s most advanced open-source database.

PostgreSQL is undoubtedly becoming the Linux kernel of the database world, and Pigsty aims to be its Debian distribution. Our PostgreSQL database distribution offers six key value propositions:

- Provides the most comprehensive extension support in the PostgreSQL ecosystem

- Delivers the most powerful and complete monitoring system in the PostgreSQL ecosystem

- Offers out-of-the-box, user-friendly tools and best practices

- Provides self-healing, low-maintenance high availability and PITR experience

- Delivers reliable deployment directly on bare OS without containers

- No vendor lock-in, a democratized RDS experience with full control

Worth mentioning, we’ve added PG-derived kernel replacement capabilities in Pigsty v3, allowing you to use derivative PG kernels for unique features and capabilities:

- Microsoft SQL Server-compatible Babelfish kernel support

- Oracle-compatible IvorySQL 3.4 kernel support

- Alibaba Cloud PolarDB for PostgreSQL/Oracle kernel support

- Easier self-hosting of Supabase - the open-source Firebase alternative and all-in-one backend platform

If you’re looking for an authentic PostgreSQL experience, we welcome you to try our distribution - it’s open-source, free, and comes with no vendor lock-in. We also offer commercial consulting support to solve challenging issues and provide peace of mind.

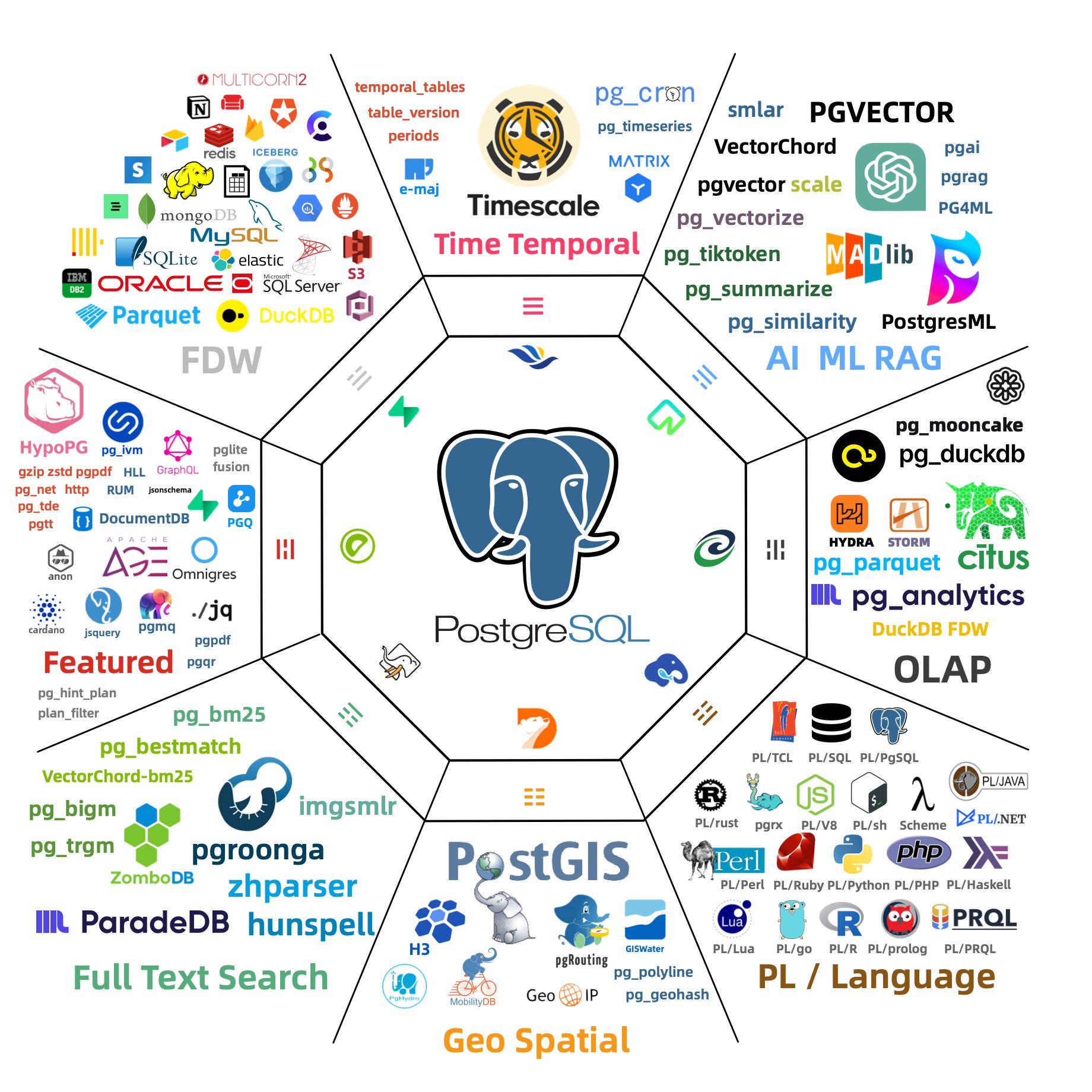

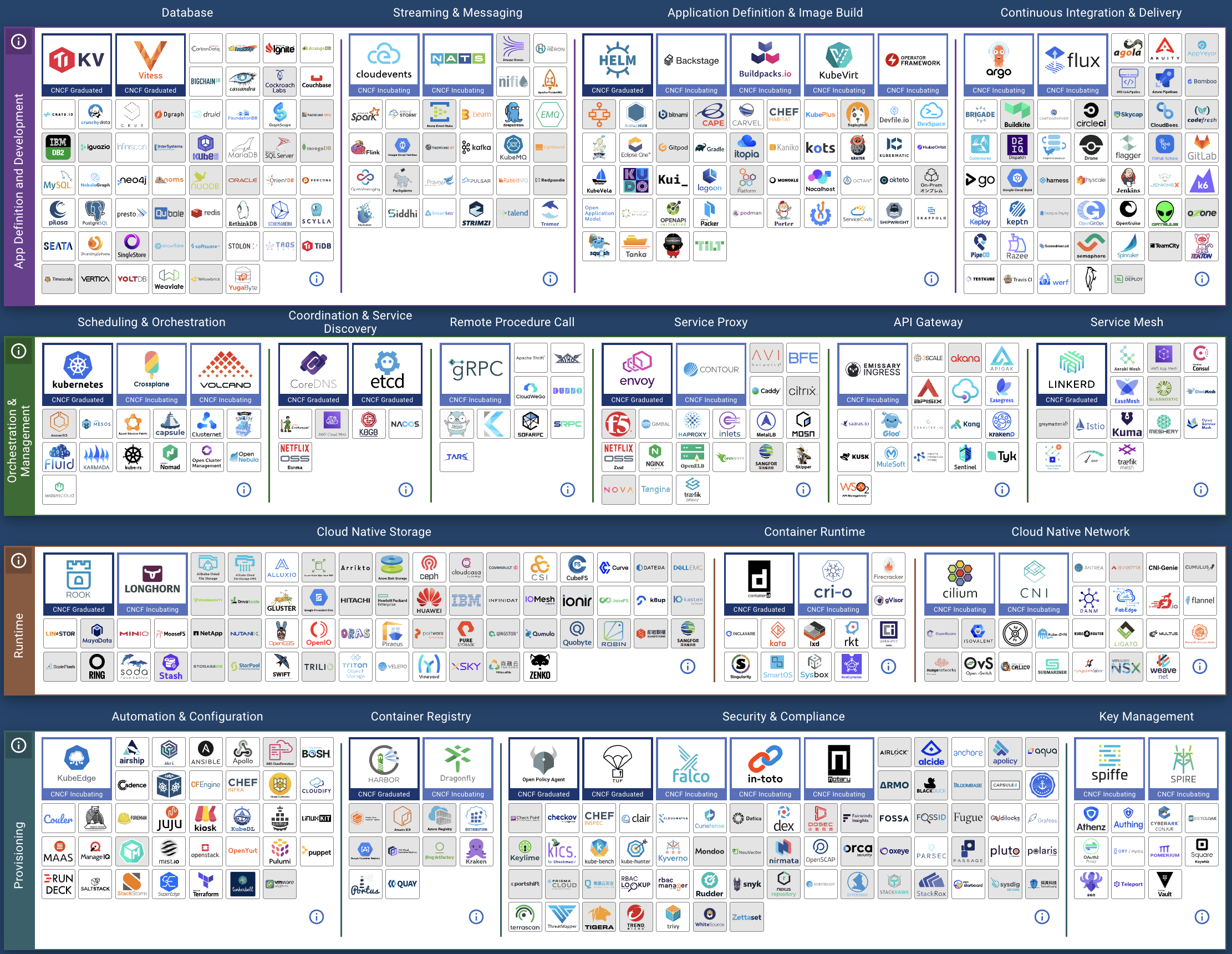

The idea way to install PostgreSQL Extensions

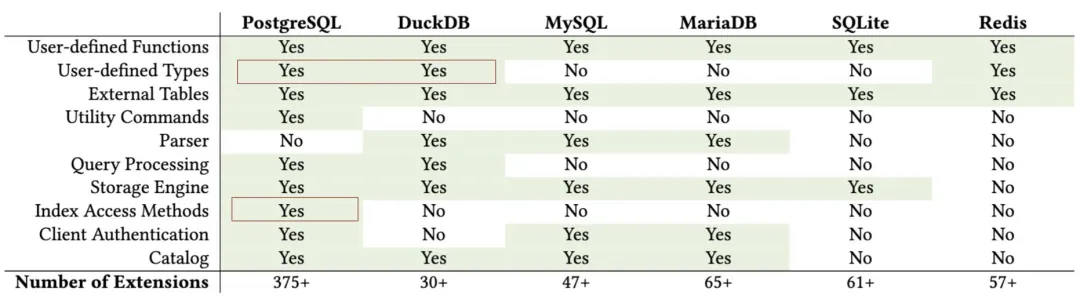

PostgreSQL Is Eating the Database World through the power of extensibility. With 400 extensions powering PostgreSQL, we may not say it’s invincible, but it’s definitely getting much closer.

I believe the PostgreSQL community has reached a consensus on the importance of extensions. So the real question now becomes: “What should we do about it?”

What’s the primary problem with PostgreSQL extensions? In my opinion, it’s their accessibility. Extensions are useless if most users can’t easily install and enable them. But it’s not that easy.

Even the largest cloud postgres vendors are struggling with this. They have some inherent limitations (multi-tenancy, security, licensing) that make it hard for them to fully address this issue.

So here’s my plan, I’ve created a repository that hosts 400 of the most capable extensions in the PostgreSQL ecosystem, available as RPM / DEB packages on mainstream Linux OS distros. And the goal is to take PostgreSQL one solid step closer to becoming the all-powerful database and achieve the great alignment between the Debian and EL OS ecosystems.

The status quo

The PostgreSQL ecosystem is rich with extensions, but how do you actually install and use them? This initial hurdle becomes a roadblock for many. There are some existing solutions:

PGXN says, “You can download and compile extensions on the fly with pgxnclient.”

Tembo says, “We have prepared pre-configured extension stack as Docker images.”

StackGres & Omnigres says, “We download .so files on the fly.” All solid ideas.

While based on my experience, the vast majority of users still rely on their operating system’s package manager to install PG extensions. On-the-fly compilation and downloading shared libraries might not be a viable option for production env. Since many DB setups don’t have internet access or a proper toolchain ready.

In the meantime, Existing OS package managers like yum/dnf/apt already solve issues like dependency resolution, upgrades, and version management well.

There’s no need to reinvent the wheel or disrupt existing standards. So the real question is: Who’s going to package these extensions into ready-to-use software?

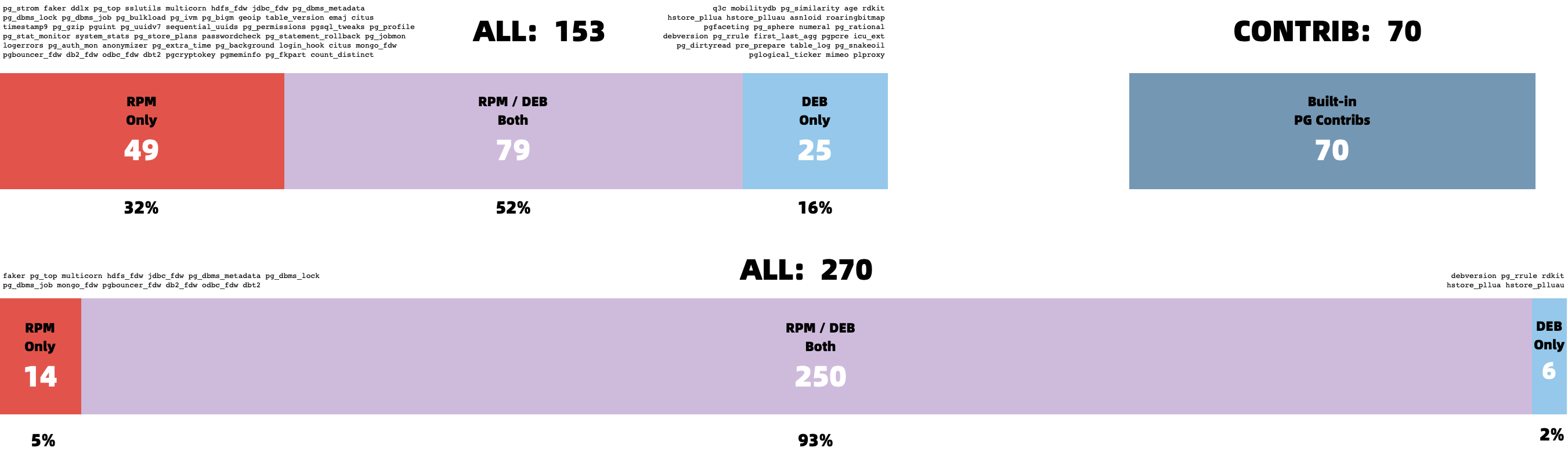

PGDG has already made a fantastic effort with official YUM and APT repositories. In addition to the 70 built-in Contrib extensions bundled with PostgreSQL,the PGDG YUM repo offers 128 RPM extensions, while the APT repo offers 104 DEB extensions. These extensions are compiled and packaged in the same environment as the PostgreSQL kernel, making them easy to install alongside the PostgreSQL binary packages. In fact, even most PostgreSQL Docker images rely on the PGDG repo to install extensions.

I’m deeply grateful for Devrim’s maintenance of the PGDG YUM repo and Christoph’s work with the APT repo. Their efforts to make PostgreSQL installation and extension management seamless are incredibly valuable. But as a distribution creator myself, I’ve encountered some challenges with PostgreSQL extension distribution.

What’s the challenge?

The first major issue facing extension users is Alignment.

In the two primary Linux distro camps — Debian and EL — there’s a significant number of PostgreSQL extensions. Excluding the 70 built-in Contrib extensions bundled with PostgreSQL, the YUM repo offers 128 extensions, and the APT repo provides 104.

However, when we dig deeper, we see that alignment between the two repos is not ideal. The combined total of extensions across both repos is 153, but the overlap is just 79. That means only half of the extensions are available in both ecosystems!

Only half of the extensions are available in both EL and Debian ecosystems!

Next, we run into further alignment issues within each ecosystem itself. The availability of extensions can vary between different major OS versions.

For instance, pljava, sequential_uuids, and firebird_fdw are only available in EL9, but not in EL8. Similarly, rdkit is available in Ubuntu 22+ / Debian 12+, but not in Ubuntu 20 / Debian 11.

There’s also the issue of architecture support. For example, citus does not provide arm64 packages in the Debian repo.

And then we have alignment issues across different PostgreSQL major versions. Some extensions won’t compile on older PostgreSQL versions, while others won’t work on newer ones. Some extensions are only available for specific PostgreSQL versions in certain distributions, and so on.

These alignment issues lead to a significant number of permutations. For example, if we consider five mainstream OS distributions (el8, el9, debian12, ubuntu22, ubuntu24),

two CPU architectures (x86_64 and arm64), and six PostgreSQL major versions (12–17), that’s 60-70 RPM/DEB packages per extension—just for one extension!

On top of alignment, there’s the problem of completeness. PGXN lists over 375 extensions, but the PostgreSQL ecosystem could have as many as 1,000+. The PGDG repos, however, contain only about one-tenth of them.

There are also several powerful new Rust-based extensions that PGDG doesn’t include, such as pg_graphql, pg_jsonschema, and wrappers for self-hosting Supabase;

pg_search as an Elasticsearch alternative; and pg_analytics, pg_parquet, pg_mooncake for OLAP processing. The reason? They are too slow to compile…

What’s the solution?

Over the past six months, I’ve focused on consolidating the PostgreSQL extension ecosystem. Recently, I reached a milestone I’m quite happy with. I’ve created a PG YUM/APT repository with a catalog of 400available PostgreSQL extensions.

Here are some key stats for the repo: It hosts 400 extensions in total. Excluding the 70 built-in extensions that come with PostgreSQL, this leaves 270 third-party extensions. Of these, about half are maintained by the official PGDG repos (126 RPM, 102 DEB). The other half (131 RPM, 143DEB) are maintained, fixed, compiled, packaged, and distributed by myself.

| OS \ Entry | All | PGDG | PIGSTY | CONTRIB | MISC | MISS | PG17 | PG16 | PG15 | PG14 | PG13 | PG12 |

|---|---|---|---|---|---|---|---|---|---|---|---|---|

| RPM | 334 | 115 | 143 | 70 | 4 | 6 | 301 | 330 | 333 | 319 | 307 | 294 |

| DEB | 326 | 104 | 144 | 70 | 4 | 14 | 302 | 322 | 325 | 316 | 303 | 293 |

For each extension, I’ve built versions for the 6 major PostgreSQL versions (12–17) across five popular Linux distributions: EL8, EL9, Ubuntu 22.04, Ubuntu 24.04, and Debian 12. I’ve also provided some limited support for older OS versions like EL7, Debian 11, and Ubuntu 20.04.

This repository also addresses most of the alignment issue. Initially, there were extensions in the APT and YUM repos that were unique to each, but I’ve worked to port as many of these unique extensions to the other ecosystem. Now, only 7 APT extensions are missing from the YUM repo, and 16 extensions are missing in APT—just 6% of the total. Many missing PGDG extensions have also been resolved.

I’ve created a comprehensive directory listing all supported extensions, with detailed info, dependency installation instructions, and other important notes.

I hope this repository can serve as the ultimate solution to the frustration users face when extensions are difficult to find, compile, or install.

How to use this repo?

Now, for a quick plug — what’s the easiest way to install and use these extensions?

The simplest option is to use the OSS PostgreSQL distribution: Pigsty. The repo is autoconfigured by default, so all you need to do is declare them in the config inventory.

For example, the self-hosting supabase template requires extensions that aren’t available in the PGDG repo. You can simply download, install, preload, config and create extensions by referring to their names.

all:

children:

pg-meta:

hosts: { 10.10.10.10: { pg_seq: 1, pg_role: primary } }

vars:

pg_cluster: pg-meta

# INSTALL EXTENSIONS

pg_extensions:

- supabase # essential extensions for supabase

- timescaledb postgis pg_graphql pg_jsonschema wrappers pg_search pg_analytics pg_parquet plv8 duckdb_fdw pg_cron pg_timetable pgqr

- supautils pg_plan_filter passwordcheck plpgsql_check pgaudit pgsodium pg_vault pgjwt pg_ecdsa pg_session_jwt index_advisor

- pgvector pgvectorscale pg_summarize pg_tiktoken pg_tle pg_stat_monitor hypopg pg_hint_plan pg_http pg_net pg_smtp_client pg_idkit

# LOAD EXTENSIONS

pg_libs: 'pg_stat_statements, plpgsql, plpgsql_check, pg_cron, pg_net, timescaledb, auto_explain, pg_tle, plan_filter'

# CONFIG EXTENSIONS

pg_parameters:

cron.database_name: postgres

pgsodium.enable_event_trigger: off

# CREATE EXTENSIONS

pg_databases:

- name: postgres

baseline: supabase.sql

schemas: [ extensions ,auth ,realtime ,storage ,graphql_public ,supabase_functions ,_analytics ,_realtime ]

extensions:

- { name: pgcrypto ,schema: extensions }

- { name: pg_net ,schema: extensions }

- { name: pgjwt ,schema: extensions }

- { name: uuid-ossp ,schema: extensions }

- { name: pgsodium }

- { name: supabase_vault }

- { name: pg_graphql }

- { name: pg_jsonschema }

- { name: wrappers }

- { name: http }

- { name: pg_cron }

- { name: timescaledb }

- { name: pg_tle }

- { name: vector }

vars:

pg_version: 17

# DOWNLOAD EXTENSIONS

repo_extra_packages:

- pgsql-main

- supabase # essential extensions for supabase

- timescaledb postgis pg_graphql pg_jsonschema wrappers pg_search pg_analytics pg_parquet plv8 duckdb_fdw pg_cron pg_timetable pgqr

- supautils pg_plan_filter passwordcheck plpgsql_check pgaudit pgsodium pg_vault pgjwt pg_ecdsa pg_session_jwt index_advisor

- pgvector pgvectorscale pg_summarize pg_tiktoken pg_tle pg_stat_monitor hypopg pg_hint_plan pg_http pg_net pg_smtp_client pg_idkit

To simply add extensions to existing clusters:

./infra.yml -t repo_build -e '{"repo_packages":[citus]}' # download

./pgsql.yml -t pg_extension -e '{"pg_extensions": ["citus"]}' # install

Through this repo was meant to be used with Pigsty, But it is not mandatory. You can still enable this repository on any EL/Debian/Ubuntu system with a simple one-liner in the shell:

APT Repo

For Ubuntu 22.04 & Debian 12 or any compatible platforms, use the following commands to add the APT repo:

curl -fsSL https://repo.pigsty.io/key | sudo gpg --dearmor -o /etc/apt/keyrings/pigsty.gpg

sudo tee /etc/apt/sources.list.d/pigsty-io.list > /dev/null <<EOF

deb [signed-by=/etc/apt/keyrings/pigsty.gpg] https://repo.pigsty.io/apt/infra generic main